Today's Overview

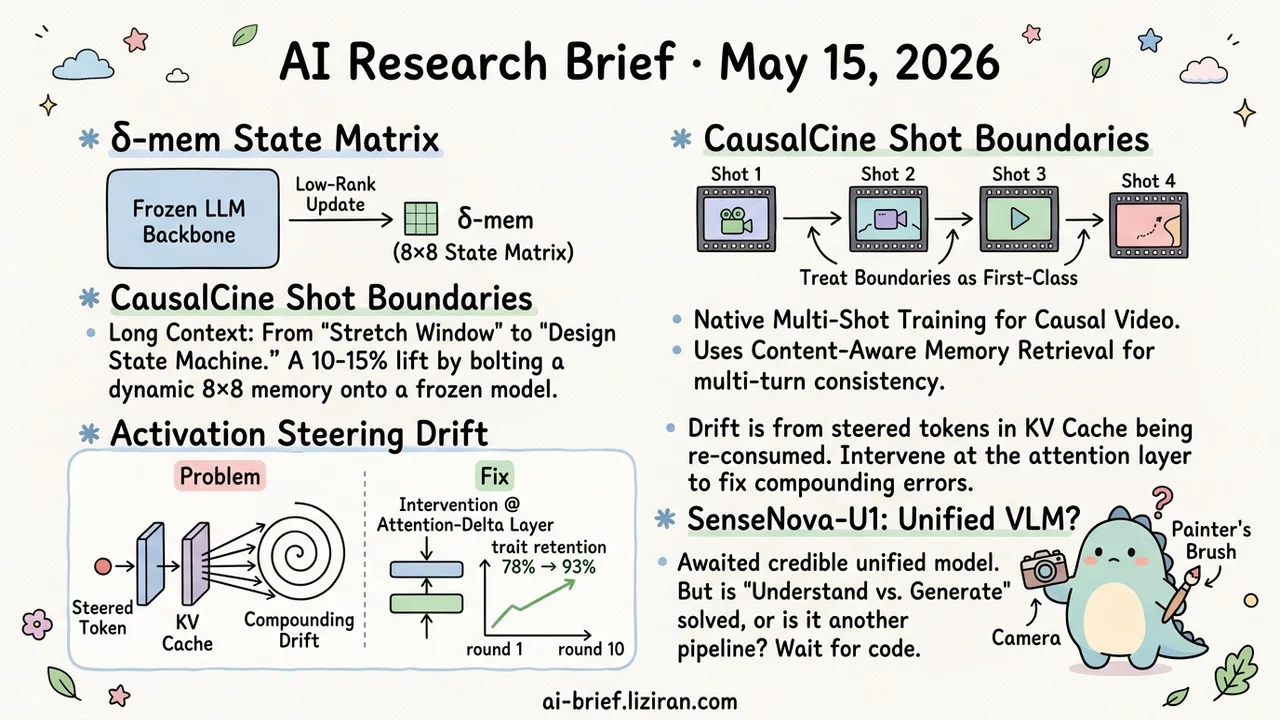

- δ-mem Bolts an 8×8 State Matrix Onto a Frozen Backbone. A delta-rule online update lifts average scores 10–15% over baselines, with larger gains on memory-heavy tasks. Reframes long context from "stretch the window" to "design a state machine."

- CausalCine Treats Shot Boundaries as First-Class Citizens. Native multi-shot training plus relevance-routed KV retrieval (CAMR) reaches bidirectional-model quality while keeping streaming interactivity.

- Multi-Turn Activation Steering Drift Has a Concrete Mechanism. Steered tokens land in KV cache and get re-consumed every step, compounding the drift. Move the intervention to the attention-delta layer and round-10 trait retention climbs from 78% to 93%.

- SenseNova-U1 Frames "Understand vs. Generate" as a Structural Problem. 157 upvotes show the community is waiting for a credible unified VLM, but the abstract alone can't tell you how it actually differs from Chameleon and Janus.

Featured

01 Can an 8×8 State Matrix Replace Ever-Longer Context?

The mainstream long-context recipe over the past two years has leaned hard on compute. Wider windows, fatter KV caches, more memory in exchange for more capacity. δ-mem reframes the question. Keep the frozen full-attention backbone untouched, attach a fixed 8×8 state matrix on the side, update it online with a delta rule, then inject the readout into attention as a low-rank correction.
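To make the online update concrete, here is a minimal NumPy sketch of a delta-rule write into a fixed 8×8 state. It assumes the classic delta-rule form `S <- S + beta * (v - S k) k^T` with a normalized key. The write rate `beta`, the function names, and the plain matrix-vector readout are illustrative assumptions, not δ-mem's actual parameterization.

```python
import numpy as np

D = 8  # the state is D x D, matching the 8x8 matrix described above


def delta_update(S, k, v, beta=0.5):
    """One online delta-rule write: nudge S so that S @ k moves toward v.

    Assumed form: S <- S + beta * (v - S @ k) k^T, with k normalized.
    """
    k = k / (np.linalg.norm(k) + 1e-8)
    error = v - S @ k                  # what the state currently gets wrong for key k
    return S + beta * np.outer(error, k)


def read(S, q):
    """Query the state; in this sketch the readout is a plain matrix-vector product."""
    q = q / (np.linalg.norm(q) + 1e-8)
    return S @ q


rng = np.random.default_rng(0)
S = np.zeros((D, D))
k, v = rng.normal(size=D), rng.normal(size=D)

for _ in range(20):                    # repeated writes of the same pair shrink the error
    S = delta_update(S, k, v)

recall_error = float(np.linalg.norm(read(S, k) - v))
print(f"recall error after 20 writes: {recall_error:.6f}")
```

The state never grows: every write overwrites part of the same 64 numbers, which is the contrast with a KV cache that adds an entry per token.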

The argument: "remember more" should mean "design a state machine," not "accumulate more tokens." The reported numbers are a 10% average lift over the frozen backbone and 15% over the strongest non-δ-mem memory baseline. On memory-heavy benchmarks the lift reaches 31% on MemoryAgentBench and 20% on LoCoMo, without obvious damage to general capability.

The frozen backbone is the practical hook. No retraining, no model swap, low integration cost: it fits cleanly on top of an already-deployed model. Whether an 8×8 state can carry the long-horizon memory of a real agent is the open question, and answering it will take evaluation on more scenarios.

Key takeaways:

- Stretching the context window isn't the only path. Compact state-machine memory is a parallel track worth tracking.

- Frozen backbone keeps the integration cheap. No retrain, no replacement.

- Gains concentrate on memory-heavy tasks with general capability preserved. Whether the state size scales to real agent workloads is still open.

Source: δ-mem: Efficient Online Memory for Large Language Models

02 An Autoregressive Video Model That Treats Shot Cuts as First-Class

Autoregressive video generation has been stuck in the "push a single shot to its limit" frame. Long rollouts hit known problems: motion stagnation and semantic drift, because the model approximates a multi-shot sequence as one stretched single shot. CausalCine flips the framing. Train the causal base on native multi-shot sequences so it learns shot transitions explicitly. Then pair it with Content-Aware Memory Routing — CAMR retrieves historical KV by attention relevance, not temporal proximity, holding cross-shot consistency on a bounded active memory.

Distill the result into a few-step generator and you get real-time interaction. Mid-prompt edits don't force regeneration of finished shots. The paper claims quality close to bidirectional models while keeping causal generation's streaming behavior.
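The difference between routing history by relevance and by recency can be sketched in a few lines. This is a toy under stated assumptions: each cached chunk (for example, one shot) is summarized by a single key vector, relevance is a scaled dot product with the current query, and the function names and `budget` parameter are hypothetical. The paper's actual CAMR scoring is not specified in the abstract.

```python
import numpy as np


def route_by_relevance(query, chunk_keys, budget):
    """Pick the `budget` history chunks with the highest query-key score.

    chunk_keys: (num_chunks, dim) array with one summary key per cached chunk
    (a single summary key per chunk is an assumption of this sketch).
    """
    scores = chunk_keys @ query / np.sqrt(query.shape[0])
    top = np.argsort(scores)[-budget:]        # indices of the highest scores
    return np.sort(top)                       # restore temporal order


def route_by_recency(num_chunks, budget):
    """Baseline: keep only the most recent `budget` chunks."""
    return np.arange(max(0, num_chunks - budget), num_chunks)


rng = np.random.default_rng(0)
dim, num_chunks, budget = 16, 12, 3
chunk_keys = rng.normal(size=(num_chunks, dim)) * 0.1
early_shot = 2                                # an early shot the new shot refers back to
query = rng.normal(size=dim)
chunk_keys[early_shot] = query                # make that shot highly relevant

relevant = route_by_relevance(query, chunk_keys, budget).tolist()
recent = route_by_recency(num_chunks, budget).tolist()
print("relevance routing keeps:", relevant)
print("recency routing keeps:  ", recent)
```

Both policies hold the same bounded number of chunks. Only the relevance policy can reach back to an early shot that the current one depends on.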

Key takeaways:

- Teams building video narrative products should focus on shot-boundary modeling before chasing longer clips.

- Relevance-based KV retrieval (CAMR-style) transfers usefully to other long-context video applications.

- "Close to bidirectional" needs the full paper and demo. Specific metrics and failure modes aren't in the abstract.

Source: CausalCine: Real-Time Autoregressive Generation for Multi-Shot Video Narratives

03 Activation Steering Gets Amplified Into Drift by KV Cache

Anyone running personality or safety steering has seen this. A steering vector that works cleanly in a single turn falls apart after ten turns of conversation. The standard response is "tune the strength." This paper offers a more specific failure mechanism: a steered token's perturbed state lands in the KV cache, and every subsequent attention step re-consumes that perturbed representation. One local intervention gets cached and compounds into drift.

Once you see the mechanism, the fix follows. Move the intervention from the residual stream down to the attention-delta layer and gate it per-token, so steering travels only the path the model already uses to "listen to the prompt."

On multi-turn benchmarks coherence drift recovers from -18.6 to -1.9, and round-10 trait expression climbs from 78.0 to 93.1.
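A single-layer toy shows why the injection point matters for what ends up in the cache. Everything here is an assumption made for illustration: random weights, one attention head, a hypothetical steering vector `steer` with strength `alpha`, and a sigmoid per-token gate. It is not the paper's implementation, only a sketch of the two injection points described above.

```python
import numpy as np

rng = np.random.default_rng(0)
dim, n_tokens = 8, 5
W_q, W_k, W_v = (rng.normal(size=(dim, dim)) for _ in range(3))
hidden = rng.normal(size=(n_tokens, dim))   # one turn's hidden states
steer = rng.normal(size=dim)                # hypothetical steering vector
alpha = 4.0                                 # hypothetical steering strength


def attend(h):
    """Causal single-head attention; returns (output, cached keys, cached values)."""
    q, k, v = h @ W_q, h @ W_k, h @ W_v
    scores = q @ k.T / np.sqrt(dim)
    mask = np.tril(np.ones((len(h), len(h)), dtype=bool))
    scores = np.where(mask, scores, -np.inf)
    weights = np.exp(scores - scores.max(axis=-1, keepdims=True))
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v, k, v


_, k_clean, v_clean = attend(hidden)

# (a) Residual-stream steering: added before the K/V projections,
#     so the perturbed states are what the cache stores.
_, k_res, v_res = attend(hidden + alpha * steer)

# (b) Attention-output steering: K/V come from the unsteered states and the
#     vector is added to the attention output through a per-token gate.
out, k_att, v_att = attend(hidden)
gate = 1.0 / (1.0 + np.exp(-(hidden @ steer)))   # sigmoid gate, an assumption
steered_out = out + alpha * gate[:, None] * steer

drift_residual = float(np.linalg.norm(k_res - k_clean) + np.linalg.norm(v_res - v_clean))
drift_attention = float(np.linalg.norm(k_att - k_clean) + np.linalg.norm(v_att - v_clean))
print(f"cache drift, residual steering:  {drift_residual:.3f}")
print(f"cache drift, attention steering: {drift_attention:.3f}")
```

In a real multi-layer model the attention output still feeds later layers, so this toy only captures the first-order effect at the steered layer: what that layer writes into its own cache.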

Key takeaways:

- When multi-turn steering fails, check what's accumulating in KV cache before reaching for strength tuning.

- Residual-stream interventions couple naturally to the cache. Attention-delta is the safer injection point.

- Personality and safety steering teams can borrow this failure mode as an off-the-shelf diagnostic.

Source: Prompt-Activation Duality: Improving Activation Steering via Attention-Level Interventions

04 Another "Unified Multimodal" — Is This One Different?

"Understanding and generation should be the same process" — Chameleon, Janus, Show-o have all said this over the past two years. Each release promises to dissolve the split. Implementations often turn out to be repackaged pipeline assembly. SenseNova-U1's interesting move is naming the split as a structural limitation rather than an engineering artifact. That framing matters more than which benchmark it tops.

The abstract mentions a NEO-unify architecture, two model variants (an 8B dense model and a 30B-A3B MoE), and "preliminary evidence" in VLA and world-model directions. All plausible. None of it tells you whether SenseNova-U1 actually shares a representation space across understanding and generation, or where it differs from the prior work. 157 upvotes confirm the community is waiting. Hype isn't a verdict.

Key takeaways:

- "Unified multimodal" has been claimed too many times. Read the ablations, not the narrative.

- Whether understanding and generation share the representation space is the real test of "truly unified" versus "repackaged pipeline."

- Multimodal product teams should wait for the code and detailed evals before committing to this line.

Source: SenseNova-U1: Unifying Multimodal Understanding and Generation with NEO-unify Architecture

Also Worth Noting

Today's Observation

Read δ-mem, Mela, LongMemEval-V2, and NanoResearch together and they don't add up to "memory is hot again." They mark a more specific methodological shift. The default industry path — wider context windows and fatter KV caches — is being challenged by a state-machine path. The question moves from "how many tokens can we hold" to "what do we store, how do we update it, how do we distill it." δ-mem operates at the architecture layer, hanging a fixed-size state matrix off a frozen backbone. Mela works at the test-time dynamics layer, using consolidation to stabilize transient experience. LongMemEval-V2 shifts the evaluation target from user history to environment, interfaces, and failure modes. NanoResearch binds memory, skills, and policy into co-evolution at the agent layer. Four layers, one direction: the cache is not the answer; state design is the question.

Concrete next step. If you're building long-context systems or long-running agents, audit your current KV cache growth curve and actual hit pattern. Separate "state that genuinely needs long-term retention" from "intermediate results the cache piles up indiscriminately." Push the first into a compact state structure like δ-mem. Evict the second by relevance (CausalCine's CAMR pattern) instead of recency. That mix usually buys more stable long-horizon behavior than another window expansion.
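One way to start the audit is to log attention weights over cache entries and see how concentrated the reads are. The sketch below assumes you can export a `(steps, entries)` matrix of attention weights. The function name `audit_cache_hits`, the 90% coverage threshold, and the synthetic log are all hypothetical choices for illustration.

```python
import numpy as np


def audit_cache_hits(attn_log, coverage=0.9):
    """Split cache entries into a small 'hot' set and eviction candidates.

    attn_log: (steps, entries) attention weights read from the cache.
    Returns (hot, cold): hot entries together receive at least `coverage`
    of all attention mass; cold entries are the rest.
    """
    mass = attn_log.sum(axis=0)
    order = np.argsort(mass)[::-1]                     # most-attended first
    cumulative = np.cumsum(mass[order]) / mass.sum()
    n_hot = min(int(np.searchsorted(cumulative, coverage)) + 1, len(order))
    return order[:n_hot], order[n_hot:]


# Synthetic log: 100 cache entries, three of which are read heavily.
rng = np.random.default_rng(0)
steps, entries = 50, 100
logits = rng.normal(size=(steps, entries))
logits[:, [3, 40, 77]] += 8.0
attn_log = np.exp(logits)
attn_log /= attn_log.sum(axis=1, keepdims=True)

hot, cold = audit_cache_hits(attn_log)
print(f"{len(hot)} of {entries} entries receive 90% of attention mass")
```

If a real log looks like this synthetic one, with a handful of entries absorbing most reads, that is a hint the long-lived part of the cache could live in a compact state and the rest could be evicted by relevance.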