Today's Overview

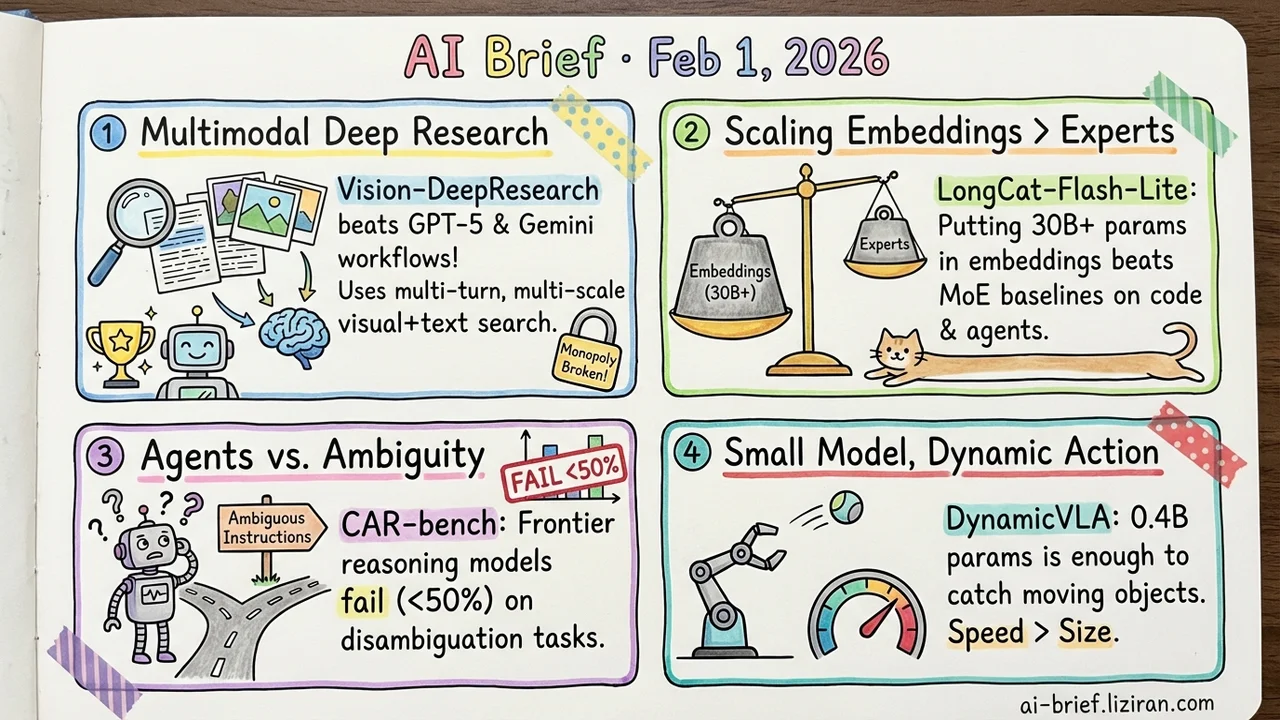

- Multimodal Deep Research Is No Longer a Closed-Source Monopoly — Vision-DeepResearch uses multi-turn, multi-entity, multi-scale visual+text search to beat workflows built on GPT-5 and Gemini-2.5-Pro.

- The MoE scaling playbook may have it backwards. Scaling embeddings beats scaling experts — LongCat-Flash-Lite puts 30B+ params in embeddings (only 3B activated) and outperforms equivalent MoE baselines on code and agent tasks.

- LLM agents handle clean instructions fine, but fall apart under ambiguity — CAR-bench shows frontier reasoning models hit below 50% consistent pass rate on disambiguation tasks.

- Dynamic manipulation doesn't need a big model. 0.4B parameters is enough — DynamicVLA overlaps reasoning and execution with temporally aligned action streaming to catch moving objects.

Featured

01 Multimodal Open-Source Deep Research Beats GPT-5

Current multimodal search frameworks assume one image plus a few text queries will get the job done. Real-world images are noisy, and a single retrieval round is rarely enough.

Vision-DeepResearch brings the "deep research" paradigm to multimodal. The model performs dozens of reasoning steps and hundreds of search engine interactions, progressively aggregating evidence from multiple image regions and text sources. Training isn't prompt engineering — it's cold-start supervision followed by RL, internalizing deep search capability into the model weights.
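To make the multi-turn, multi-scale idea concrete, here is a minimal sketch of a search loop that starts from crops of the image at several scales plus the text question, then keeps following up on whatever each search returns. Everything in it is an assumption for illustration: the names `multiscale_regions`, `deep_search`, and `Evidence` are hypothetical, the search tool is a stub passed in by the caller, and a simple queue stands in for the trained model's decision about what to search next. It is not the Vision-DeepResearch implementation.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Region = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels
# Hypothetical search tool: takes a query, returns (snippet, follow-up queries).
SearchFn = Callable[[str], Tuple[str, List[str]]]


@dataclass
class Evidence:
    query: str    # what was searched (a crop description or a text query)
    snippet: str  # what the search tool returned


def multiscale_regions(width: int, height: int, levels: int = 1) -> List[Region]:
    """Return the full image, then a 2x2, 4x4, ... grid of crops per level."""
    regions: List[Region] = [(0, 0, width, height)]
    for level in range(1, levels + 1):
        n = 2 ** level
        for row in range(n):
            for col in range(n):
                regions.append((col * width // n, row * height // n,
                                (col + 1) * width // n, (row + 1) * height // n))
    return regions


def deep_search(width: int, height: int, question: str,
                search: SearchFn, max_turns: int = 8) -> List[Evidence]:
    """Run up to max_turns searches, aggregating evidence across turns."""
    # Assumption: a FIFO queue replaces the model's learned search policy.
    pending = [f"crop{r}" for r in multiscale_regions(width, height)]
    pending.append(question)
    seen = set()
    evidence: List[Evidence] = []
    while pending and len(evidence) < max_turns:
        query = pending.pop(0)
        if query in seen:
            continue  # skip repeated queries instead of spending a turn
        seen.add(query)
        snippet, follow_ups = search(query)
        evidence.append(Evidence(query, snippet))
        pending.extend(follow_ups)  # later turns build on earlier results
    return evidence


def toy_search(query: str) -> Tuple[str, List[str]]:
    """Stub search engine used only to exercise the loop."""
    follow_ups = ["who built it"] if query == "what is this landmark" else []
    return f"result for {query}", follow_ups


if __name__ == "__main__":
    for item in deep_search(100, 100, "what is this landmark", toy_search):
        print(item.query, "->", item.snippet)
```

In the paper's setting, the interesting part is exactly what this sketch stubs out: the choice of the next query is learned through cold-start supervision and RL rather than hard-coded.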

The result: it outperforms workflows built on GPT-5, Gemini-2.5-Pro, and Claude-4-Sonnet. Code is coming soon.

Key takeaways:

- Multimodal deep research needs multi-turn, multi-entity, multi-scale search strategies
- RL training can internalize search capability into the model rather than relying on external workflows
- An open-source solution beating closed-source workflows shows architecture design matters more than foundation model scale

Source: Vision-DeepResearch: Incentivizing DeepResearch Capability in Multimodal Large Language Models

02 Architecture MoE Should Scale Embeddings, Not Experts

Mixture-of-Experts is the standard route for sparse scaling, but diminishing returns and system bottlenecks keep mounting. This paper explores an orthogonal direction: spend the parameter budget on the embedding layer instead of the expert layer.

LongCat-Flash-Lite is a 68.5B-parameter model with only ~3B activated; more than 30B of the total parameters sit in embeddings. That sounds counterintuitive, but at equivalent activated parameters it surpasses MoE baselines and performs unexpectedly well on agent and coding tasks.
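The reason a huge embedding table adds so little to the activated count is that a lookup reads one row per token, while the rest of the table stays untouched. The sketch below does that bookkeeping for an embedding table and an MoE layer. The `Component` type, the helper names, and all sizes are hypothetical and chosen only for easy arithmetic; they are not LongCat-Flash-Lite's actual configuration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Component:
    name: str
    total: int      # parameters stored in the model
    activated: int  # parameters actually read to process one token


def embedding_table(rows: int, dim: int) -> Component:
    # A lookup selects a single row, so one token touches only `dim` weights.
    return Component("embedding", rows * dim, dim)


def moe_layer(num_experts: int, top_k: int, params_per_expert: int) -> Component:
    # A router sends each token to `top_k` of the `num_experts` experts.
    return Component("moe", num_experts * params_per_expert,
                     top_k * params_per_expert)


def activation_ratio(components: List[Component]) -> float:
    """Fraction of stored parameters that one token activates."""
    total = sum(c.total for c in components)
    activated = sum(c.activated for c in components)
    return activated / total


if __name__ == "__main__":
    # Illustrative sizes only (assumptions, not taken from the paper).
    emb = embedding_table(rows=1_000_000, dim=1024)
    moe = moe_layer(num_experts=8, top_k=2, params_per_expert=10_000_000)
    for c in (emb, moe):
        print(f"{c.name}: total={c.total:,} activated={c.activated:,}")
    print(f"combined activation ratio: {activation_ratio([emb, moe]):.4f}")
```

Under these toy numbers the embedding table holds over a billion parameters yet contributes about a thousand to the per-token activated count, which is the kind of "big model, small activation" trade the paper explores.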

Combined with speculative decoding, this sparsity translates directly into inference speedups.

Key takeaways:

- Embedding scaling is a viable alternative to expert scaling for sparse LLMs
- 30B+ parameters in embeddings aren't wasted: they outperform on specific tasks
- Teams sensitive to inference cost should watch this "big model, small activation" design closely

Source: Scaling Embeddings Outperforms Scaling Experts in Language Models

03 Evaluation Frontier Models Can't Handle Ambiguous Instructions

Existing agent benchmarks are too clean — instructions are clear, tools are complete, information is sufficient. Real users don't say "navigate to 123 Main Street, Building A, Suite 200." They say "go to that restaurant from last time."

CAR-bench targets this reality with 58 interconnected tools and two challenging task types: disambiguation (incomplete user instructions requiring follow-up questions or internal lookups) and hallucination detection (recognizing when tools or information are missing).

The results are harsh: even frontier reasoning models score below a 50% consistent pass rate on disambiguation. On ambiguous requests they tend to act without asking, and when tools are unavailable they tend to fabricate information instead of reporting the gap.
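The difference between "sometimes correct" and "reliably correct" is easy to see in code. This digest does not spell out CAR-bench's exact metric, so the sketch below assumes one common reading: a task counts toward the consistent pass rate only if every repeated trial succeeds. The function names and the trial data are hypothetical.

```python
from typing import Dict, List


def pass_at_least_once(trials: Dict[str, List[bool]]) -> float:
    """Fraction of tasks solved in at least one trial ("sometimes correct")."""
    return sum(any(runs) for runs in trials.values()) / len(trials)


def pass_consistently(trials: Dict[str, List[bool]]) -> float:
    """Fraction of tasks solved in every trial ("reliably correct").

    Assumption: this all-trials-pass definition is a stand-in for
    CAR-bench's consistent pass rate, not a confirmed formula.
    """
    return sum(all(runs) for runs in trials.values()) / len(trials)


if __name__ == "__main__":
    # Made-up outcomes: three repeated trials for each of four tasks.
    results = {
        "task_1": [True, True, True],
        "task_2": [True, False, True],
        "task_3": [False, False, False],
        "task_4": [True, True, True],
    }
    print("at least once:", pass_at_least_once(results))
    print("consistently: ", pass_consistently(results))
```

A single flaky trial, as in `task_2`, leaves the lenient score unchanged but removes the task from the consistent score, which is why the two numbers can diverge sharply for agents.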

Key takeaways:

- The gap between "sometimes correct" and "reliably correct" is the core challenge for agent deployment
- Models prefer fabrication over refusal when tools are missing
- Agent evaluation needs to move from ideal scenarios to real-world ambiguity and incompleteness

Source: CAR-bench: Evaluating the Consistency and Limit-Awareness of LLM Agents under Real-World Uncertainty

04 Robotics A 0.4B Model Catches Flying Objects

VLA models excel at static grasping but struggle with moving targets — perception lag, no temporal anticipation, discontinuous control. DynamicVLA attacks this from three angles.

First, the model stays compact at 0.4B parameters and uses a convolutional vision encoder for fast multimodal inference. Second, Continuous Inference overlaps reasoning and execution to cut perception-action latency. Third, Latent-aware Action Streaming temporally aligns perception with action execution. The accompanying Dynamic Object Manipulation (DOM) benchmark includes 200K synthetic episodes and 2K real-world episodes collected without teleoperation.
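A toy timing model helps show why overlapping inference with execution matters for moving targets. The sketch assumes discrete control steps, a fixed inference latency, and a model that predicts a chunk of future actions where index `i` is meant for time `obs_time + i`. In the alternating schedule the robot idles during inference and then runs actions that are already `latency` steps late. In the streamed schedule, inference runs back to back and the executor skips the stale head of each chunk so the action it runs was planned for the current step. The function names and this alignment rule are one plausible reading of the description above, not DynamicVLA's actual code.

```python
from typing import List, Optional, Tuple

# (obs_time, action_index): which observation produced the chunk,
# and which position inside that chunk is executed at this step.
Slot = Optional[Tuple[int, int]]


def alternating_schedule(total_steps: int, latency: int, horizon: int) -> List[Slot]:
    """Observe, wait `latency` idle steps, run the whole chunk, repeat."""
    cycle = latency + horizon
    schedule: List[Slot] = []
    for step in range(total_steps):
        phase = step % cycle
        obs_time = step - phase
        if phase < latency:
            schedule.append(None)  # robot idles while the model thinks
        else:
            # Index i was planned for obs_time + i but runs `latency` late.
            schedule.append((obs_time, phase - latency))
    return schedule


def streamed_schedule(total_steps: int, latency: int, horizon: int) -> List[Slot]:
    """Run inference back to back and execute time-aligned actions."""
    if horizon < 2 * latency:
        raise ValueError("horizon must cover two inference periods")
    schedule: List[Slot] = []
    for step in range(total_steps):
        if step < latency:
            schedule.append(None)  # only the very first chunk causes idling
            continue
        # Inference starts at steps 0, latency, 2*latency, ...; pick the
        # newest chunk that has already finished by `step`.
        obs_time = (step // latency - 1) * latency
        # Skip stale actions: execute the one planned for exactly `step`.
        schedule.append((obs_time, step - obs_time))
    return schedule


def idle_steps(schedule: List[Slot]) -> int:
    return sum(slot is None for slot in schedule)


if __name__ == "__main__":
    alt = alternating_schedule(total_steps=12, latency=2, horizon=4)
    stream = streamed_schedule(total_steps=12, latency=2, horizon=4)
    print("alternating idle steps:", idle_steps(alt))
    print("streamed idle steps:   ", idle_steps(stream))
```

The design choice to note is the `horizon >= 2 * latency` requirement: each chunk must be long enough to cover the time until the next chunk arrives, otherwise the executor would run out of aligned actions.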

The 0.4B parameter count says it all: for embodied intelligence, speed matters more than model size.

Key takeaways:

- Dynamic manipulation demands simultaneous reasoning and execution, not alternating turns
- 0.4B parameters prove that speed trumps scale in embodied AI
- A teleoperation-free data pipeline is critical for scaling training

Source: DynamicVLA: A Vision-Language-Action Model for Dynamic Object Manipulation

Also Worth Noting

Today's Observation

At least three papers today challenge the "bigger is better" instinct. LongCat-Flash-Lite puts parameters into embeddings instead of experts. DynamicVLA achieves dynamic manipulation with a 0.4B model. MMFineReason matches full-dataset results with just 7% of the data. The common thread: how you allocate parameters and data may matter more than absolute scale. If you're training models or tuning data mixes, it's worth asking whether your resource allocation has fallen into the "spread evenly and hope" default.