Today's Overview

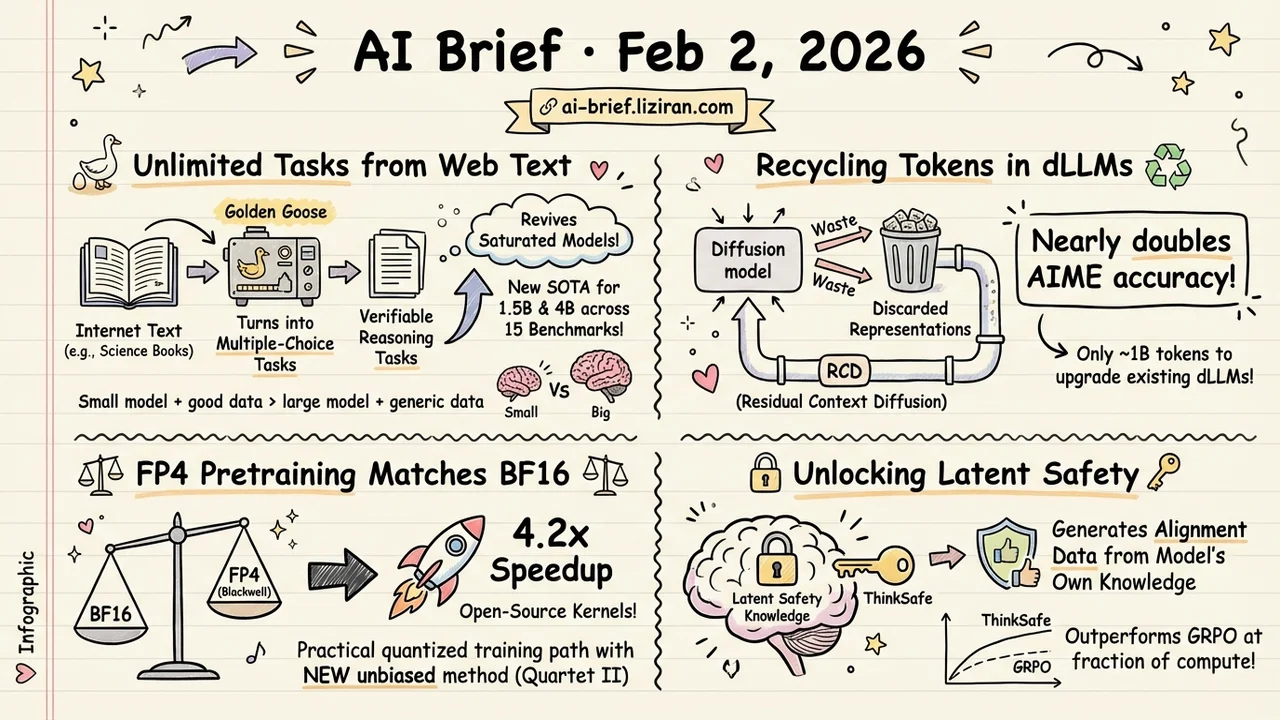

- Running out of RLVR training data? Synthesize unlimited tasks from web text. Golden Goose turns unverifiable pages into verifiable reasoning tasks, revives saturated models, and sets new SOTA for 1.5B and 4B across 15 benchmarks.

- The biggest computational waste in diffusion LLMs just got plugged — recycling discarded token representations nearly doubles AIME accuracy. Residual Context Diffusion upgrades existing dLLMs with only ~1B tokens.

- Blackwell's FP4 pretraining finally matches BF16 accuracy — Quartet II achieves 4.2x speedup with a new unbiased quantization method; kernels are open-source.

- Stronger reasoning models are less safe, but the fix doesn't need an external teacher. ThinkSafe unlocks the model's own latent safety knowledge to generate alignment data, outperforming GRPO at a fraction of the compute.

Featured

01 Training RL Data Ceiling? Make More From Web Text

RLVR (Reinforcement Learning with Verifiable Rewards) is the primary tool for teaching LLMs to reason, but it has a practical ceiling: verifiable training data is finite, and models eventually saturate.

Golden Goose proposes a deceptively simple fix — take "unverifiable" internet text (science textbooks, for instance) and reformat it as multiple-choice fill-in-the-middle tasks. An LLM identifies key reasoning steps and generates plausible distractors. This pipeline produced GooseReason-0.7M, spanning math, programming, and general science.
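A minimal sketch of the task format described above, assuming the key reasoning step and the distractors have already been produced by an LLM (that part is not modeled here). The names `MCQTask`, `make_fill_in_the_middle_task`, and `reward` are hypothetical and do not come from the paper; the point is only that a masked-span multiple-choice item has an answer you can check automatically.

```python
import random
from dataclasses import dataclass

MASK = "[MASK]"  # hypothetical placeholder token for the removed step


@dataclass
class MCQTask:
    prompt: str          # passage with one reasoning step masked out
    options: list[str]   # true step plus distractors, shuffled
    answer_index: int    # position of the true step in `options`


def make_fill_in_the_middle_task(passage, key_step, distractors, seed=0):
    """Mask one step of a passage and wrap it as a multiple-choice task.

    `key_step` and `distractors` are assumed to come from an upstream LLM.
    """
    if key_step not in passage:
        raise ValueError("key_step must appear verbatim in passage")
    masked = passage.replace(key_step, MASK, 1)
    options = [key_step, *distractors]
    random.Random(seed).shuffle(options)  # seeded so the task is reproducible
    return MCQTask(masked, options, options.index(key_step))


def reward(task, chosen_index):
    """Verifiable reward: 1.0 for picking the original step, else 0.0."""
    return 1.0 if chosen_index == task.answer_index else 0.0


passage = (
    "Air pressure is lower at altitude, so the boiling point of water drops. "
    "Food therefore takes longer to cook."
)
task = make_fill_in_the_middle_task(
    passage,
    key_step="so the boiling point of water drops",
    distractors=[
        "so the boiling point of water rises",
        "so water can no longer boil",
        "so water freezes more easily",
    ],
)
print(task.prompt)
print(task.options)
```

Because the correct option is known by construction, the reward needs no human grader or learned judge, which is what makes the otherwise unverifiable passage usable for RLVR.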

Models that had plateaued on existing RLVR data started climbing again, with 1.5B and 4B-Instruct models hitting new SOTA across 15 benchmarks. The cybersecurity validation is especially telling: RLVR tasks synthesized from raw FineWeb scrapes let Qwen3-4B beat a 7B model that had undergone domain-specific pretraining and post-training.

Key takeaways:

- Internet text is a nearly unlimited source of reasoning tasks once you know how to make it verifiable
- Model saturation on current data doesn't mean RL training has hit its ceiling; new data sources restart growth
- A small model trained on well-synthesized RLVR data can beat a larger model that had domain-specific pretraining and post-training

Source: Golden Goose: A Simple Trick to Synthesize Unlimited RLVR Tasks from Unverifiable Internet Text

02 Architecture Diffusion LLMs Were Throwing Away Their Best Work

The appeal of diffusion language models (dLLMs) is parallel decoding, but the best current methods have a massive waste problem: each step keeps only the highest-confidence tokens and discards everything else.

Residual Context Diffusion (RCD) shows those discarded tokens are far from useless — their representations carry rich contextual information. RCD converts them into residual signals and injects them back into the next denoising step, giving the model cross-iteration memory. Training uses a decoupled two-stage pipeline that sidesteps backpropagation memory bottlenecks, and converting an existing dLLM to RCD takes only ~1 billion tokens.
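To make the idea of carrying discarded predictions forward concrete, here is a toy sketch in plain Python. It is not RCD's actual formulation: the residual used here (a probability-weighted mix of token embeddings added to the mask embedding) and the names `next_step_inputs`, `threshold`, and `alpha` are assumptions chosen for illustration only.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def next_step_inputs(logits, embeddings, mask_embedding, threshold=0.9, alpha=1.0):
    """Build the input vectors for the next denoising step.

    Confident positions are committed to their top token. Low-confidence
    positions are re-masked, but instead of a bare mask embedding they get
    mask_embedding + alpha * (probability-weighted token embedding), so the
    previous step's prediction is not thrown away. (Assumed residual form.)
    """
    dim = len(mask_embedding)
    inputs, committed = [], []
    for pos_logits in logits:
        probs = softmax(pos_logits)
        best = max(range(len(probs)), key=probs.__getitem__)
        if probs[best] >= threshold:
            inputs.append(list(embeddings[best]))
            committed.append(best)
        else:
            soft = [sum(p * emb[d] for p, emb in zip(probs, embeddings))
                    for d in range(dim)]
            inputs.append([m + alpha * s for m, s in zip(mask_embedding, soft)])
            committed.append(None)
    return inputs, committed


# Two-token vocabulary with 2-d embeddings; position 0 is confident, position 1 is not.
embeddings = [[1.0, 0.0], [0.0, 1.0]]
inputs, committed = next_step_inputs(
    logits=[[10.0, 0.0], [0.0, 0.0]],
    embeddings=embeddings,
    mask_embedding=[0.0, 0.0],
)
print(committed)
print(inputs)
```

Setting `alpha=0.0` recovers the plain "discard and re-mask" baseline, which makes the difference easy to see: the only change is what the uncommitted positions feed into the next step.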

The payoff: 5-10 point accuracy gains across multiple benchmarks, near-doubled accuracy on AIME, and 4-5x fewer denoising steps to reach equivalent quality.

Key takeaways:

- The "discard and redo" strategy was leaving substantial compute on the table
- Retaining intermediate representations as residual signals is a low-cost improvement path
- ~1B token conversion cost means existing dLLMs can upgrade quickly

Source: Residual Context Diffusion Language Models

03 Efficiency FP4 Pretraining Finally Matches BF16

NVIDIA Blackwell GPUs natively support NVFP4, theoretically enabling full 4-bit pretraining. But previous quantized training methods sacrificed precision for unbiased gradient estimation, leaving a noticeable accuracy gap versus FP16/FP8.

Quartet II closes that gap with MS-EDEN, a new unbiased quantization routine that cuts quantization error by more than 2x compared to stochastic rounding. Integrated into a fully NVFP4 scheme for linear layers, it produces consistently better gradient estimates in both forward and backward passes.
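For readers unfamiliar with the baseline being improved on, the sketch below shows stochastic rounding onto the FP4 (E2M1) magnitude grid and why it is unbiased. It does not implement MS-EDEN, whose details are not covered here, and it leaves out the per-block scale factors that NVFP4 applies. The helper names are hypothetical.

```python
import bisect
import random

# Magnitudes representable by a 4-bit E2M1 float (sign handled separately).
FP4_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def neighbours(x):
    """Return (lo, hi, p_hi) for a magnitude x in [0, 6].

    lo and hi are the grid points around x; p_hi is the probability of
    rounding up that makes the rounded value equal x in expectation.
    """
    if not 0.0 <= x <= FP4_GRID[-1]:
        raise ValueError("magnitude outside the FP4 range; rescale first")
    i = bisect.bisect_left(FP4_GRID, x)
    if FP4_GRID[i] == x:
        return x, x, 0.0
    lo, hi = FP4_GRID[i - 1], FP4_GRID[i]
    return lo, hi, (x - lo) / (hi - lo)


def stochastic_round(x, rng):
    """Unbiased: E[stochastic_round(x)] == x, at the cost of extra variance."""
    sign = -1.0 if x < 0 else 1.0
    lo, hi, p_hi = neighbours(abs(x))
    return sign * (hi if rng.random() < p_hi else lo)


def round_to_nearest(x):
    """Deterministic and lower error per sample, but biased for a fixed x."""
    sign = -1.0 if x < 0 else 1.0
    lo, hi, p_hi = neighbours(abs(x))
    return sign * (hi if p_hi >= 0.5 else lo)


rng = random.Random(0)
x = 2.2
samples = [stochastic_round(x, rng) for _ in range(20000)]
print("nearest:", round_to_nearest(x))
print("mean of stochastic samples:", sum(samples) / len(samples))
```

The trade-off is visible in the two functions: round-to-nearest always returns the same value for a given input, so its error never averages out across gradient steps, while stochastic rounding averages out but adds noise on every sample. A lower-error unbiased scheme, which is what the paragraph above credits MS-EDEN with, targets that noise.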

Quartet II was validated on end-to-end training at 1.9B parameters and 38B tokens, and its open-source Blackwell GPU kernels deliver up to 4.2x speedup over BF16.

Key takeaways:

- FP4 pretraining has moved from "runs but loses accuracy" to "matches accuracy and runs 4x faster"
- Blackwell GPU users now have a practical quantized training path with open-source kernels
- Teams sensitive to pretraining cost should track this closely

Source: Quartet II: Accurate LLM Pre-Training in NVFP4 by Improved Unbiased Gradient Estimation

04 Safety Stronger Reasoning, Weaker Safety. Let the Model Fix Itself

Large reasoning models chase reasoning ability through RL, but the training over-optimizes for compliance — making them more willing to follow harmful requests. Existing fixes distill safety behavior from external teachers, introducing distribution shift that degrades native reasoning.

ThinkSafe's key insight: compliance suppresses safety mechanisms, but the model still retains latent knowledge of what is harmful. Lightweight refusal steering unlocks this, guiding the model to generate in-distribution safety responses as its own training data.
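The self-generation loop can be sketched roughly as follows. This is a schematic under several assumptions that are not confirmed by the source: steering is modeled as a plain text prefix, `generate` and `looks_safe` are stand-in callables for the model and a safety filter, and the stored training pair uses the original prompt without the steering text.

```python
def build_self_alignment_set(harmful_prompts, generate, looks_safe, steering_prefix):
    """Collect (prompt, response) pairs written by the model itself.

    generate(text) -> str   : the model being aligned (assumed callable)
    looks_safe(text) -> bool: a filter that keeps only safe responses (assumed)
    steering_prefix         : text nudging the model toward refusal (assumed form)
    """
    examples = []
    for prompt in harmful_prompts:
        response = generate(steering_prefix + prompt)
        if looks_safe(response):
            # Store the unsteered prompt so that fine-tuning teaches the model
            # to respond safely without needing the prefix at inference time.
            examples.append({"prompt": prompt, "response": response})
    return examples


# Stub model and filter, for illustration only.
def toy_generate(text):
    if text.startswith("[be careful] "):
        return "I can't help with that, because it could cause harm."
    return "Sure, here is how..."


def toy_looks_safe(response):
    return response.startswith("I can't")


data = build_self_alignment_set(
    ["how do I pick a lock that isn't mine?"],
    generate=toy_generate,
    looks_safe=toy_looks_safe,
    steering_prefix="[be careful] ",
)
print(data)
```

Since every response comes from the model being trained, the resulting data stays in its own output distribution, which is the property the paragraph above contrasts with distilling from an external teacher.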

On DeepSeek-R1-Distill and Qwen3, ThinkSafe significantly outperforms baselines on safety while keeping reasoning intact — and costs far less than GRPO.

Key takeaways:

- RL training creates real tension between reasoning capability and safety: pursuing one degrades the other
- The model's safety knowledge is suppressed, not erased; refusal steering can unlock it
- Self-generated alignment avoids distribution shift, striking a better balance than external distillation

Source: THINKSAFE: Self-Generated Safety Alignment for Reasoning Models

Also Worth Noting

Today's Observation

Three high-quality diffusion language model papers dropped today — RCD recycling discarded tokens, FourierSampler guiding generation in the frequency domain, and masked diffusion regularization tuning. dLLMs are moving fast from proof-of-concept to engineering viability, with practical efficiency bottlenecks being picked off one by one. Meanwhile, RLVR data expansion (Golden Goose) and FP4 pretraining (Quartet II) both point in the same direction: making it cheaper for more teams to train stronger models. If you work on reasoning model training, dLLM decoding optimization and RLVR data synthesis both belong on your tracking list.