Today's Overview

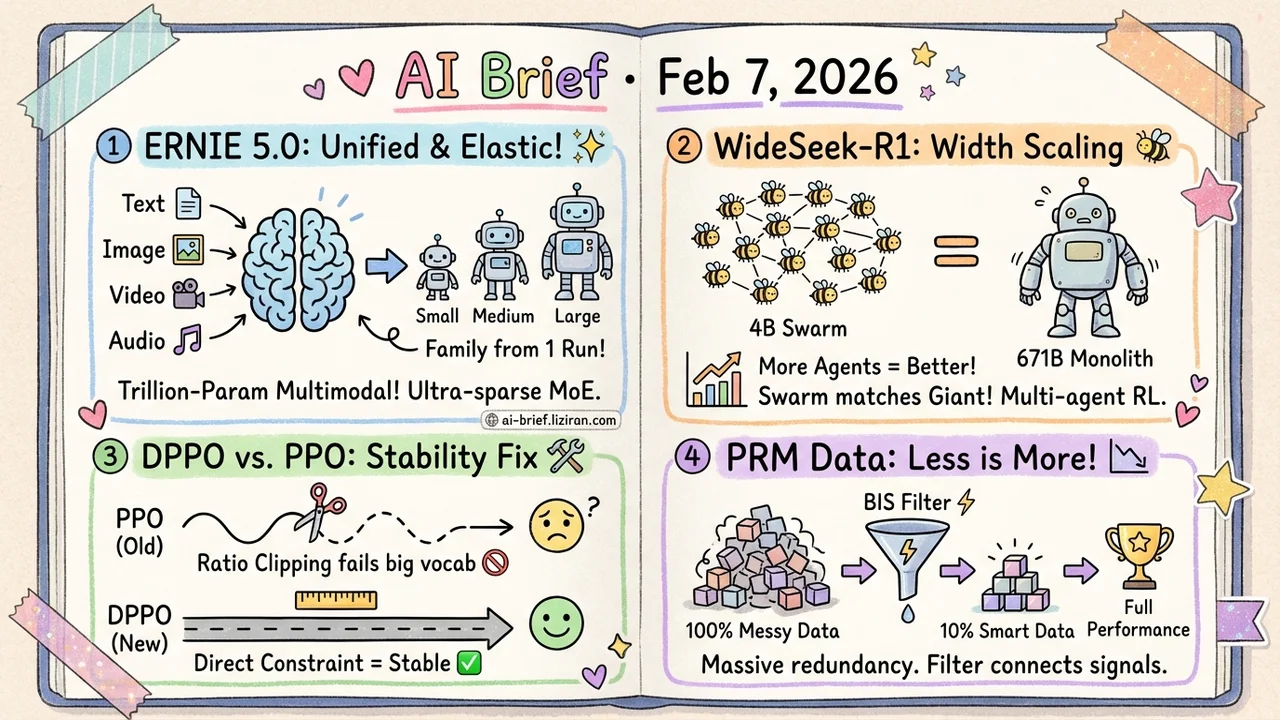

- Baidu releases ERNIE 5.0, a trillion-parameter unified multimodal model that trains text, image, video, and audio jointly from scratch, using ultra-sparse MoE and elastic training to yield a family of deployable sub-models from a single run.

- Multi-agent RL enables "width scaling" where a 4B model swarm matches a 671B monolith. WideSeek-R1 improves with more parallel sub-agents, matching DeepSeek-R1 on information retrieval.

- PPO's core mechanism doesn't fit LLMs: DPPO shows that ratio clipping systematically over-constrains low-probability tokens and under-constrains high-probability ones in large vocabularies, and that direct divergence constraints stabilize training.

- Multimodal PRM training data is massively redundant: BIS filtering matches full-data performance using only 10% of the samples.

Featured

01 · Multimodal · One Training Run, a Family of Models

"Unified multimodal" has been a buzzword for years. But a trillion-parameter model that trains text, image, video, and audio from scratch under a single autoregressive objective — with a public technical report — is a first.

ERNIE 5.0 makes two design choices worth watching. The first is ultra-sparse MoE with modality-agnostic expert routing: whether the input is text or video frames, the same routing logic applies, so the model learns for itself which experts handle what. The second is the elastic training paradigm: during a single pre-training run, the model simultaneously learns sub-models of varying depths, expert capacities, and sparsity levels. At deployment time, you pick the configuration that fits your hardware.
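To make the first choice, modality-agnostic routing, concrete, here is a minimal NumPy sketch of one top-k MoE router shared by every modality. It assumes all inputs (text tokens, image patches, video or audio frames) have already been embedded into the same `d_model`-dimensional space. The names `route` and `w_router`, the top-2 setting, and the toy sizes are illustrative assumptions, not ERNIE 5.0's actual router, and the sketch omits load balancing and everything else a production router needs.

```python
import numpy as np


def route(tokens: np.ndarray, w_router: np.ndarray, top_k: int = 2):
    """Assign each token to its top_k experts with softmax-normalized gates.

    tokens:   (n_tokens, d_model) embeddings; modality is never consulted.
    w_router: (d_model, n_experts) router weights shared by all modalities.
    """
    logits = tokens @ w_router                      # (n_tokens, n_experts)
    # Indices of the top_k highest-scoring experts per token, best first.
    top_idx = np.argsort(-logits, axis=-1)[:, :top_k]
    top_logits = np.take_along_axis(logits, top_idx, axis=-1)
    # Softmax over the selected experts only (numerically stabilized).
    gates = np.exp(top_logits - top_logits.max(axis=-1, keepdims=True))
    gates /= gates.sum(axis=-1, keepdims=True)
    return top_idx, gates


rng = np.random.default_rng(0)
d_model, n_experts = 16, 8
w_router = rng.normal(size=(d_model, n_experts))

# Hypothetical embedded inputs from two modalities, concatenated into one sequence.
text_tokens = rng.normal(size=(4, d_model))
video_tokens = rng.normal(size=(6, d_model))
sequence = np.concatenate([text_tokens, video_tokens])

experts, gates = route(sequence, w_router)
print(experts.shape, gates.shape)  # (10, 2) (10, 2)
```

Because the router only ever sees embeddings, any division of labor among experts by modality has to emerge from training rather than being hard-wired into separate pathways.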

The elastic paradigm is fundamentally an engineering answer to a practical question: once you've trained a trillion-parameter model, how do teams with different compute budgets actually use it? The report includes detailed expert routing visualizations and an analysis of elastic training.
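The elastic idea can be sketched under loudly stated assumptions: each training step samples one sub-model configuration (depth, number of experts kept, and top-k as the sparsity knob), and at deployment a team picks the strongest configuration that fits its budget. `SubModelConfig`, the choice grids, and the two cost proxies (`layers × experts` for memory, `layers × top_k` for per-token compute) are hypothetical placeholders, not values or formulas from the ERNIE 5.0 report.

```python
import random
from dataclasses import dataclass
from itertools import product
from typing import Optional

# Hypothetical choice grids (not ERNIE 5.0's actual settings).
DEPTHS = [12, 18, 24]   # transformer layers kept (depth)
EXPERTS = [16, 32, 64]  # experts kept per MoE layer (expert capacity)
TOP_KS = [1, 2]         # experts activated per token (sparsity level)


@dataclass(frozen=True)
class SubModelConfig:
    num_layers: int
    num_experts: int
    top_k: int

    def memory_proxy(self) -> int:
        # Crude stand-in for resident expert weights.
        return self.num_layers * self.num_experts

    def compute_proxy(self) -> int:
        # Crude stand-in for per-token expert compute.
        return self.num_layers * self.top_k


ALL_CONFIGS = [SubModelConfig(d, e, k) for d, e, k in product(DEPTHS, EXPERTS, TOP_KS)]


def sample_config(rng: random.Random) -> SubModelConfig:
    """Training side: pick the sub-model this step optimizes.

    In a real elastic run, sub-models share weights with the full model;
    this sketch only models the configuration choice.
    """
    return rng.choice(ALL_CONFIGS)


def pick_for_budget(memory_budget: int) -> Optional[SubModelConfig]:
    """Deployment side: most compute-heavy config that fits the memory budget."""
    fitting = [c for c in ALL_CONFIGS if c.memory_proxy() <= memory_budget]
    # Prefer more per-token compute, then more experts; None if nothing fits.
    return max(fitting, key=lambda c: (c.compute_proxy(), c.memory_proxy()), default=None)


rng = random.Random(0)
schedule = [sample_config(rng) for _ in range(4)]  # one sub-model per training step
print(pick_for_budget(24 * 64))  # SubModelConfig(num_layers=24, num_experts=64, top_k=2)
print(pick_for_budget(12 * 16))  # SubModelConfig(num_layers=12, num_experts=16, top_k=2)
```

The point of the design is that the selection step at the end requires no retraining: every configuration in the grid was already exercised during the single pre-training run.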

Key takeaways:
- Modality-agnostic routing avoids the complexity of separate per-modality pathways; it is the key design decision for unified multimodal models
- Elastic training turns "one model" into "a model family," lowering the deployment barrier for large models
- First public technical report of its kind at trillion-parameter scale

Source: ERNIE 5.0 Technical Report

02 · Agent · Model Too Small? Send More of Them

Deep learning's dominant narrative has been depth scaling — making a single model deeper and stronger. But when a task is inherently "wide" — say, gathering information across multiple dimensions — the bottleneck isn't individual capability. It's organizational efficiency.

WideSeek-R1 proposes a complementary axis: width scaling. A lead agent decomposes the task, multiple sub-agents execute information retrieval in parallel, and the entire system is jointly optimized through multi-agent reinforcement learning (MARL). The result: WideSeek-R1-4B reaches 40.0% item F1 on the WideSearch benchmark, matching a standalone DeepSeek-R1-671B despite a roughly 168x gap in parameter count.
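A minimal asyncio sketch of the lead-agent / parallel sub-agent pattern, plus an item-level F1 scorer. Everything here is hypothetical: `decompose` and `sub_agent_search` are stubs standing in for LLM calls and tool use, items are matched by exact string equality (WideSearch's actual matching rules may differ), and the MARL training that the paper identifies as the critical ingredient is not shown.

```python
import asyncio


def decompose(task: str, n_sub_agents: int) -> list[str]:
    """Hypothetical lead agent: split a wide task into independent sub-queries."""
    return [f"{task} :: shard {i}" for i in range(n_sub_agents)]


async def sub_agent_search(sub_query: str) -> set[str]:
    """Hypothetical sub-agent: a stub that returns one item per sub-query."""
    await asyncio.sleep(0)  # placeholder for search/browse tool calls
    return {sub_query.split("::")[-1].strip()}


async def wide_search(task: str, n_sub_agents: int) -> set[str]:
    sub_queries = decompose(task, n_sub_agents)
    # Sub-agents run concurrently; "width" is the number of parallel sub-agents.
    results = await asyncio.gather(*(sub_agent_search(q) for q in sub_queries))
    return set().union(*results)  # the lead agent merges partial results


def item_f1(predicted: set[str], gold: set[str]) -> float:
    """F1 over retrieved items, using exact-match item comparison."""
    tp = len(predicted & gold)
    if tp == 0:
        return 0.0
    precision, recall = tp / len(predicted), tp / len(gold)
    return 2 * precision * recall / (precision + recall)


gold = {f"shard {i}" for i in range(8)}
for width in (2, 4, 8):
    found = asyncio.run(wide_search("list companies", width))
    print(width, round(item_f1(found, gold), 3))
```

In this toy the F1 rises with width only because each stub sub-agent covers exactly one shard; it illustrates the coverage intuition behind width scaling, not the paper's measured result.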

Performance keeps improving as more parallel sub-agents are added, suggesting that width scaling may follow a scaling law of its own.

Key takeaways:
- Width scaling naturally complements depth scaling, especially for inherently parallelizable tasks like information retrieval
- 4B x N matching 671B x 1 validates "small model swarms" as a viable cost-reduction path
- MARL joint training is the critical ingredient: not just stitching agents together, but training them to collaborate

03 · Training · PPO's Trust Region Is Wrong for LLMs

PPO is the de facto standard for LLM reinforcement learning, but this paper identifies a structural flaw: ratio clipping is pathological in large-vocabulary settings. The probability ratio is a per-token sample estimate, not a true measure of policy divergence. For low-probability tokens, a tiny absolute change in probability produces a large ratio swing, so they get over-clipped and effectively stop learning. For high-probability tokens, even a large shift in probability mass barely moves the ratio, so they evade clipping entirely, risking catastrophic drift.
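A few lines of arithmetic make the misallocation concrete. The sketch uses the common PPO clip range ε = 0.2 and compares each token's probability ratio with the probability mass that actually moved for that token. The two token probabilities are illustrative numbers chosen for this example, not values from the paper.

```python
EPS = 0.2  # common PPO clip range: ratios outside [0.8, 1.2] are clipped


def inspect_token(p_old: float, p_new: float) -> tuple[float, bool, float]:
    """Return (probability ratio, whether it falls outside the clip range, mass moved)."""
    ratio = p_new / p_old
    outside_clip = not (1 - EPS <= ratio <= 1 + EPS)
    mass_moved = abs(p_new - p_old)  # this token's share of the probability shift
    return ratio, outside_clip, mass_moved


# Illustrative numbers: a rare token doubles from 0.1% to 0.2%,
# while a dominant token rises from 80% to 95%.
rare = inspect_token(0.001, 0.002)
dominant = inspect_token(0.80, 0.95)

for name, (ratio, outside, moved) in [("rare", rare), ("dominant", dominant)]:
    print(f"{name:<8} ratio={ratio:.2f} outside_clip={outside} mass_moved={moved:.3f}")
```

The rare token is clipped after moving a tenth of a percentage point of probability mass, while the dominant token shifts fifteen percentage points and stays inside the clip range. That asymmetry is what a divergence-based constraint is meant to correct.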

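DPPO takes the direct approach: drop the heuristic clipping and constrain an estimate of the actual policy divergence (Total Variation or KL). Computing that divergence exactly would require the old and new distributions over the entire vocabulary at every position, so DPPO uses Binary and Top-K approximations to keep memory overhead manageable. Experiments show improved stability and efficiency over existing methods.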
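A hedged NumPy sketch of what "binary" and "top-K" divergence approximations can look like. The construction is an assumption, not necessarily the paper's: the binary version collapses the vocabulary into {sampled token, everything else}, and the top-K version keeps the old policy's K most likely tokens plus one "rest" bucket, so only K + 1 probabilities per position need storing. Merging outcomes can never increase TV or KL, so both are lower bounds on the full-vocabulary values. The function names and the threshold `delta` are hypothetical.

```python
import numpy as np


def binary_tv(p_old_tok: float, p_new_tok: float) -> float:
    # Collapse the vocabulary to {sampled token, everything else};
    # TV between two 2-outcome distributions reduces to |p - q|.
    return abs(p_new_tok - p_old_tok)


def binary_kl(p_old_tok: float, p_new_tok: float, eps: float = 1e-12) -> float:
    # KL(old || new) over the same two buckets, clipped to avoid log(0).
    p = np.clip(np.array([p_old_tok, 1.0 - p_old_tok]), eps, 1.0)
    q = np.clip(np.array([p_new_tok, 1.0 - p_new_tok]), eps, 1.0)
    return float(np.sum(p * np.log(p / q)))


def topk_tv(p_old: np.ndarray, p_new: np.ndarray, k: int) -> float:
    # Keep the old policy's k most likely tokens plus one "rest" bucket,
    # so only k + 1 probabilities per position need to be stored.
    idx = np.argsort(-p_old)[:k]
    old_b = np.append(p_old[idx], 1.0 - p_old[idx].sum())
    new_b = np.append(p_new[idx], 1.0 - p_new[idx].sum())
    return 0.5 * float(np.abs(old_b - new_b).sum())


def trust_region_mask(divergence: np.ndarray, delta: float = 0.05) -> np.ndarray:
    # Hypothetical rule: only tokens still inside the region keep their gradient.
    return divergence <= delta


# A rare token doubling from 0.1% to 0.2% vs. a dominant token rising from 80% to 95%.
div = np.array([binary_tv(0.001, 0.002), binary_tv(0.80, 0.95)])
print(trust_region_mask(div))  # rare token stays in, dominant token is masked

p_old = np.array([0.80, 0.15, 0.04, 0.01])
p_new = np.array([0.95, 0.03, 0.015, 0.005])
full_tv = 0.5 * float(np.abs(p_old - p_new).sum())
print(topk_tv(p_old, p_new, k=2), full_tv)
```

Note how the divergence view reverses ratio clipping's verdict on the two tokens. A real implementation may also condition the mask on the advantage sign, as PPO's clipped objective effectively does.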

If you're running RLHF or GRPO pipelines, this is a PPO alternative worth investigating.

Key takeaways:
- Ratio clipping under large vocabularies systematically misallocates constraint strength, which explains common instabilities in LLM RL training
- DPPO's divergence constraint is theoretically cleaner and remains engineering-feasible through approximation
- A credible alternative to PPO for RLHF and GRPO pipelines

Source: Rethinking the Trust Region in LLM Reinforcement Learning

04 · Training · 90% of Your PRM Data Is Wasted

Training multimodal process reward models (PRMs) typically requires large-scale Monte Carlo (MC) annotation, which is expensive to produce. But this paper delivers good news: most of that data is redundant. Performance under random subsampling saturates quickly, meaning the useful information is concentrated in a small fraction of samples.
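For readers unfamiliar with MC annotation, here is a minimal sketch of the usual recipe: from each step prefix of a solution, sample several completions and record the fraction that reach a correct final answer as that step's MC score. `rollout_succeeds` is a hypothetical stand-in for running the policy model and checking its answer; the call counter shows where the expense comes from.

```python
from typing import Callable, Sequence


def mc_annotate(
    steps: Sequence[str],
    rollout_succeeds: Callable[[Sequence[str]], bool],
    n_rollouts: int = 8,
) -> tuple[list[float], int]:
    """Estimate a per-step MC score and count the rollouts it cost.

    rollout_succeeds(prefix) is a hypothetical stand-in for: sample one
    completion from the policy after `prefix`, then check the final answer.
    """
    scores: list[float] = []
    calls = 0
    for i in range(1, len(steps) + 1):
        prefix = steps[:i]
        wins = sum(rollout_succeeds(prefix) for _ in range(n_rollouts))
        calls += n_rollouts
        scores.append(wins / n_rollouts)
    return scores, calls


# Toy verifier: completions succeed only while the prefix has fewer than 3 steps,
# i.e. the error is introduced at step 3.
scores, calls = mc_annotate(["s1", "s2", "s3", "s4"], lambda prefix: len(prefix) < 3)
print(scores, calls)  # [1.0, 1.0, 0.0, 0.0] 32
```

With deterministic toy rollouts every score is exactly 0 or 1; a real policy yields fractional scores, and the model-call count grows as solutions × steps × rollouts.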

What makes a data point informative? Two factors: the mix ratio of positive and negative labels, and label reliability (average MC score of positive samples). Based on these signals, the authors propose BIS (Balanced-Information Score) filtering.
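The two signals can be combined into a toy score to show the shape of the idea. This is explicitly not the paper's BIS formula: the product form, the `4·p·(1−p)` balance term, treating each sample as a list of per-step MC scores, and the rule that a step counts as positive when its MC score is above zero are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    mc_scores: list[float]  # per-step MC scores in [0, 1]


def toy_balanced_info_score(sample: Sample) -> float:
    """Illustrative score (NOT the paper's BIS): label balance x positive-label reliability."""
    n = len(sample.mc_scores)
    if n == 0:
        return 0.0
    # Assumption: a step is labeled positive if any rollout from it succeeded.
    positives = [s for s in sample.mc_scores if s > 0.0]
    pos_frac = len(positives) / n
    balance = 4.0 * pos_frac * (1.0 - pos_frac)  # 1.0 at a 50/50 mix, 0.0 if all one label
    reliability = sum(positives) / len(positives) if positives else 0.0
    return balance * reliability


def select_top_fraction(samples: list[Sample], fraction: float = 0.1) -> list[Sample]:
    """Keep the highest-scoring fraction of the pool (10% by default)."""
    k = max(1, round(len(samples) * fraction))
    return sorted(samples, key=toy_balanced_info_score, reverse=True)[:k]


pool = [
    Sample([1.0, 0.5, 0.0, 0.0]),      # balanced, fairly reliable positives
    Sample([1.0, 1.0, 1.0, 1.0]),      # all positive: no contrast
    Sample([0.0, 0.0, 0.0]),           # all negative: no contrast
    Sample([0.125, 0.125, 0.0, 0.0]),  # balanced but unreliable positives
] + [Sample([1.0, 1.0, 1.0, 1.0, 0.0]) for _ in range(6)]  # mostly positive filler

print([toy_balanced_info_score(s) for s in pool[:4]])  # [0.75, 0.0, 0.0, 0.125]
kept = select_top_fraction(pool)  # 1 of 10 samples survives
```

Like BIS as the digest describes it, this toy needs no new annotation: it reads only MC scores that already exist.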

Validated on InternVL2.5-8B and Qwen2.5-VL-7B backbones, BIS matches or exceeds full-data performance using just 10% of the training set.

Key takeaways:
- Smart filtering beats brute-force data scaling for PRM training
- BIS requires no extra annotation: it works directly from existing MC signals
- For teams training visual reasoning PRMs, this means an order-of-magnitude reduction in training cost

Source: Training Data Efficiency in Multimodal Process Reward Models


Today's Observation

Two signals stand out today. First, the unified multimodal race enters the trillion-parameter era: ERNIE 5.0 is the first publicly reported trillion-parameter unified autoregressive multimodal model, while OmniSIFT tackles the inference cost side of the same problem — training and serving are advancing in parallel. Second, "small model swarms" are emerging alongside "big model solo": WideSeek-R1's width scaling, pi-Distill's privileged-information distillation, and heterogeneous small-model stacks all demonstrate that you don't always need the largest model. Teams working on multimodal deployment should look at OmniSIFT's token compression; teams building agent systems should study WideSeek-R1's MARL training framework.