Today's Overview

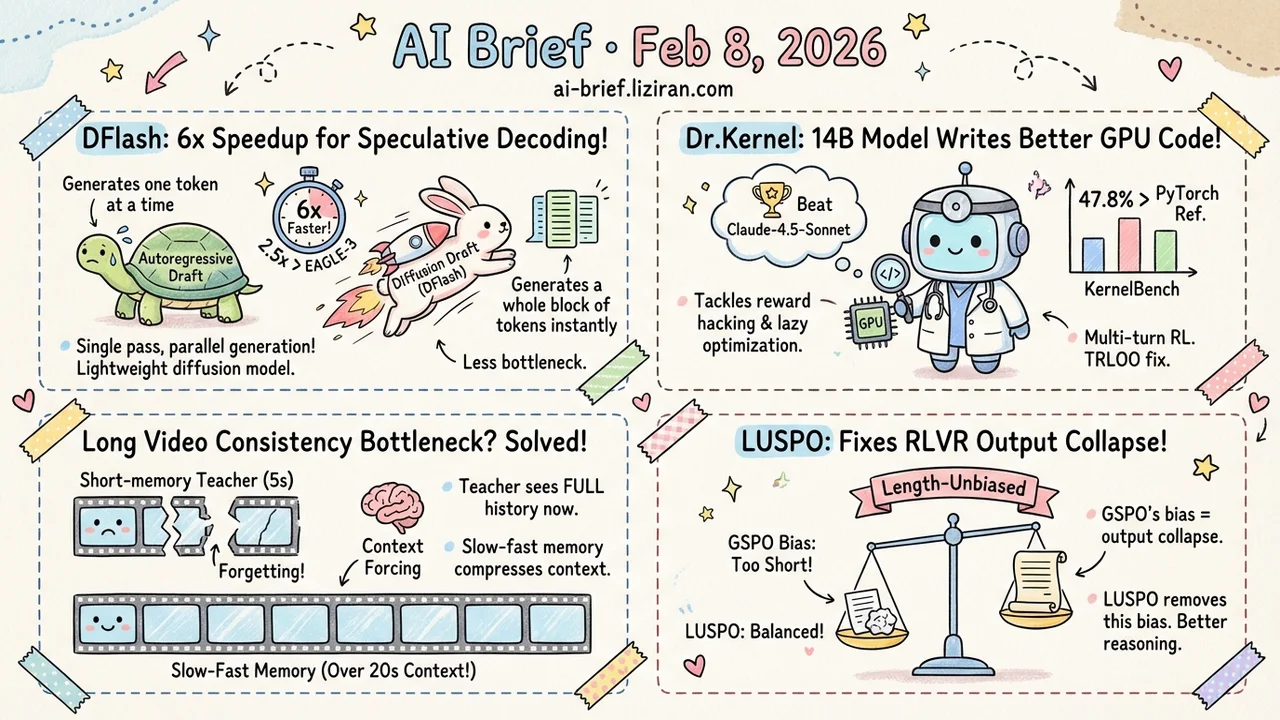

- Diffusion models break speculative decoding's bottleneck for a 6x speedup: DFlash uses a lightweight block diffusion model to generate all draft tokens in a single forward pass, running 2.5x faster than EAGLE-3.

- Multi-turn RL for Triton code generation: a 14B model rivals Claude-4.5-Sonnet at writing GPU kernels. Dr.Kernel tackles reward hacking and lazy optimization, and 47.8% of its kernels achieve at least a 1.2x speedup over the PyTorch reference.

- Long Video Consistency Bottleneck Identified: Context Forcing traces the problem to a structural mismatch where a short-memory teacher supervises a long-memory student; slow-fast memory pushes effective context past 20 seconds.

- Models getting terse during RLVR training? The cause may be algorithmic bias rather than model behavior: LUSPO fixes GSPO's length bias and prevents output collapse.

Featured

01 Efficiency The Draft Model Doesn't Need to Be Autoregressive

Speculative decoding's core idea is "small model drafts, big model verifies." But there's an awkward bottleneck: the draft model itself is autoregressive, still generating one token at a time.

DFlash takes the natural route: use a diffusion model for drafting. A lightweight block diffusion model generates all draft tokens in parallel in a single forward pass, and the target LLM then verifies them in parallel. The key trick: the draft model conditions directly on context features the target model has already computed, which improves draft quality and acceptance rates.
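The draft-then-verify loop can be sketched for greedy decoding as follows. This is a toy under stated assumptions, not DFlash's code. `draft_block` and `target_greedy` are hypothetical callables. The first stands in for the block diffusion drafter and returns all k draft tokens from one call. The second stands in for the target LLM and returns its predictions for all k + 1 positions from one forward pass. The target accepts the longest matching prefix and then contributes one token of its own, so the committed output is identical to plain greedy decoding with the target alone. Sampling-based decoding would need a rejection-sampling acceptance rule instead.

```python
from typing import Callable, List, Sequence

# Hypothetical interfaces (assumptions for illustration, not DFlash's API):
#   draft_block(context)          -> k draft tokens, produced in one call
#   target_greedy(context, draft) -> k + 1 tokens; entry j is the target's greedy
#                                    prediction after context + draft[:j]
DraftFn = Callable[[Sequence[int]], List[int]]
TargetFn = Callable[[Sequence[int], Sequence[int]], List[int]]


def speculative_step(context: List[int], draft_block: DraftFn,
                     target_greedy: TargetFn) -> List[int]:
    """Return the tokens committed in one draft-and-verify step."""
    draft = draft_block(context)            # parallel drafting: one call, k tokens
    preds = target_greedy(context, draft)   # parallel verification: one pass
    committed: List[int] = []
    for d, p in zip(draft, preds):
        if d != p:
            committed.append(p)             # first mismatch: take the target's token, stop
            return committed
        committed.append(d)                 # draft token agrees with the target
    committed.append(preds[len(draft)])     # every draft accepted: free bonus token
    return committed


def toy_next(seq: Sequence[int]) -> int:
    """Toy 'target model': the next token is the last token plus one, mod 10."""
    return (seq[-1] + 1) % 10


def toy_target_greedy(context: Sequence[int], draft: Sequence[int]) -> List[int]:
    # A real target scores all k + 1 positions in a single forward pass; the toy loops.
    return [toy_next(list(context) + list(draft[:j])) for j in range(len(draft) + 1)]


print(speculative_step([3], lambda ctx: [4, 5, 9, 7], toy_target_greedy))  # [4, 5, 6]
print(speculative_step([3], lambda ctx: [4, 5, 6, 7], toy_target_greedy))  # [4, 5, 6, 7, 8]
```

Each step commits between 1 and k + 1 tokens for one drafter call plus one target pass. The speedup therefore depends on how often long prefixes are accepted, which is why draft quality matters as much as drafting speed.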

The result is 6x lossless acceleration, 2.5x faster than the current state-of-the-art EAGLE-3.

Key takeaways:
- Replacing autoregressive drafting with parallel diffusion removes the fundamental sequential bottleneck in speculative decoding
- Single-pass draft generation dramatically improves GPU utilization
- 6x acceleration with zero quality loss has direct implications for inference serving costs

Source: DFlash: Block Diffusion for Flash Speculative Decoding

02 Code Intelligence 14B Model Beats Claude at GPU Kernels

Using LLMs to generate high-performance GPU kernels (Triton code) sounds great in theory. In practice, training runs into two problems. The first is reward hacking, where the model finds shortcuts that score high without producing any real speedup. The second is lazy optimization, where the model secures correctness but never pursues actual acceleration.

Dr.Kernel builds a full infrastructure stack to address both. KernelGYM provides a distributed GPU environment that supports multi-turn interaction and detects reward hacking. After finding a self-inclusion bias in GRPO's advantage estimate under multi-turn settings (each sample's own reward enters its group baseline), the authors propose TRLOO for unbiased advantage estimation. Profiling-based rewards push the model to chase real speedups rather than surface-level correctness.
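The self-inclusion effect is easy to see with a group-mean baseline. The sketch below compares two baselines. The first is GRPO-style: the group mean, which includes the sample itself. The usual standard-deviation normalization is omitted here. The second is leave-one-out: the mean of the other samples. Algebraically, r_i - mean(r) = ((G - 1)/G) * (r_i - mean over j != i of r_j), so the group-mean advantage is the leave-one-out advantage shrunk by (G - 1)/G. This is a generic illustration with hypothetical function names. TRLOO's exact turn-level formulation is not reproduced here.

```python
from typing import List


def group_mean_advantage(rewards: List[float]) -> List[float]:
    """GRPO-style baseline: the group mean, which includes the sample's own reward.
    (GRPO also divides by the group std; omitted here to isolate the baseline.)"""
    mu = sum(rewards) / len(rewards)
    return [r - mu for r in rewards]


def leave_one_out_advantage(rewards: List[float]) -> List[float]:
    """Leave-one-out baseline: the mean of the OTHER samples' rewards."""
    g = len(rewards)
    if g < 2:
        raise ValueError("leave-one-out needs at least two samples")
    total = sum(rewards)
    return [r - (total - r) / (g - 1) for r in rewards]


rewards = [1.0, 0.0, 0.0, 0.0]            # one successful rollout out of four
grpo = group_mean_advantage(rewards)      # [0.75, -0.25, -0.25, -0.25]
loo = leave_one_out_advantage(rewards)    # [1.0, -0.333..., -0.333..., -0.333...]
shrink = (len(rewards) - 1) / len(rewards)
assert all(abs(a - shrink * b) < 1e-12 for a, b in zip(grpo, loo))
```

When the number of samples being compared varies, for example across turns of a multi-turn rollout, the shrink factor varies with it. That is one plausible way the bias becomes uneven. A leave-one-out baseline removes the dependence entirely.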

The result: Dr.Kernel-14B matches Claude-4.5-Sonnet on KernelBench, and in multi-turn evaluation 47.8% of its kernels achieve a speedup of 1.2x or more, versus 28.6% for GPT-5.

Key takeaways:
- The hard part of kernel generation isn't model capability but the training environment and reward design
- GRPO has a self-inclusion bias in multi-turn RL; TRLOO is a fix worth tracking
- A 14B open-source model matching or beating closed-source frontier models shows the payoff of domain-specific RL

Source: Dr. Kernel: Reinforcement Learning Done Right for Triton Kernel Generations

03 Video Gen Long Videos Break Because the Teacher Has No Memory

Real-time long video generation is one of the hottest directions right now, but existing methods struggle with consistency, and beyond 30 seconds the model starts "forgetting" earlier content. Context Forcing identifies the structural root cause: mainstream streaming tuning frameworks train a long-context student under supervision from a short-context teacher limited to 5-second windows. If the teacher can't see the full history, it can't teach global coherence.

The fix is intuitive: let the teacher see the entire generation history too. To make this computationally feasible at 2-minute durations, they introduce a Slow-Fast Memory architecture that compresses the linearly growing visual context into two-rate memory streams, drastically reducing redundancy.
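A toy can illustrate bounded, two-rate memory over a growing history. This hypothetical sketch is not the paper's Slow-Fast Memory, which compresses visual features. Here the fast stream keeps the most recent frames at full rate. The slow stream keeps evicted frames at a coarser stride that doubles whenever its buffer overflows. Total memory never exceeds `fast_size + slow_size` entries however long the video runs, yet the slow stream always reaches back to the first frame. The class name, parameters, and doubling policy are all illustrative assumptions.

```python
from collections import deque
from typing import Deque, Generic, List, Tuple, TypeVar

T = TypeVar("T")


class SlowFastMemory(Generic[T]):
    """Toy two-rate memory (hypothetical illustration, not Context Forcing's design)."""

    def __init__(self, fast_size: int, slow_size: int) -> None:
        if fast_size < 1 or slow_size < 1:
            raise ValueError("buffer sizes must be positive")
        self.fast_size = fast_size
        self.slow_size = slow_size
        self.stride = 1  # temporal subsampling rate of the slow stream
        self.t = 0       # index the next incoming frame will get
        self.fast: Deque[Tuple[int, T]] = deque()  # recent frames, full rate
        self.slow: List[Tuple[int, T]] = []        # older frames, subsampled

    def add(self, frame: T) -> None:
        self.fast.append((self.t, frame))
        self.t += 1
        if len(self.fast) > self.fast_size:
            t_old, f_old = self.fast.popleft()  # leaves the full-rate window
            if t_old % self.stride == 0:
                self.slow.append((t_old, f_old))
            while len(self.slow) > self.slow_size:
                self.stride *= 2  # halve slow-stream resolution to stay within budget
                self.slow = [(t, f) for t, f in self.slow if t % self.stride == 0]

    def context(self) -> List[T]:
        """Frames to condition on: slow (old, sparse) followed by fast (recent, dense)."""
        return [f for _, f in self.slow] + [f for _, f in self.fast]


mem: SlowFastMemory[int] = SlowFastMemory(fast_size=4, slow_size=3)
for frame in range(12):
    mem.add(frame)
print(mem.context())  # [0, 4, 8, 9, 10, 11]
```

The trade-off is resolution: the further back a frame lies, the sparser its representation. One reasonable reading of why two rates help is that global coherence can get by with coarse long-range context, while motion needs dense recent frames.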

Effective context jumps from the 2-10 seconds of existing methods to over 20 seconds, outperforming LongLive and Infinite-RoPE across consistency metrics.

Key takeaways:
- The consistency problem lives in the supervision signal, not the student model
- Slow-fast memory is a practical answer to linear context growth in video generation
- Teams working on video generation should take note of this teacher-student paradigm fix

Source: Context Forcing: Consistent Autoregressive Video Generation with Long Context

04 Training Your Model Isn't Learning Brevity — the Algorithm Is Biased

When training with verifiable rewards (RLVR), output length often shifts dramatically. Some algorithms make models verbose; others drive them toward extreme brevity, ending in "output collapse." Is the model learning efficient reasoning, or is this a side effect of the algorithm?

LUSPO provides a theoretical decomposition of the factors influencing output length across mainstream RLVR algorithms. The finding: GSPO's loss function has a systematic length bias — long and short responses contribute unequally to the gradient, making length uncontrollable during training.

The fix: make the sequence-level policy optimization loss length-unbiased, mathematically eliminating the bias. LUSPO consistently outperforms GRPO and GSPO on both mathematical and multimodal reasoning tasks.
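The length dependence shows up directly in GSPO's gradient. GSPO's sequence-level ratio is the geometric mean of token ratios, s_i = (pi_theta(y_i|x) / pi_theta_old(y_i|x))^(1/|y_i|). Ignoring clipping, the gradient of s_i * A_i / G therefore spreads A_i across the response's tokens with weight s_i * A_i / (G * |y_i|) each. The sketch below computes those per-token weights at s_i = 1, which holds at the first on-policy step. It compares them with one hypothetical length-unbiased rescaling that multiplies response i's term by |y_i| divided by the mean length. That rescaling is an assumption for illustration; LUSPO's exact loss may differ.

```python
from typing import List


def gspo_token_weights(lengths: List[int], advantages: List[float]) -> List[float]:
    """Per-token gradient weight for each response under GSPO, at s_i = 1, no clipping.

    d/dtheta [s_i * A_i / G] = A_i / (G * |y_i|) * sum_t d/dtheta log pi(y_i,t | ...),
    so every token of response i receives weight A_i / (G * |y_i|).
    """
    g = len(lengths)
    return [a / (g * n) for n, a in zip(lengths, advantages)]


def length_rescaled_token_weights(lengths: List[int], advantages: List[float]) -> List[float]:
    """Hypothetical length-unbiased variant (assumption, not necessarily LUSPO's loss):
    scale response i's term by |y_i| / mean_len, so each token gets A_i / (G * mean_len)."""
    g = len(lengths)
    mean_len = sum(lengths) / g
    return [a / (g * mean_len) for a in advantages]


lengths, advantages = [10, 100], [1.0, 1.0]   # two equally rewarded responses
print(gspo_token_weights(lengths, advantages))             # [0.05, 0.005]
print(length_rescaled_token_weights(lengths, advantages))  # each weight equals 1/110
```

Under GSPO, each token of the 10-token response is pushed ten times harder than each token of the 100-token response, even though both received the same advantage. The rescaled variant gives every token the same weight.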

Key takeaways:
- Length variation during RLVR isn't purely model behavior; a significant portion can come from algorithmic bias
- GSPO has a systematic length bias that can cause output collapse
- If you're hitting abnormal length patterns in RL training, check the algorithm's own bias before tuning the reward

Today's Observation

The clearest signal today: RL training engineering details are becoming the performance bottleneck. LUSPO exposes GSPO's length bias, Dr.Kernel corrects GRPO's multi-turn self-inclusion bias, EBPO patches GRPO's baseline variance problem, and DPPO (from yesterday) revisits PPO's ratio clipping. Four papers from different angles all point to the same conclusion: standard RL algorithms don't transfer cleanly to LLMs. Teams running RL training should systematically audit their pipelines for bias sources, particularly around length bias and advantage estimation.