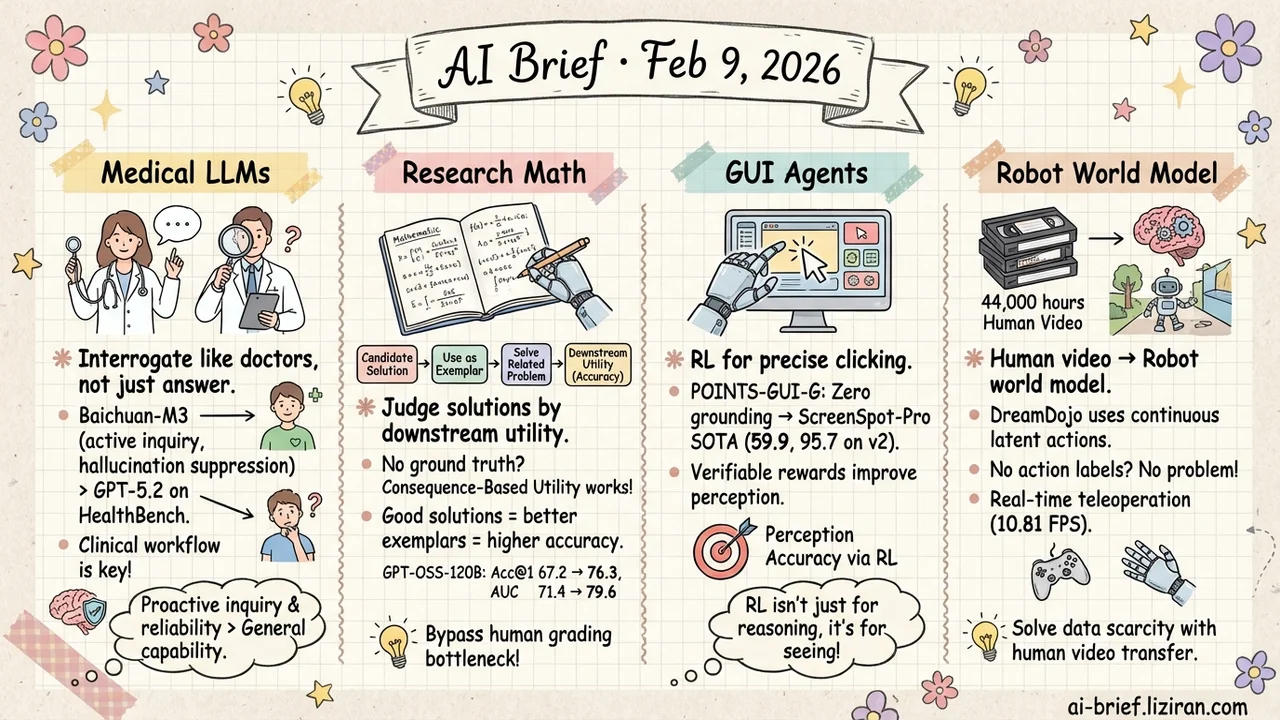

Today's Overview

- Medical LLMs shouldn't just answer questions — they should interrogate like doctors. Baichuan-M3 beats GPT-5.2 across HealthBench by training proactive inquiry and hallucination suppression into the clinical workflow.

- No ground truth for research-level math? Judge solutions by their downstream utility. Consequence-Based Utility uses candidate solutions as few-shot exemplars to solve related problems — good solutions naturally yield higher accuracy.

- GUI agents can learn precise clicking through pure RL. POINTS-GUI-G goes from near-zero grounding to ScreenSpot-Pro SOTA, proving verifiable rewards work for perception, not just reasoning.

- 44,000 hours of human video become a robot world model. DreamDojo uses continuous latent actions to sidestep action-label scarcity, and is distilled to run in real time at 10.81 FPS for teleoperation and planning.

Featured

01 Safety Medical LLMs Should Ask, Not Just Answer

Existing medical LLMs have a fundamental problem: they're passive answer machines. Ask a question, get an answer. But that's not how clinical decision-making works — real doctors probe, follow up, rule things out, and refuse to conclude on incomplete information.

Baichuan-M3 trains this workflow directly: proactive information acquisition to resolve ambiguity, long-horizon reasoning to integrate scattered evidence, and a dedicated hallucination suppression mechanism for factual reliability. On HealthBench, it significantly outperforms GPT-5.2 across clinical inquiry, advisory, and safety dimensions.

The model is open-source — a testable baseline for any team building medical AI products.

Key takeaways:

- The differentiator in medical AI isn't general capability but clinical workflow behaviors: proactive inquiry and hallucination suppression

- Beats GPT-5.2 on HealthBench, open-source and available

- Teams building medical AI should treat "passive Q&A → active clinical reasoning" as a product direction

Source: Baichuan-M3: Modeling Clinical Inquiry for Reliable Medical Decision-Making

02 Evaluation No Ground Truth? Judge Math Solutions by Their Consequences

Reasoning models keep getting stronger, but verifying their output on frontier math problems remains brutal — these problems often lack agreed-upon answers, and human expert review doesn't scale.

Consequence-Based Utility offers an elegant workaround: if a solution is correct, the methodological insights it contains should help solve related, verifiable problems. The approach feeds candidate solutions as in-context exemplars to a model solving related tasks. Good solutions naturally produce higher downstream accuracy.

On GPT-OSS-120B, this pushes Acc@1 from 67.2 to 76.3 and AUC from 71.4 to 79.6, consistently beating reward models and LLM-as-judge approaches.
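The scoring idea described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's implementation: the function names, the prompt template, the exact-match answer check, and the `solver` callable (a stand-in for whatever model answers the related problems) are all hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical types for illustration only.
Problem = Tuple[str, str]       # (question, verifiable reference answer)
Solver = Callable[[str], str]   # takes a prompt, returns an answer string


def consequence_utility(
    target_question: str,
    candidate_solution: str,
    related_problems: Sequence[Problem],
    solver: Solver,
) -> float:
    """Fraction of related problems solved when the candidate is the exemplar."""
    if not related_problems:
        raise ValueError("need at least one related, verifiable problem")
    correct = 0
    for question, answer in related_problems:
        # Assumed prompt layout: the candidate solution is shown as a worked
        # example, then the related problem is posed.
        prompt = (
            f"Worked example:\nQ: {target_question}\nA: {candidate_solution}\n\n"
            f"Now solve:\nQ: {question}\nA:"
        )
        # Assumed grading rule: exact string match after trimming whitespace.
        if solver(prompt).strip() == answer.strip():
            correct += 1
    return correct / len(related_problems)


def rank_candidates(
    target_question: str,
    candidates: Sequence[str],
    related_problems: Sequence[Problem],
    solver: Solver,
) -> List[Tuple[float, str]]:
    """Return (utility, candidate) pairs, highest utility first."""
    scored = [
        (consequence_utility(target_question, c, related_problems, solver), c)
        for c in candidates
    ]
    # Sort on the score only, so ties keep their original order.
    return sorted(scored, key=lambda pair: pair[0], reverse=True)
```

In practice `solver` would wrap a model call, and the related problems would need answers that can be checked automatically; the top-ranked candidate is the one whose presence as an exemplar helped most.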

Key takeaways:

- "Good solutions should transfer" is a practical verification principle that bypasses the human-grading bottleneck

- Consistent advantage over reward models and LLM judges on ranking quality

- Teams training math reasoning models can use this for better data filtering pipelines

03 Agent RL Works for Perception Too, Not Just Reasoning

For GUI agents to complete real-world tasks, step one is seeing accurately — precisely locating buttons, text fields, and icons on screen. Most work fine-tunes models that already have strong spatial awareness (like Qwen3-VL). POINTS-GUI-G goes the other direction: starting from a base model with almost no grounding ability (POINTS-1.5) and building the full pipeline from scratch.

Three pillars: unified multi-source open datasets with difficulty grading, continuous vision encoder fine-tuning for perceptual precision, and RL with verifiable rewards for the final accuracy push. The result: 59.9 on ScreenSpot-Pro (SOTA) and 95.7 on ScreenSpot-v2.

The notable insight: RL here isn't enhancing reasoning — it's improving perception accuracy. GUI grounding is a natural fit for RL because rewards are trivially verifiable.
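To make "trivially verifiable" concrete, here is a minimal sketch of a grounding reward. The source does not spell out the reward POINTS-GUI-G uses; the point-in-box check below is an assumption based on how click accuracy is commonly scored, and the names `click_reward`, `Point`, and `Box` are hypothetical.

```python
from typing import Tuple

Point = Tuple[float, float]               # (x, y) in pixels
Box = Tuple[float, float, float, float]   # (left, top, right, bottom) in pixels


def click_reward(pred: Point, target: Box) -> float:
    """Return 1.0 if the predicted click lands inside the target box, else 0.0."""
    x, y = pred
    left, top, right, bottom = target
    if left > right or top > bottom:
        raise ValueError("box must satisfy left <= right and top <= bottom")
    # Assumed rule: points on the box edge count as hits.
    return 1.0 if (left <= x <= right and top <= y <= bottom) else 0.0
```

For example, `click_reward((120, 48), (100, 40, 180, 60))` returns `1.0`. No learned judge or human label is involved beyond the element's bounding box, which is why this kind of task pairs well with RL.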

Key takeaways:

- Verifiable-reward RL delivers significant gains on perception tasks, not just reasoning

- Building the full pipeline from a weak base model shows data engineering and training strategy matter as much as the foundation

- Teams building GUI agents should look at RL for grounding precision

Source: POINTS-GUI-G: GUI-Grounding Journey

04 Robotics 44,000 Hours of Human Video, One Robot World Model

Training a robot world model — "given an action, predict what happens next" — is bottlenecked by data. Robot-collected, action-labeled data is scarce and narrow. DreamDojo learns from human video instead.

The training corpus is 44,000 hours of egocentric video covering everyday scenarios and fine-grained manipulation, none of it action-labeled. The core trick is to use continuous latent actions as a unified proxy action representation, which lets interaction knowledge transfer from unlabeled human video to the robot domain. After post-training on small-scale target robot data, the model demonstrates physics understanding and precise action controllability. Distilled to run in real time at 10.81 FPS, it supports teleoperation, policy evaluation, and model-based planning.

Key takeaways:

- Large-scale human video pretraining plus small-scale robot post-training is a viable path around robot data scarcity

- Continuous latent actions elegantly sidestep the missing action-label problem

- Teams in embodied AI should track this "human video → robot" knowledge transfer paradigm

Source: DreamDojo: A Generalist Robot World Model from Large-Scale Human Videos

Today's Observation

Two threads worth tracking today.

First, RL with verifiable rewards is expanding from reasoning into perception: POINTS-GUI-G uses RL for GUI grounding precision and VowelPrompt uses GRPO for emotion recognition, adding to the ongoing momentum in code generation and math reasoning. Verifiable-reward RL is becoming a general capability-enhancement paradigm, no longer confined to logical reasoning tasks.

Second, GRPO's various flaws are getting patched at a rapid clip: F-GRPO fixes rare-solution forgetting, yesterday's LUSPO fixed length bias, and EBPO fixed baseline variance. Teams running RL training should actively track these corrections; they often matter more for training stability than scaling up the model.
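For readers who want a reference point for what these patches modify, here is a minimal sketch of the group-relative advantage at the core of GRPO as it is commonly described: each sampled response is scored against the mean and standard deviation of its own sampling group. This is not the variant used by any of the papers named above; the function name, the `eps` value, and the choice of population standard deviation are assumptions.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def group_relative_advantages(
    rewards: Sequence[float], eps: float = 1e-6
) -> List[float]:
    """Normalize each reward against its own sampling group."""
    if not rewards:
        raise ValueError("need at least one reward in the group")
    baseline = mean(rewards)        # the group mean acts as the baseline
    scale = pstdev(rewards) + eps   # eps (assumed) avoids division by zero
    return [(r - baseline) / scale for r in rewards]
```

Two properties are visible even in this toy form. When every response in a group gets the same reward, all advantages are zero and the group contributes no learning signal. And with only a handful of samples per prompt, the group mean is itself a noisy estimate, which is one reason baseline-related fixes can matter for stability.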