Today's Overview

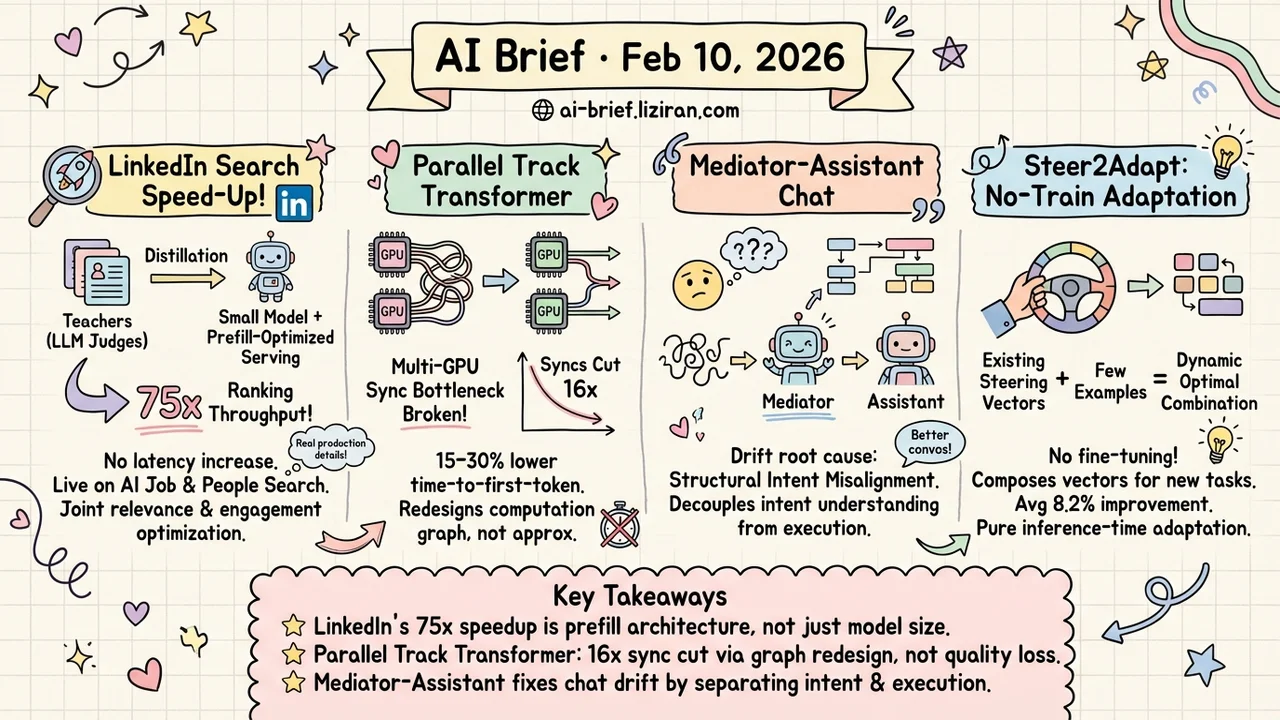

- LinkedIn has put LLM-based search ranking into production. Multi-teacher distillation into a small model, combined with prefill-optimized serving, yields 75x ranking throughput with no increase in latency. It is one of the most detailed public accounts of an LLM search deployment.

- Multi-GPU inference is bottlenecked by synchronization. Parallel Track Transformer cuts cross-device syncs by 16x, delivering 15–30% lower time-to-first-token on vLLM and TensorRT-LLM.

- Multi-turn conversations drift off track — the root cause is structural intent misalignment, not model weakness. A Mediator-Assistant architecture decouples intent understanding from task execution.

- No fine-tuning, no training: LLMs can adapt to new tasks just by composing existing steering vectors. Steer2Adapt discovers the best combination from a few examples, for an average improvement of 8.2%.

Featured

01 Retrieval LinkedIn Ships LLM Search Ranking to Production

LLMs improve relevance in search ranking, but their inference latency makes them impractical to deploy at search scale. Every team trying to put LLMs into search systems eventually hits this wall. LinkedIn's solution has two stages. First, LLM relevance judges act as teachers. Then those teachers are distilled into a lightweight ranking system that combines embedding-based retrieval with a small language model, jointly optimized for relevance and user engagement.
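The distillation idea can be made concrete with a minimal multi-teacher loss for one query's candidate list. This sketch rests on several assumptions, all illustrative and not LinkedIn's published loss:

- each teacher emits a relevance score per candidate;
- teacher scores are blended with fixed weights into a soft target distribution;
- a binary engagement label (for example, a click) enters as a separate term.

The names `teacher_weights`, `alpha`, and `temperature` are likewise hypothetical.

```python
import math
from typing import List, Sequence


def softmax(scores: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over a candidate list."""
    scaled = [s / temperature for s in scores]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def multi_teacher_loss(
    student_scores: Sequence[float],
    teacher_scores: Sequence[Sequence[float]],  # one score list per teacher
    teacher_weights: Sequence[float],           # hypothetical blend weights, summing to 1
    engaged: Sequence[int],                     # 1 if the user engaged with that candidate
    alpha: float = 0.5,                         # hypothetical relevance/engagement trade-off
    temperature: float = 2.0,
) -> float:
    """Blend a soft-label distillation term with an engagement term (illustrative only)."""
    n = len(student_scores)
    # Soft relevance target: weighted mixture of each teacher's softmax over candidates.
    target = [0.0] * n
    for weight, scores in zip(teacher_weights, teacher_scores):
        for i, p in enumerate(softmax(scores, temperature)):
            target[i] += weight * p
    student = softmax(student_scores, temperature)
    # Cross-entropy of the student against the blended teacher distribution.
    distill = -sum(t * math.log(s) for t, s in zip(target, student))
    # Engagement term: listwise cross-entropy toward the engaged candidates.
    positives = [i for i, e in enumerate(engaged) if e]
    student_t1 = softmax(student_scores)
    engage = -sum(math.log(student_t1[i]) for i in positives) / max(len(positives), 1)
    return alpha * distill + (1 - alpha) * engage


teachers = [[3.0, 1.0, 0.0], [2.0, 1.0, 0.0]]  # two teachers scoring three candidates
print(multi_teacher_loss([3.0, 1.0, 0.0], teachers, [0.5, 0.5], engaged=[1, 0, 0]))
```

A student that ranks candidates the way the blended teachers and the observed engagement do gets a lower loss. In practice the blend weights and `alpha` would be tuned rather than fixed.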

On the serving side, they redesigned the architecture around the prefill phase and combined it with model pruning and context compression, achieving a 75x increase in ranking throughput under the same latency constraints. The system is live in AI Job Search and AI People Search.
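Why does the prefill phase matter so much for ranking? A ranking call needs only a score per candidate, not generated text, so it can be answered from a single forward pass over the prompt with no decode loop. The sketch below illustrates this under stated assumptions:

- a yes/no scoring prompt;
- a hypothetical `prefill` function that returns final-position logits;
- `toy_prefill`, a word-overlap stand-in for a real model.

None of this reflects LinkedIn's actual prompt or serving code.

```python
import math
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical interface: one prefill pass over the prompt, returning the
# next-token logits at the final position. No decode loop is involved.
PrefillFn = Callable[[str], Dict[str, float]]


def relevance_score(prefill: PrefillFn, query: str, doc: str) -> float:
    """P(yes) from a single prefill pass, renormalized over the yes/no tokens."""
    prompt = f"Query: {query}\nDocument: {doc}\nRelevant (yes/no):"
    logits = prefill(prompt)
    # A two-way softmax over {yes, no} equals the sigmoid of the logit gap.
    return 1.0 / (1.0 + math.exp(logits["no"] - logits["yes"]))


def rank(prefill: PrefillFn, query: str, docs: Sequence[str]) -> List[Tuple[str, float]]:
    """Score every candidate independently, then sort by descending relevance."""
    scored = [(doc, relevance_score(prefill, query, doc)) for doc in docs]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def toy_prefill(prompt: str) -> Dict[str, float]:
    """Stand-in for a small LM: the 'yes' logit grows with query/document word overlap."""
    query_line, doc_line = prompt.split("\n")[:2]
    query_words = set(query_line[len("Query: "):].lower().split())
    doc_words = set(doc_line[len("Document: "):].lower().split())
    return {"yes": float(len(query_words & doc_words)), "no": 1.0}


for doc, score in rank(toy_prefill, "python backend engineer",
                       ["graphic designer", "python data analyst", "senior python backend engineer"]):
    print(f"{score:.2f}  {doc}")
```

Every candidate costs exactly one prefill pass. That is why serving optimizations that target prefill (pruning, shorter contexts) translate directly into ranking throughput.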

This is one of the most complete public accounts of LLM search deployment — from distillation strategy to serving architecture, with reusable engineering details throughout. If you're building search or recommendation systems, read this closely.

Key takeaways:
- Multi-teacher distillation jointly optimizes relevance and engagement, not a single objective
- The 75x speedup comes from the prefill-oriented serving architecture, not from model compression alone
- Already validated in production on two core LinkedIn search products

Source: Semantic Search At LinkedIn

02 Efficiency Parallel Track Transformer Cuts GPU Syncs by 16x

Tensor parallelism is the standard approach to multi-GPU inference, but it has a pain point. Every transformer layer requires cross-GPU all-reduce syncs (in the common Megatron-style layout, one after attention and one after the MLP), and communication overhead grows as more GPUs are added. Parallel Track Transformer redesigns how the computation graph is partitioned so that each device's compute track runs as independently as possible, cutting the number of sync operations to 1/16th of the original.
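For readers new to tensor parallelism, the sketch below shows where the sync comes from. When a weight matrix is sharded by input rows, each GPU holds only a partial output. An all-reduce (an elementwise sum across devices) is required before the next step can proceed.

The sync-count helpers rest on two assumptions:

- standard Megatron-style tensor parallelism uses two all-reduces per layer;
- a hypothetical track schedule syncs once every `layers_per_sync` layers.

The value 8 is chosen only to reproduce the headline 16x ratio and is not taken from the paper.

```python
from typing import List

Matrix = List[List[float]]  # w[i][j]: i indexes input dims, j indexes output dims


def matvec_rows(x: List[float], w: Matrix, rows: range) -> List[float]:
    """One device's partial product, using only its shard of input rows."""
    return [sum(x[i] * w[i][j] for i in rows) for j in range(len(w[0]))]


def all_reduce(partials: List[List[float]]) -> List[float]:
    """What an all-reduce computes: the elementwise sum of every device's partial."""
    return [sum(vals) for vals in zip(*partials)]


def row_parallel_matvec(x: List[float], w: Matrix, num_devices: int) -> List[float]:
    """Shard W by input rows; no device holds a usable output until the sync."""
    if len(x) % num_devices:
        raise ValueError("input dim must divide evenly across devices")
    shard = len(x) // num_devices
    partials = [
        matvec_rows(x, w, range(dev * shard, (dev + 1) * shard))
        for dev in range(num_devices)
    ]
    return all_reduce(partials)  # the cross-GPU synchronization point


def syncs_standard_tp(num_layers: int) -> int:
    """Megatron-style tensor parallelism: all-reduce after attention and after the MLP."""
    return 2 * num_layers


def syncs_parallel_tracks(num_layers: int, layers_per_sync: int) -> int:
    """Hypothetical track schedule: tracks run independently and sync once per block."""
    return num_layers // layers_per_sync


x = [1.0, 2.0, 3.0, 4.0]
w = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, -1.0]]
print(row_parallel_matvec(x, w, num_devices=2))  # [12.0, 1.0], same as the unsharded product
print(syncs_standard_tp(32), syncs_parallel_tracks(32, layers_per_sync=8))  # 64 4
```

With 32 layers, standard tensor parallelism issues 64 all-reduces per forward pass, while the hypothetical track schedule issues 4. This is the kind of 16x gap the paper reports.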

The reduction does not come from approximating the original computation, and model quality stays competitive in the reported experiments. Integrated into TensorRT-LLM and vLLM, benchmarks show 15–30% lower time-to-first-token, 2–12% lower per-token generation time, and up to 31.9% higher throughput.

An architecture-level optimization worth watching for any team running multi-GPU serving.

Key takeaways:
- The 16x sync reduction comes from restructuring the computation graph, not from approximation or precision loss
- Benchmarked on two major serving frameworks with concrete numbers
- Teams sensitive to multi-GPU deployment costs should track this direction

Source: Parallel Track Transformers: Enabling Fast GPU Inference with Reduced Synchronization

03 Agent Multi-Turn Drift Is an Architecture Problem, Not a Model Problem

Anyone who's used ChatGPT has experienced this: after a few follow-ups, the model starts drifting — responses diverge further from what you actually want. Prior work called this "Lost in Conversation" and blamed model capacity. This paper offers a different diagnosis: the root cause is structural intent misalignment, not insufficient model capability.

The authors argue theoretically that scaling up models or improving training won't fix this, because conversational context is inherently structurally ambiguous. Their Mediator-Assistant architecture separates "understanding what the user actually wants" from "executing the task" — the Mediator translates ambiguous user input into explicit structured instructions based on interaction history, then hands off to the Assistant.
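The Mediator-Assistant split can be sketched as two functions with a structured hand-off. Everything in the sketch is hypothetical:

- the `Instruction` schema;
- the toy rule that treats the first user turn as the goal and later turns as constraints;
- the `assistant` stub, which returns its prompt instead of calling a model.

The point is the interface: the Assistant receives one explicit instruction rather than the raw, ambiguous conversation.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Instruction:
    """Explicit, structured task spec produced by the Mediator (hypothetical schema)."""
    goal: str
    constraints: List[str] = field(default_factory=list)


def mediator(history: List[str], latest: str) -> Instruction:
    """Resolve a terse follow-up against earlier turns.

    Toy rule: the first user turn is the goal and later turns are added
    constraints. A real Mediator would be an LLM call over the history.
    """
    turns = history + [latest]
    return Instruction(goal=turns[0], constraints=turns[1:])


def assistant(instr: Instruction) -> str:
    """Executes only the explicit instruction; it never sees the raw chat history."""
    prompt = f"Task: {instr.goal}"
    if instr.constraints:
        prompt += "\nConstraints:\n" + "\n".join(f"- {c}" for c in instr.constraints)
    return prompt  # stand-in for an LLM completion on this prompt


history = ["Write a SQL query listing customers", "only ones from Canada"]
print(assistant(mediator(history, "sort by signup date")))
```

Note that "sort by signup date" on its own is ambiguous. After mediation, the Assistant sees it alongside the original goal and the earlier constraint.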

Directly relevant for teams building chat products or agent systems.

Key takeaways:
- Multi-turn performance degradation is rooted in interaction-level intent ambiguity, not model weakness
- Decoupling intent understanding from task execution is a deployable architectural pattern
- Worth tracking for anyone building conversational systems or agent orchestration

Source: Intent Mismatch Causes LLMs to Get Lost in Multi-Turn Conversation

04 Training Steer2Adapt: Compose Steering Vectors, Skip Fine-Tuning

Activation steering (intervening on a model's internal activation directions) is a lightweight way to control LLMs. However, existing methods use one static direction per task and fall short on complex tasks. Steer2Adapt takes a different approach. Its premise is that many tasks share a few underlying conceptual dimensions (reasoning ability, safety, etc.). Instead of learning new steering vectors from scratch, it maintains a reusable low-dimensional semantic prior subspace and, for each new task, uses a handful of examples to discover the best linear combination of its directions.
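The composition step can be illustrated with a minimal sketch. A fixed basis of steering directions stands in for the shared subspace, and a new task is handled by searching only over the combination weights, using a few examples.

Three parts of the sketch are assumptions made for illustration:

- the basis vectors;
- the exhaustive grid search;
- the activation-matching score.

Steer2Adapt's actual search procedure and objective may differ. In a real system the score would come from the model's outputs on the few examples.

```python
import itertools
from typing import Callable, List, Sequence, Tuple

Vector = List[float]


def steer(hidden: Vector, basis: Sequence[Vector], weights: Sequence[float]) -> Vector:
    """Add a composed steering direction to a hidden state: h + sum_k w_k * v_k."""
    out = list(hidden)
    for w, v in zip(weights, basis):
        for i, vi in enumerate(v):
            out[i] += w * vi
    return out


def fit_weights(
    basis: Sequence[Vector],
    score: Callable[[Tuple[float, ...]], float],  # higher is better, computed on a few examples
    grid: Sequence[float] = (-1.0, 0.0, 1.0),
) -> Tuple[float, ...]:
    """Search only over combination weights; the basis itself is never retrained.

    Exhaustive grid search is a simple stand-in; Steer2Adapt's own search may differ.
    """
    return max(itertools.product(grid, repeat=len(basis)), key=score)


# Hypothetical shared subspace: two fixed concept directions in a 3-d toy space.
basis = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
# A few (hidden state, desired hidden state) pairs for the new task (toy data).
examples = [([0.0, 0.0, 0.5], [1.0, -1.0, 0.5]), ([0.2, 0.1, 0.0], [1.2, -0.9, 0.0])]


def few_shot_score(weights: Tuple[float, ...]) -> float:
    """Toy proxy: negative squared distance between steered and desired activations."""
    err = 0.0
    for hidden, target in examples:
        steered = steer(hidden, basis, weights)
        err += sum((a - b) ** 2 for a, b in zip(steered, target))
    return -err


best = fit_weights(basis, few_shot_score)
print(best)  # (1.0, -1.0)
```

Only the two weights are learned per task. Switching tasks therefore means swapping a tiny weight vector rather than loading a fine-tuned model.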

Across 9 tasks and 3 models, it delivers an average 8.2% improvement with strong data efficiency and stability. This is purely inference-time adaptation with no weight changes, suited to applications that need rapid task switching without per-scenario fine-tuning.

Key takeaways:
- Upgrades steering vectors from "one per task" to "shared subspace + dynamic composition", which is far more flexible
- Pure inference-time adaptation with just a few examples, no training required
- Worth trying for deployment scenarios that require fast multi-task switching

Source: Steer2Adapt: Dynamically Composing Steering Vectors Elicits Efficient Adaptation of LLMs

Also Worth Noting

Today's Observation

No high-scoring highlight papers today, but two industrial papers from LinkedIn (semantic search and user representation) signal a trend: big tech is aggressively validating the "LLM judge → distill → ship small model" pipeline for search and recommendation. If you're upgrading search or recommendation systems with LLMs, LinkedIn's full-stack approach — from distillation to serving optimization — is the most detailed public reference available right now.