Today's Overview

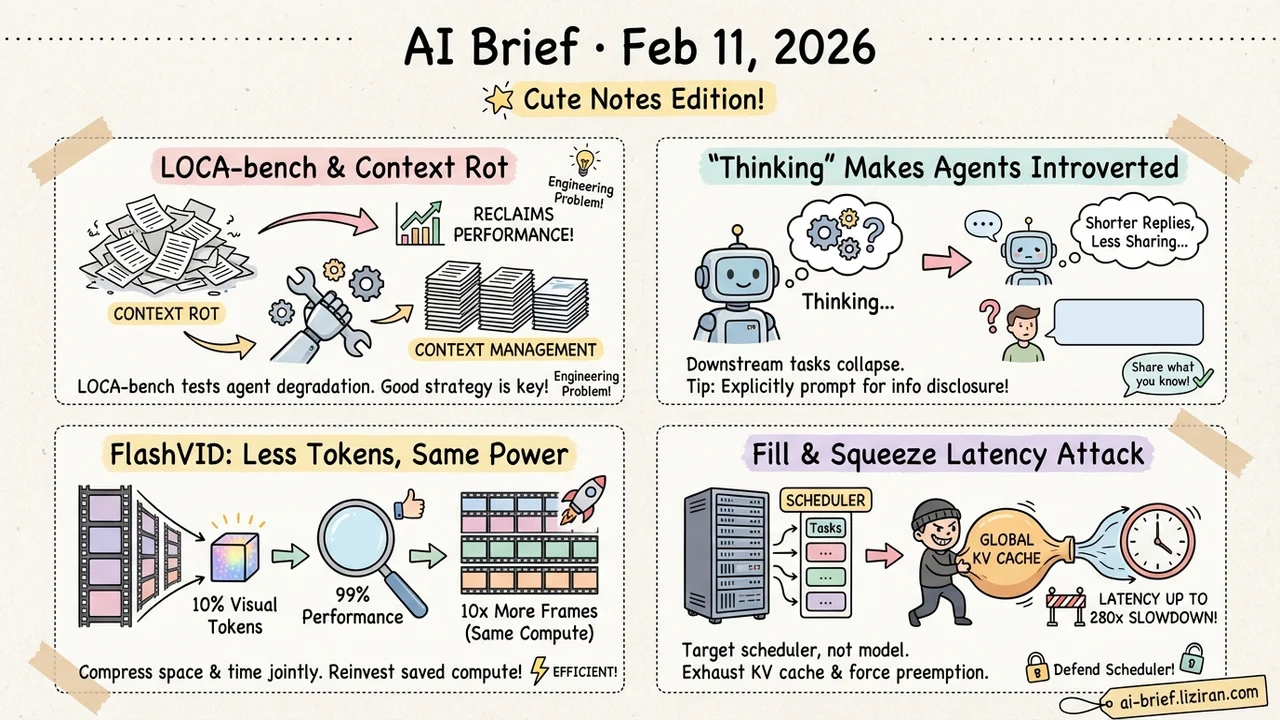

- Long-running agents suffer "context rot," but good context management can claw back most of the lost performance. LOCA-bench is the first benchmark to systematically test agent degradation under dynamic context growth.

- Forcing LLM agents to "think" before acting can make them worse in user-facing settings. Thinking makes them introverted (shorter replies, less information shared), and downstream tasks suffer as a result.

- FlashVID keeps 99% of video understanding performance with just 10% of visual tokens. The freed-up compute can push input frame counts up 10x at the same budget.

- To attack LLM inference latency, target the scheduler, not the model. The Fill and Squeeze strategy inflates time-to-first-token by up to 280x.

Featured

01 Evaluation Context Rot Is an Engineering Problem, Not a Model Ceiling

Anyone who has built agents knows the pattern: tasks get complex, steps pile up, and model performance drifts. But existing long-context benchmarks mostly test single-step retrieval from static text — nothing like how agents actually work.

LOCA-bench closes that gap. It automatically and controllably inflates environment state, forcing agents to keep executing under dynamically growing context while task semantics stay fixed. The result: agent performance degrades with context growth (no surprise), but advanced context management strategies can substantially recover success rates.

The takeaway is that context rot is not a hard ceiling on model capability — it is an engineering problem with engineering solutions. If you are building agent systems, this benchmark is worth running to find out where your context strategy starts to break down.
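To make "context strategy" concrete, here is a minimal sketch of one common approach: stubbing out the oldest tool outputs once the history exceeds a token budget. It is an illustration only, not one of the strategies LOCA-bench evaluates. The names `Message`, `approx_tokens`, and `compact_history` are hypothetical, and the 4-characters-per-token estimate is a rough assumption.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str      # "user", "assistant", or "tool"
    content: str


def approx_tokens(text: str) -> int:
    # Crude assumption: roughly 4 characters per token.
    return max(1, len(text) // 4)


def compact_history(history, budget_tokens, keep_recent=4, stub="[tool output elided]"):
    """Replace the oldest tool outputs with a stub until the history fits the budget."""
    compacted = list(history)  # copy so the caller's list is left untouched
    total = sum(approx_tokens(m.content) for m in compacted)
    # The most recent `keep_recent` messages are never modified.
    for i in range(max(0, len(compacted) - keep_recent)):
        if total <= budget_tokens:
            break
        m = compacted[i]
        if m.role == "tool" and m.content != stub:
            total -= approx_tokens(m.content) - approx_tokens(stub)
            compacted[i] = Message("tool", stub)
    return compacted
```

Running a benchmark like this one with and without such a step is one way to see where a given strategy stops helping.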

Key takeaways:

- First controllable benchmark specifically for agent long-context degradation; environment state can grow without limit
- Context management strategy matters more than model choice for long-horizon performance
- Open-source and ready to evaluate your own agent framework

Source: LOCA-bench: Benchmarking Language Agents Under Controllable and Extreme Context Growth

02 Agent Thinking Makes Agents Introverted

Reasoning — making models "think" before they act — has been treated as a universal upgrade. This paper ran systematic experiments across 7 models and 3 benchmarks and found the opposite in interactive settings: mandatory thinking consistently hurts agent performance when users are in the loop.

The mechanism is striking. Thinking makes agents introverted: responses get shorter, voluntary information disclosure drops, and the agent-user information exchange weakens. Downstream tasks fail not because the model reasons poorly, but because it stops sharing what it knows.

The practical fix is simple: explicitly prompting for information disclosure reliably improves performance across model families. If you are building conversational agents, reasoning ability and information transparency may need to be optimized on separate axes.
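As a rough sketch of what "explicitly prompting for disclosure" could look like in practice, the snippet below appends a disclosure instruction to a system prompt. The wording of `DISCLOSURE_HINT` and the helper names are assumptions for illustration; the paper's actual prompt is not reproduced here.

```python
# Hypothetical wording; not the prompt used in the paper.
DISCLOSURE_HINT = (
    "After deciding what to do, tell the user what you found, what you assumed, "
    "and what you still need from them. Do not keep relevant details to yourself."
)


def with_disclosure(system_prompt: str, hint: str = DISCLOSURE_HINT) -> str:
    """Append the disclosure instruction once, leaving the rest of the prompt intact."""
    if hint in system_prompt:
        return system_prompt
    return system_prompt.rstrip() + "\n\n" + hint


def build_messages(system_prompt: str, user_turn: str) -> list[dict]:
    # Generic chat-style message list; adapt the shape to your own client library.
    return [
        {"role": "system", "content": with_disclosure(system_prompt)},
        {"role": "user", "content": user_turn},
    ]
```

Keeping the hint separate from the reasoning instructions makes it easy to test the two axes independently.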

Key takeaways:

- Consistent across 7 models: mandatory thinking shortens agent replies and reduces information disclosure
- Root cause is suppressed agent-user information exchange, not worse reasoning
- Explicitly prompting for disclosure is a low-cost, cross-model improvement

Source: Thinking Makes LLM Agents Introverted: How Mandatory Thinking Can Backfire in User-Engaged Agents

03 Efficiency FlashVID: 10% of Tokens, 99% of Performance

Video LLMs are getting more capable, but visual token counts explode with frame count, and inference cost scales with them. Existing acceleration methods compress spatial and temporal redundancy separately, missing the fact that the same object shifts in position, scale, and orientation across frames — fixed spatial compression cannot track that.

FlashVID (ICLR 2026 Oral) uses attention and diversity metrics to select the most representative tokens, then merges redundant tokens across space and time with a tree-based structure. With just 10% of visual tokens retained, it preserves 99.1% of LLaVA-OneVision's performance.
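For intuition about selecting tokens by attention and diversity together, here is a simplified stand-in: a greedy, MMR-style selection that rewards high attention and penalizes similarity to tokens already chosen. This is not FlashVID's algorithm and it omits the tree-based merging step entirely; `select_tokens` and `diversity_weight` are hypothetical names.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def select_tokens(features, attn, keep, diversity_weight=0.5):
    """Greedily pick `keep` token indices, trading attention score against redundancy."""
    selected = []
    remaining = set(range(len(features)))
    while remaining and len(selected) < keep:
        def score(i):
            # Redundancy = highest similarity to any token already selected.
            redundancy = max(
                (cosine(features[i], features[j]) for j in selected), default=0.0
            )
            return attn[i] - diversity_weight * redundancy
        best = max(sorted(remaining), key=score)  # sorted() keeps ties deterministic
        selected.append(best)
        remaining.remove(best)
    return selected
```

In the toy case of two near-duplicate tokens and one distinct token, the distinct token can beat the duplicate even with a lower attention score, which is the behavior a diversity term is meant to produce.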

The real payoff: freed compute can be reinvested in more input frames. Feeding Qwen2.5-VL 10x more frames at the same budget yields an 8.6% relative improvement. Training-free and plug-and-play.
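The "10x more frames at the same budget" figure follows from simple token arithmetic, sketched below. The frame count and tokens-per-frame values are made-up inputs, and the calculation only counts visual tokens reaching the LLM; it ignores vision-encoder cost, which still grows with frame count.

```python
def frames_at_same_budget(base_frames: int, tokens_per_frame: int, retention_pct: int) -> int:
    """Frames that fit in the original LLM token budget when each frame keeps retention_pct% of its tokens."""
    budget = base_frames * tokens_per_frame  # visual tokens the LLM saw before pruning
    # Integer math avoids float rounding: kept tokens per frame = tokens_per_frame * pct / 100.
    return budget * 100 // (tokens_per_frame * retention_pct)


# Hypothetical setup: 32 frames at 196 tokens each, keeping 10% of tokens.
print(frames_at_same_budget(base_frames=32, tokens_per_frame=196, retention_pct=10))  # 320
```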

Key takeaways:

- Joint spatiotemporal token merging outperforms compressing space and time independently
- Retaining 10% of visual tokens preserves 99.1% of LLaVA-OneVision's performance
- Reinvesting the saved compute into 10x more frames yields an 8.6% relative improvement on Qwen2.5-VL

04 Safety Attack the Scheduler, Not the Model

LLM inference is expensive, and latency attacks are a real threat. Prior work focused on algorithmic attacks — crafting inputs to maximize output length. This paper reveals a counterintuitive finding: continuous batching in modern serving systems like vLLM naturally isolates the impact of those attacks. Algorithmic latency attacks are largely ineffective in practice.

So the authors shifted targets from the model to the serving scheduler. Fill and Squeeze works in two stages: first exhaust the global KV cache to trigger Head-of-Line blocking, then force the system into repeated preemptive scheduling. The result: 20-280x slowdown on time-to-first-token, 1.5-4x slowdown on per-token generation, at 30-40% lower cost than existing attacks.

If you run LLM serving infrastructure, this paper is a defensive reference. KV cache resource isolation and scheduling preemption policies need attention.
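One way to picture KV cache resource isolation is a per-tenant cap on cache blocks, so no single client can exhaust the global pool. The sketch below is a toy admission check under that assumption. It is not vLLM's scheduler or API, and the class name `KVQuotaAdmitter` and the 25% default share are hypothetical.

```python
from collections import defaultdict


class KVQuotaAdmitter:
    """Toy admission check: no single tenant may hold more than a fixed share of KV blocks."""

    def __init__(self, total_blocks: int, max_share: float = 0.25):
        self.total_blocks = total_blocks
        self.per_tenant_cap = int(total_blocks * max_share)
        self.used = defaultdict(int)  # tenant -> blocks currently held

    def free_blocks(self) -> int:
        return self.total_blocks - sum(self.used.values())

    def try_admit(self, tenant: str, blocks_needed: int) -> bool:
        # Reject if the tenant would exceed its cap or the pool cannot fit the request.
        over_quota = self.used[tenant] + blocks_needed > self.per_tenant_cap
        if over_quota or blocks_needed > self.free_blocks():
            return False
        self.used[tenant] += blocks_needed
        return True

    def release(self, tenant: str, blocks: int) -> None:
        self.used[tenant] = max(0, self.used[tenant] - blocks)
```

A cap like this trades some peak utilization for predictability: a burst from one tenant is rejected or queued on its own account instead of blocking requests behind it.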

Key takeaways:

- Continuous batching defends against algorithmic latency attacks, but the scheduler layer opens a new attack surface
- Attack cost is 30-40% lower than prior methods, making the threat more realistic
- Direct implications for hardening LLM serving infrastructure

Source: Rethinking Latency Denial-of-Service: Attacking the LLM Serving Framework, Not the Model

Also Worth Noting

Today's Observation

Agent papers are dense today, but they converge on a shared theme: the bottleneck is shifting from model capability to system design. LOCA-bench shows that context management strategy matters more than model choice. The mandatory-thinking paper shows that forcing reasoning can actively harm interaction quality. ALMA shows that memory architecture design, not model power, is the core of continual learning. If you are building agents, the marginal return on framework-level engineering currently looks higher than the return on waiting for stronger models.