Today's Overview

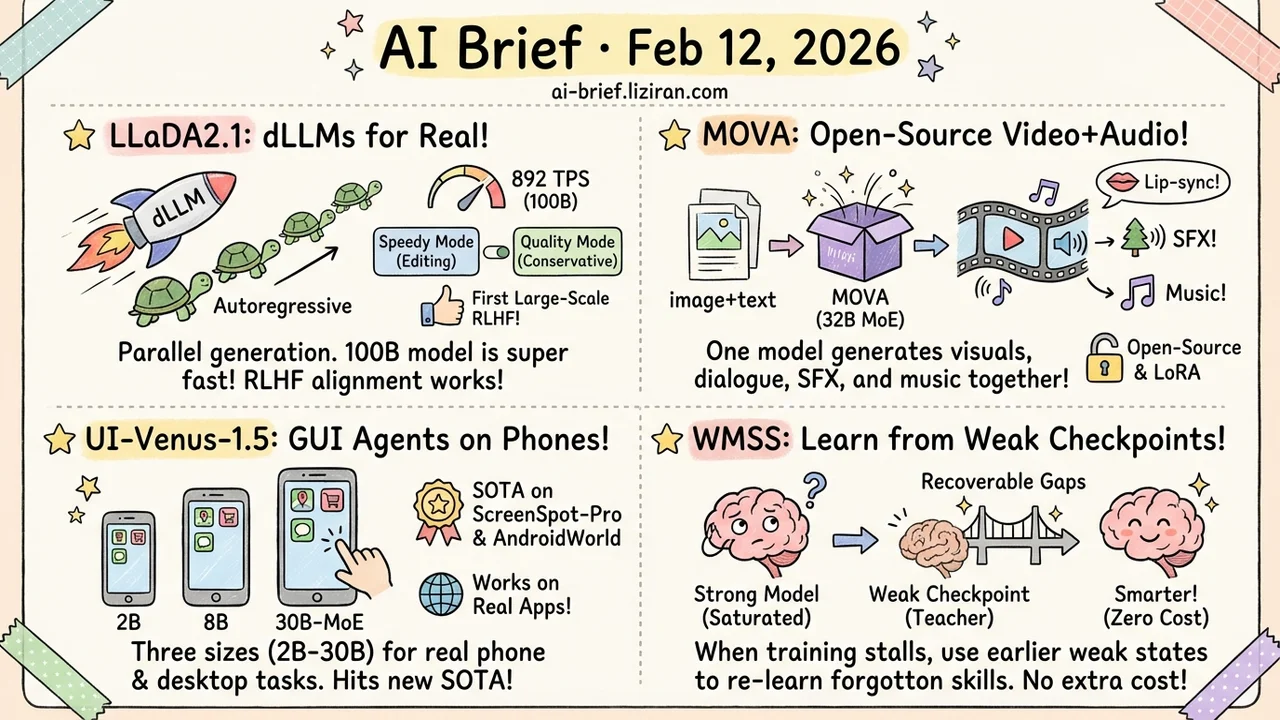

- Text diffusion models are no longer a proof of concept. LLaDA2.1's 100B model hits 892 tokens per second (TPS) on code tasks and is the first diffusion LLM (dLLM) to undergo large-scale RL training.

- Open-source video+audio joint generation is here. MOVA generates visuals, dialogue, sound effects, and music in a single model.

- GUI agents that actually work on real phones. UI-Venus-1.5 sets new SOTA on ScreenSpot-Pro and AndroidWorld across three model sizes from 2B to 30B.

- When post-training saturates, teach from your own weak checkpoints. WMSS uses earlier model states to recover forgotten capabilities at zero inference cost.

Featured

01 Architecture Text Diffusion Finally Hits Practical Speed

Autoregressive generation — one token at a time — dominates LLMs but is inherently serial. Text diffusion models (dLLMs) can theoretically generate in parallel, but previous attempts fell short on both speed and quality.

LLaDA2.1 changes this with two key moves. First, it combines Token-to-Token editing with the standard Mask-to-Token scheme via an adjustable threshold. "Speedy Mode" aggressively lowers the threshold and relies on editing passes to clean up; "Quality Mode" stays conservative for benchmark-grade output. Second, it introduces the first large-scale RL framework for dLLMs, using specialized gradient estimation to make RLHF-style alignment work with diffusion dynamics.
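To make the threshold idea concrete, here is a toy sketch of one way threshold-gated parallel decoding with editing could look. It is not LLaDA2.1's algorithm: `toy_propose`, its confidence formula, the fallback rule, and the example thresholds are all hypothetical stand-ins chosen so the behavior is easy to follow.

```python
MASK = None
TARGET = ["def", "add", "(", "a", ",", "b", ")", ":"]


def toy_propose(tokens, i):
    """Hypothetical stand-in for a dLLM: return (candidate, confidence) for slot i.

    Confidence grows as more slots are filled. With little context, the toy
    model guesses wrong ("?") at odd positions.
    """
    filled = sum(t is not MASK for t in tokens)
    confidence = 0.3 + 0.7 * filled / len(tokens)
    well_conditioned = filled >= len(tokens) // 2
    candidate = TARGET[i] if (well_conditioned or i % 2 == 0) else "?"
    return candidate, confidence


def decode_step(tokens, propose, threshold):
    """One parallel step over every slot.

    A proposal that clears the threshold either fills a masked slot
    (Mask-to-Token) or overwrites an existing token (Token-to-Token).
    """
    proposals = [propose(tokens, i) for i in range(len(tokens))]
    out = list(tokens)
    for i, (candidate, confidence) in enumerate(proposals):
        if confidence >= threshold:
            out[i] = candidate
    if out == tokens and MASK in tokens:
        # Assumed fallback: if nothing changed, commit the single most
        # confident masked slot so decoding always makes progress.
        masked = [i for i, t in enumerate(tokens) if t is MASK]
        best = max(masked, key=lambda i: proposals[i][1])
        out[best] = proposals[best][0]
    return out


def decode(length, propose, threshold, max_steps=50):
    """Repeat decode_step until the sequence stops changing.

    Returns (tokens, number_of_steps_that_changed_something).
    """
    tokens = [MASK] * length
    for step in range(1, max_steps + 1):
        next_tokens = decode_step(tokens, propose, threshold)
        if next_tokens == tokens:
            return tokens, step - 1
        tokens = next_tokens
    return tokens, max_steps


for name, threshold in [("speedy", 0.25), ("quality", 0.9)]:
    result, steps = decode(len(TARGET), toy_propose, threshold)
    print(name, steps, result)
```

In this toy, the low threshold commits every slot at once, including some wrong guesses, and a later editing pass repairs them. The high threshold fills slots one at a time and takes more steps. The numbers say nothing about real-world quality; they only show how a single threshold can trade step count against caution.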

The 100B model hits 892 TPS on HumanEval+ — far beyond autoregressive models of comparable size. dLLMs just moved from "interesting research direction" to "worth seriously evaluating."

Key takeaways:

- Dual-mode decoding with switchable speed/quality is the key design for practical deployment
- First RL framework for dLLMs closes the alignment gap
- 892 TPS on coding tasks at 100B scale: the number is the argument

Source: LLaDA2.1: Speeding Up Text Diffusion via Token Editing

02 Multimodal No More "Generate Video, Then Dub"

The standard workflow for AI video with audio is cascaded: generate video first, then run a separate model for audio. This adds a second model's inference cost, compounds errors across stages, and often leaves the audio out of sync with the picture. Veo 3 and Sora 2 proved joint generation works, but both are closed-source.

MOVA is the first open-source model for joint video-audio generation. Built on an MoE architecture with 32B total parameters (18B active), it handles lip-synced speech, environment-matched sound effects, and content-aligned background music from image+text input. Weights, code, LoRA fine-tuning support, and prompt enhancement tools are all released.

For teams building video generation into products, the "visuals + audio in one pass" capability no longer requires stitching your own pipeline.

Key takeaways:

- First open-source joint video-audio generation model, built on an MoE architecture with 32B total parameters (18B active)
- Covers lip sync, environmental sound effects, and background music in a single model
- Fully open-source with LoRA fine-tuning support for direct integration

Source: MOVA: Towards Scalable and Synchronized Video-Audio Generation

03 Agent GUI Agents Need More Than a Big Model

GUI agents — AI that operates phone and desktop interfaces — are stuck between capability and deployability. Large models handle complex tasks but are too heavy; lightweight ones fail on multi-step navigation.

UI-Venus-1.5 ships three variants: 2B, 8B, and 30B-A3B (MoE), covering edge to cloud. Three technical upgrades drive the results: a 10B-token mid-training stage that teaches GUI semantics, online RL with full-trajectory rollouts (not single-step scoring) for long-horizon navigation, and model merging that fuses grounding, web, and mobile specialists into a single checkpoint. It hits 69.6% on ScreenSpot-Pro and 77.6% on AndroidWorld — both new SOTA.
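The report summary above does not say which merging method is used. As an illustration only, the simplest form of model merging is a weighted average of matching parameters across specialist checkpoints. The sketch below assumes checkpoints are plain dicts mapping parameter names to flat lists of floats; `merge_checkpoints` and the specialist names are hypothetical.

```python
def merge_checkpoints(checkpoints, weights=None):
    """Merge checkpoints by weighted parameter averaging.

    checkpoints: list of dicts {param_name: list of floats}, same keys and shapes.
    weights: optional per-checkpoint weights, normalized to sum to 1.
    """
    if not checkpoints:
        raise ValueError("need at least one checkpoint")
    if weights is None:
        weights = [1.0] * len(checkpoints)
    if len(weights) != len(checkpoints):
        raise ValueError("one weight per checkpoint is required")
    total = sum(weights)
    weights = [w / total for w in weights]

    names = set(checkpoints[0])
    if any(set(ckpt) != names for ckpt in checkpoints):
        raise ValueError("checkpoints must share the same parameter names")

    merged = {}
    for name in checkpoints[0]:
        tensors = [ckpt[name] for ckpt in checkpoints]
        size = len(tensors[0])
        if any(len(t) != size for t in tensors):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        # Element-wise weighted average across all checkpoints.
        merged[name] = [
            sum(w * t[j] for w, t in zip(weights, tensors)) for j in range(size)
        ]
    return merged


# Hypothetical specialists with a single two-element parameter each.
grounding = {"w": [1.0, 2.0]}
web = {"w": [3.0, 4.0]}
mobile = {"w": [5.0, 6.0]}
merged = merge_checkpoints([grounding, web, mobile])
print(merged)
```

Real merging pipelines often go beyond uniform averaging, for example by weighting specialists unevenly or merging only parameter deltas from a shared base, so treat this as the baseline idea rather than the report's recipe.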

The practical detail that stands out: it actually works on Chinese mobile apps in real-world testing, not just on benchmarks. That is rare for GUI agent papers.

Key takeaways:

- Three model sizes cover edge deployment to cloud serving
- Online RL with full-trajectory training is the key breakthrough for long-horizon GUI navigation
- Tested and working on real mobile apps, not just benchmark numbers

Source: UI-Venus-1.5 Technical Report

04 Training Your Model Forgot Things — Its Weak Self Remembers

Post-training eventually hits a saturation wall: the model is already highly confident, and further training yields diminishing returns. Existing methods keep reinforcing target answers, but WMSS takes a different approach — it uses the model's own earlier, weaker checkpoints as a teacher.

The method identifies "recoverable learning gaps" through entropy dynamics: places where the weak checkpoint still performs well but the strong model has regressed. Compensatory learning then patches these gaps. It improves both math reasoning and code generation, and critically, adds zero inference cost — the change is purely in the training pipeline.
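The exact entropy-based criterion is not spelled out above, so the following is only a rough sketch of the general idea: compare a weak and a strong checkpoint on the same examples and flag those where the weak one still gives the correct answer clearly more probability. The function name, the `margin` parameter, and the use of a plain probability gap (with the strong model's entropy reported as context) are assumptions, not the paper's method.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def find_recoverable_gaps(weak_probs, strong_probs, correct_idx, margin=0.1):
    """Flag examples where the weak checkpoint beats the strong one.

    weak_probs / strong_probs: per-example probability distributions over answers.
    correct_idx: index of the correct answer for each example.
    margin: assumed minimum probability gap for an example to count as a gap.
    """
    gaps = []
    for i, (weak, strong, y) in enumerate(zip(weak_probs, strong_probs, correct_idx)):
        if weak[y] - strong[y] > margin:
            gaps.append(
                {
                    "example": i,
                    "weak_p": weak[y],
                    "strong_p": strong[y],
                    # Low entropy here means the strong model is confidently wrong.
                    "strong_entropy": entropy(strong),
                }
            )
    return gaps


# Hypothetical two-example, two-answer setup.
weak = [[0.7, 0.3], [0.4, 0.6]]
strong = [[0.2, 0.8], [0.1, 0.9]]
correct = [0, 1]
for gap in find_recoverable_gaps(weak, strong, correct):
    print(gap)
```

Examples flagged this way would be candidates for extra training signal from the weak checkpoint. Everything happens offline on training data, which is consistent with the claim that inference cost is unchanged.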

For teams deep in post-training optimization, this reframes the problem: instead of always chasing better data and stronger rewards, look at what your model has already forgotten.

Key takeaways:

- Saturation bottleneck is a real pain point: existing methods produce diminishing returns
- Weak checkpoints contain signal the strong model has lost
- Zero inference overhead; deployment is unchanged

Source: Weak-Driven Learning: How Weak Agents make Strong Agents Stronger

Also Worth Noting

Today's Observation

A clear trend runs through today's papers: RL is spreading from LLMs into the rest of generative AI. LLaDA2.1 brings RL to text diffusion models. WorldCompass applies RL to video world models. UI-Venus-1.5 trains GUI agents with online RL. iGRPO and Dr. SCI push the frontier of reasoning RL. If you build generative AI products, regardless of modality, RL post-training is becoming table stakes. It is time to put GRPO/PPO engineering experience on the technical roadmap.
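For readers new to GRPO, its core trick is small enough to show in a few lines: sample a group of responses for the same prompt, score each one, and use the group's own mean and standard deviation as the baseline instead of a learned value model. The sketch below covers only that advantage computation; the function name, the `eps` value, and the choice of population standard deviation are assumptions, and implementations differ on these details.

```python
import statistics


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against its own sampling group.

    rewards: scalar rewards for several responses to the same prompt.
    Returns (reward - group mean) / (group std + eps) for each response.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Hypothetical group of four sampled responses, two of which passed a check.
print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
```

Responses that beat their group get a positive advantage and the rest get a negative one. When every response in a group scores the same, all advantages are zero and that prompt contributes no gradient, which is one reason reward design and prompt difficulty matter so much in practice.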