Today's Overview

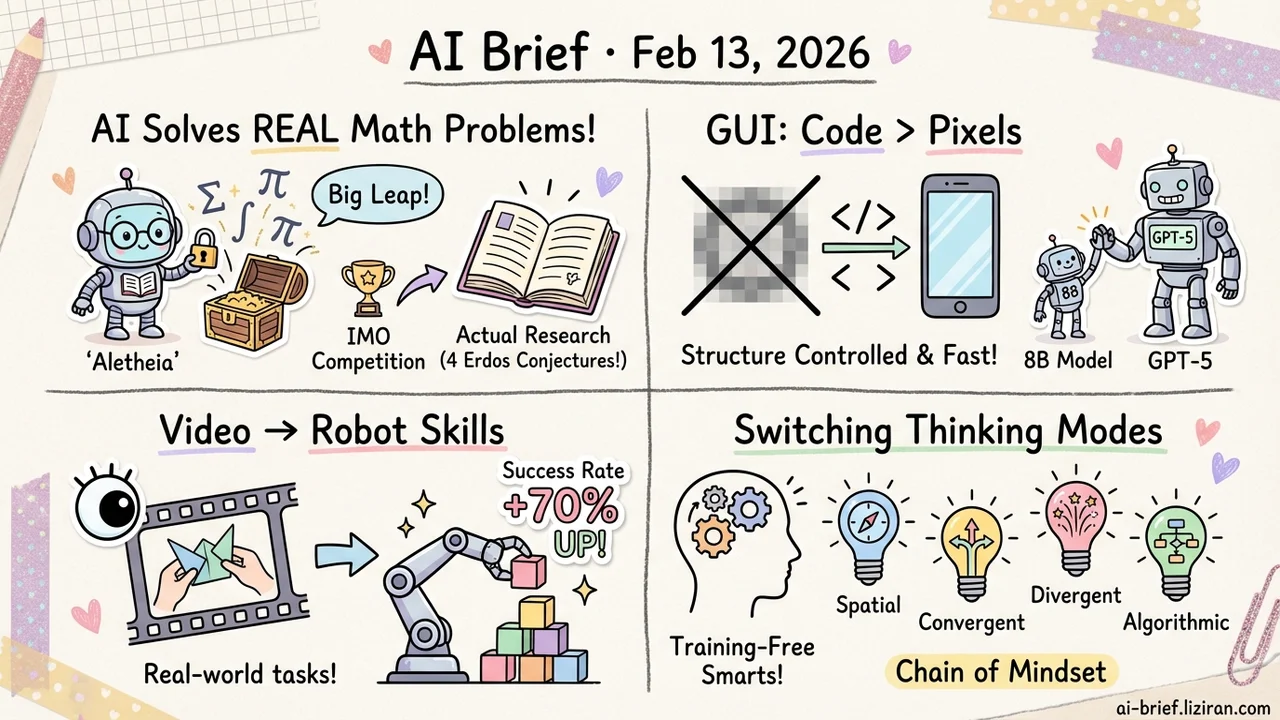

- AI just solved real open problems in mathematics for the first time. Google DeepMind's Aletheia agent autonomously cracked 4 unsolved questions from the Erdős Conjectures database.

- GUI world models don't need pixel-level prediction. Code2World turns next-state forecasting into code generation — an 8B model rivals GPT-5.

- Learning control policies straight from video. VideoWorld 2 improves task success rate by up to 70% on real-world handcraft tasks, and the knowledge transfers to robot manipulation.

- A training-free reasoning framework that switches cognitive modes per step. Chain of Mindset beats the strongest baseline by nearly 5% across six benchmarks.

Featured

01 | AI for Science | AI Is Doing Math Research Now, Not Just Math Homework

Hitting IMO gold-medal level is old news. The leap from "solving competition problems" to "doing actual research" is a different beast — it requires literature search, long-horizon proof construction, and exploration in unknown spaces.

Google DeepMind's Aletheia is a math research agent built on an enhanced version of Gemini Deep Think. Its core loop is generate-verify-revise, end-to-end, with intensive tool use. The headline results: a research paper entirely produced by AI (computing structure constants in arithmetic geometry), a human-AI collaborative proof on particle system bounds, and a semi-autonomous sweep of 700 open problems in the Erdős Conjectures database, 4 of which were solved autonomously.
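To make the generate-verify-revise loop concrete, here is a minimal control-flow sketch. It is not Aletheia's implementation: the `generate`, `verify`, and `revise` callables, the `Verdict` type, and the `max_rounds` budget are hypothetical stand-ins for what would be model calls and tool use in the real system. The toy usage swaps in an integer search so the loop can actually run.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    """Hypothetical verifier output: pass/fail plus a critique for the reviser."""
    ok: bool
    feedback: str = ""


def generate_verify_revise(
    problem: str,
    generate: Callable[[str], str],
    verify: Callable[[str, str], Verdict],
    revise: Callable[[str, str, str], str],
    max_rounds: int = 5,
) -> Optional[str]:
    """Return the first candidate that passes verification, or None if the budget runs out."""
    candidate = generate(problem)
    for round_idx in range(max_rounds):
        verdict = verify(problem, candidate)
        if verdict.ok:
            return candidate
        if round_idx + 1 < max_rounds:
            # Feed the verifier's critique into the next attempt.
            candidate = revise(problem, candidate, verdict.feedback)
    return None


# Toy usage (hypothetical): search for an integer whose square is 49.
def toy_generate(problem: str) -> str:
    return "1"


def toy_verify(problem: str, candidate: str) -> Verdict:
    ok = int(candidate) ** 2 == 49
    return Verdict(ok, "" if ok else f"{candidate}^2 != 49")


def toy_revise(problem: str, candidate: str, feedback: str) -> str:
    return str(int(candidate) + 1)


print(generate_verify_revise("x^2 = 49", toy_generate, toy_verify, toy_revise, max_rounds=10))  # prints 7
```

The point of the structure is that the verifier, not the generator, decides when to stop; in a research setting the verifier is where literature lookups and proof checking would plug in.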

The team also proposes a framework for grading autonomy and novelty in AI-assisted mathematical results. That they felt the need to formalize this says everything about where things are headed. The capability boundary for AI agents is shifting from "solve known problems" to "explore unknown ones."

Key takeaways:
- The jump from competition-level to research-level reasoning depends on tool use and long-horizon verification loops
- 4 genuinely open math problems solved by AI: real academic results, not benchmark numbers
- Proposed autonomy/novelty grading standards signal this will become routine

Source: Towards Autonomous Mathematics Research

02 | Agent | GUI Agents Need to Predict What Happens Next, and Pixels Won't Cut It

For a GUI agent to be useful, it needs to anticipate the result of each action — click this button, what does the screen look like? Existing approaches either describe the next state in text (not precise enough) or generate it pixel by pixel (structurally uncontrollable).

Code2World reframes the problem: instead of predicting pixels, generate the HTML code that renders the next screen. The team built a visual-feedback correction pipeline to translate 80K+ GUI trajectories into high-fidelity HTML training data, then applied render-aware RL — using the actual rendered output as the reward signal.
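The idea of scoring the rendered output, rather than the raw HTML text, can be illustrated with a toy reward function. This sketch assumes a hypothetical rendering step has already turned the predicted HTML into a grayscale pixel grid; it then compares that grid with the ground-truth next screen using mean absolute pixel difference. That metric is a stand-in chosen for illustration, not the reward the Code2World authors use.

```python
from typing import List

Image = List[List[float]]  # hypothetical grayscale pixels in [0, 1], row-major


def render_reward(rendered: Image, target: Image) -> float:
    """Toy render-aware reward: 1 - mean absolute pixel difference.

    Returns a value in [0, 1]; 1.0 means the rendered prediction matches the
    ground-truth screenshot exactly. A shape mismatch scores 0.0, so a
    prediction that renders at the wrong size is penalized outright.
    """
    if len(rendered) != len(target) or any(
        len(r_row) != len(t_row) for r_row, t_row in zip(rendered, target)
    ):
        return 0.0
    n_pixels = sum(len(row) for row in target)
    if n_pixels == 0:
        return 0.0
    total = sum(
        abs(a - b)
        for r_row, t_row in zip(rendered, target)
        for a, b in zip(r_row, t_row)
    )
    return 1.0 - total / n_pixels


target = [[0.0, 1.0], [1.0, 0.0]]
print(render_reward(target, target))                    # prints 1.0
print(render_reward([[0.0, 1.0], [1.0, 1.0]], target))  # prints 0.75
```

Because the reward is computed on what the code renders to, two HTML strings that differ textually but look identical on screen receive the same score, which is the property that makes rendered output a sensible RL signal here.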

The 8B model matches GPT-5 and Gemini-3-Pro-Image on UI prediction. More practically, plugging Code2World into Gemini-2.5-Flash for lookahead prediction boosts AndroidWorld navigation success rate by 9.5%. Code is open-source.
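Using a world model for lookahead generally follows one pattern: for each candidate action, ask the world model what the next screen would be, score that predicted screen, and act on the best one. The sketch below shows that pattern in the abstract. `lookahead_select`, `predict_next`, `score`, and the string-valued screen states are hypothetical placeholders; this is not necessarily how Code2World was wired into Gemini-2.5-Flash.

```python
from typing import Callable, Sequence, TypeVar

State = TypeVar("State")
Action = TypeVar("Action")


def lookahead_select(
    state: State,
    candidates: Sequence[Action],
    predict_next: Callable[[State, Action], State],  # the world model
    score: Callable[[State], float],                 # how good a predicted state looks
) -> Action:
    """Pick the candidate action whose predicted next state scores highest."""
    if not candidates:
        raise ValueError("need at least one candidate action")
    # One world-model query per candidate; max() keeps the earliest on ties.
    return max(candidates, key=lambda a: score(predict_next(state, a)))


# Toy usage (hypothetical): screens are strings and the goal is the settings page.
transitions = {
    ("home", "tap_settings"): "settings",
    ("home", "tap_camera"): "camera",
}
pick = lookahead_select(
    "home",
    ["tap_camera", "tap_settings"],
    predict_next=lambda s, a: transitions.get((s, a), s),
    score=lambda s: 1.0 if s == "settings" else 0.0,
)
print(pick)  # prints tap_settings
```

The agent never executes the losing action; the world model absorbs the cost of trying it, which is why better next-state prediction can translate into higher navigation success.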

Key takeaways:
- UI prediction shifts from pixel generation to code generation: structurally controllable and higher fidelity
- Rendered output as RL reward is a clever closed-loop training design
- 8B model, open-source, and directly integrable as a plug-in

Source: Code2World: A GUI World Model via Renderable Code Generation

03 | Robotics | Can Manipulation Knowledge From YouTube Transfer to Robots?

Learning to act by watching video sounds compelling but is brutally hard in practice: actions are implicit, and visual appearance varies wildly across scenes.

VideoWorld 2 tackles this by decoupling action dynamics from visual appearance. A pretrained video diffusion model handles appearance; a dedicated latent dynamics model (dLDM) learns only compact, task-relevant action encodings. These encodings are then modeled autoregressively for policy learning and long-horizon planning.
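A toy illustration of the two-stage idea (compact action codes first, then an autoregressive model over them) may help. Everything here is an assumption for illustration only: frames are short feature vectors, "appearance" is a constant offset between videos, the "dynamics model" just snaps each frame-to-frame difference to the nearest entry of a fixed codebook, and the autoregressive model is a count-based bigram. The real dLDM is a learned neural model working alongside a video diffusion model.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Sequence

Vec = Sequence[float]


def quantize_delta(prev: Vec, nxt: Vec, codebook: Sequence[Vec]) -> int:
    """Map the change between two frames to the index of the nearest codebook vector.

    Encoding the difference, not the frames, discards static appearance and
    keeps only what moved: a toy stand-in for a learned latent dynamics model.
    """
    delta = [b - a for a, b in zip(prev, nxt)]
    return min(
        range(len(codebook)),
        key=lambda i: sum((d - c) ** 2 for d, c in zip(delta, codebook[i])),
    )


def encode_video(frames: Sequence[Vec], codebook: Sequence[Vec]) -> List[int]:
    """Turn a frame sequence into a sequence of discrete action codes."""
    return [quantize_delta(a, b, codebook) for a, b in zip(frames, frames[1:])]


def fit_bigram(code_seqs: Sequence[Sequence[int]]) -> Dict[int, Counter]:
    """Count-based autoregressive model over codes: P(next code | previous code)."""
    counts: Dict[int, Counter] = defaultdict(Counter)
    for seq in code_seqs:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next_code(model: Dict[int, Counter], prev: int) -> int:
    """Greedy autoregressive step: the most frequent successor of `prev`."""
    if not model.get(prev):
        raise KeyError(f"code {prev} never seen as a predecessor")
    return model[prev].most_common(1)[0][0]


# Toy usage: two videos with different "appearance" (offset) but the same motion.
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # 0: still, 1: right, 2: up
video_a = [[0.0, 0.0], [1.0, 0.0], [1.0, 1.0], [2.0, 1.0]]
video_b = [[5.0, 5.0], [6.0, 5.0], [6.0, 6.0], [7.0, 6.0]]
print(encode_video(video_a, codebook))  # prints [1, 2, 1]
model = fit_bigram([encode_video(video_a, codebook), encode_video(video_b, codebook)])
print(predict_next_code(model, 1))      # prints 2
```

Both videos map to the same code sequence despite looking different, which is the toy version of why separating dynamics from appearance lets action knowledge transfer across scenes.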

On real-world handcraft tasks — the kind where previous video generation and latent dynamics models reliably fail — task success rate improves by up to 70%. The cross-domain transfer is the real story: manipulation knowledge learned from the Open-X dataset significantly boosts performance on CALVIN robot manipulation tasks. Code and models will be open-sourced.

Key takeaways:
- Decoupling dynamics from appearance is the key design for learning control from unlabeled video
- Up to 70% success rate improvement on real handcraft tasks, not simulated environments
- Cross-domain transfer to robot manipulation validates generality

Source: VideoWorld 2: Learning Transferable Knowledge from Real-world Videos

04 | Reasoning | Different Reasoning Steps Need Different Thinking Modes

Humans naturally switch cognitive modes when solving complex problems — spatial visualization, divergent brainstorming, logical convergence, algorithmic execution. Different steps engage different mental faculties. But existing LLM reasoning methods, including all CoT variants, apply the same mode uniformly across every step.

Chain of Mindset (CoM) is a training-free agentic framework that decomposes reasoning into four heterogeneous cognitive modes (Spatial, Convergent, Divergent, Algorithmic). A Meta-Agent dynamically selects the optimal mode based on the evolving reasoning state, with a bidirectional context gate controlling information flow between modules.
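The per-step control flow can be sketched as a dispatcher: at each step a selector (standing in for the Meta-Agent) picks one of the four modes, the chosen module sees only gated context, and only gated output is written back to the shared state. The gating rules (a recent-notes window going in, truncation coming out), the rule that a Convergent step commits the answer, and every function name below are hypothetical simplifications; in CoM the selector and modules are LLM calls, not keyword rules or lambdas.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List


class Mode(Enum):
    SPATIAL = "spatial"
    CONVERGENT = "convergent"
    DIVERGENT = "divergent"
    ALGORITHMIC = "algorithmic"


@dataclass
class ReasoningState:
    question: str
    notes: List[str] = field(default_factory=list)  # shared context across steps
    answer: str = ""


def gate_in(state: ReasoningState, window: int = 2) -> List[str]:
    """Inbound gate (hypothetical rule): a module sees only the most recent notes."""
    return state.notes[-window:]


def gate_out(output: str, max_chars: int = 80) -> str:
    """Outbound gate (hypothetical rule): cap what a module writes back."""
    return output[:max_chars]


def run_chain(
    state: ReasoningState,
    select_mode: Callable[[ReasoningState], Mode],  # stands in for the Meta-Agent
    modules: Dict[Mode, Callable[[str, List[str]], str]],
    max_steps: int = 4,
) -> List[Mode]:
    """Each step: choose a mode, run that module on gated context, store gated output."""
    trace: List[Mode] = []
    for _ in range(max_steps):
        if state.answer:
            break
        mode = select_mode(state)
        trace.append(mode)
        state.notes.append(gate_out(modules[mode](state.question, gate_in(state))))
        if mode is Mode.CONVERGENT:
            state.answer = state.notes[-1]  # toy rule: convergence commits an answer
    return trace


# Toy usage (hypothetical): brainstorm, then compute, then commit.
def toy_select_mode(state: ReasoningState) -> Mode:
    if not state.notes:
        return Mode.DIVERGENT
    if len(state.notes) < 2:
        return Mode.ALGORITHMIC
    return Mode.CONVERGENT


modules = {
    Mode.DIVERGENT: lambda q, ctx: "candidates: 12, 15",
    Mode.ALGORITHMIC: lambda q, ctx: "check: 3 * 4 = 12",
    Mode.CONVERGENT: lambda q, ctx: "answer: 12",
    Mode.SPATIAL: lambda q, ctx: "no layout needed",
}
state = ReasoningState("What is 3 * 4?")
trace = run_chain(state, toy_select_mode, modules)
print([m.value for m in trace])  # prints ['divergent', 'algorithmic', 'convergent']
```

Because the mode is re-selected from the evolving state at every step, the same question can take a different path through the modules than another question would, which is the contrast with uniform CoT.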

Across six benchmarks spanning math, code, scientific QA, and spatial reasoning, CoM beats the strongest baseline by 4.96% on Qwen3-VL-32B and 4.72% on Gemini-2.0-Flash while maintaining reasoning efficiency. No training required — plug and play.

Key takeaways:
- "Different steps need different thinking modes" is now quantitatively validated
- Training-free agentic framework, directly applicable to existing models
- Consistent gains across six diverse benchmarks, not a single-task trick

Source: Chain of Mindset: Reasoning with Adaptive Cognitive Modes

Also Worth Noting

Today's Observation

The standout trend today is a cluster of world-model papers: Code2World for GUI state prediction, VideoWorld 2 for video dynamics modeling, Olaf-World for cross-scene action transfer, and Agent World Model for synthetic environment generation. Different methods, same thesis: agents that operate in the real world need an internal simulator to anticipate the consequences of their actions. If you're building agent products, world models are worth tracking seriously. They're transitioning from academic concept to core agent infrastructure.