Today's Overview

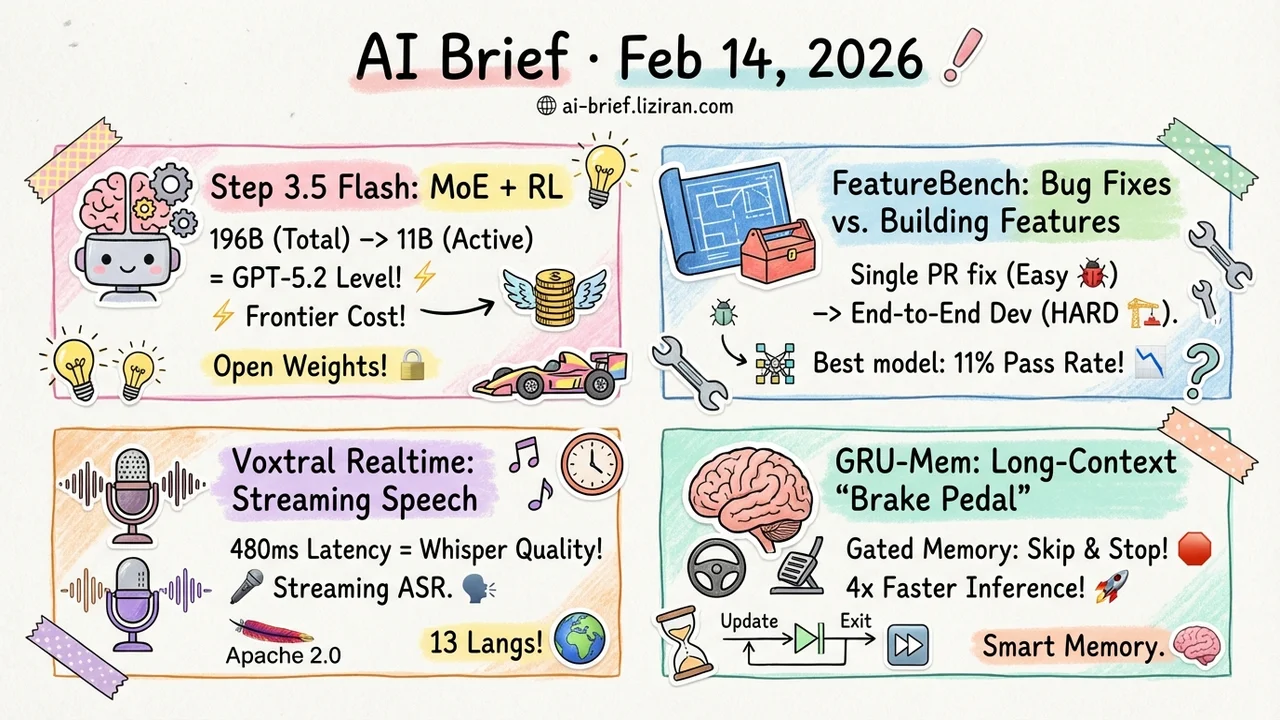

- 196B parameters, only 11B active, and it matches GPT-5.2 xHigh on reported math, coding, and agent benchmarks. Step 3.5 Flash uses MoE + RL to push agent efficiency to a new frontier, with open weights.

- Coding agents can fix bugs, but can they build features? FeatureBench upgrades evaluation from single-PR fixes to end-to-end feature development. The best model passes just 11%.

- Mistral releases Voxtral Realtime, a streaming speech recognition model that matches Whisper's offline transcription quality at 480ms latency. Apache 2.0.

- Long-context reasoning gets a "brake pedal." GRU-Mem uses gated mechanisms so agents know when to update memory and when to stop — up to 4x faster inference.

Featured

01 Agent 11B Active Parameters, Frontier-Level Agent Tasks

The hardest part of deploying complex agents isn't intelligence — it's inference cost. Multi-turn interactions, tool calls, code execution — every step burns tokens. Step 3.5 Flash packs frontier-level agent intelligence into a deployable cost envelope: 196B total parameters with only 11B active via MoE.

Two design choices matter: sliding-window and full attention interleaved at a 3:1 ratio cut multi-turn latency, and Multi-Token Prediction (3 tokens at once) accelerates generation. Training uses a hybrid RL framework that combines verifiable signals with preference feedback, keeping self-improvement stable at scale even when training on off-policy data.
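To make the 3:1 pattern concrete, here is a minimal sketch of a per-layer attention schedule and the rough attention cost of each layer type. The layer count, the window size, and the placement of the full-attention layer at the end of each group of four are illustrative assumptions, not details taken from the Step 3.5 Flash report.

```python
from typing import List


def attention_schedule(num_layers: int, sliding_per_full: int = 3) -> List[str]:
    """Label each layer: `sliding_per_full` sliding-window layers, then one full layer.

    Placing the full-attention layer last in each group is an assumption.
    """
    period = sliding_per_full + 1
    return ["full" if (i + 1) % period == 0 else "sliding" for i in range(num_layers)]


def attended_pairs(seq_len: int, window: int, kind: str) -> int:
    """Count the (query, key) pairs a causal attention layer scores."""
    if kind == "full":
        # Query t sees keys 0..t, so the total is 1 + 2 + ... + seq_len.
        return seq_len * (seq_len + 1) // 2
    # Sliding window: query t sees at most `window` most recent keys.
    return sum(min(t + 1, window) for t in range(seq_len))


# Hypothetical 8-layer stack, used only for illustration.
schedule = attention_schedule(8)
full_cost = attended_pairs(seq_len=8, window=2, kind="full")
sliding_cost = attended_pairs(seq_len=8, window=2, kind="sliding")
```

Full-attention cost grows quadratically with context length while sliding-window cost grows linearly, so limiting full attention to one layer in four is one plausible reason long multi-turn contexts would get cheaper.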

Hard numbers: IMO-AnswerBench 85.4%, LiveCodeBench-v6 86.4%, tau2-Bench 88.2% — matching GPT-5.2 xHigh and Gemini 3.0 Pro. For teams building agent products, "frontier capability" and "affordable inference" are no longer mutually exclusive.

Key takeaways:

- MoE architecture makes frontier performance compatible with low inference cost.
- MTP-3 and hybrid attention are purpose-built for multi-turn agent interaction.
- Open weights, ready to deploy.

Source: Step 3.5 Flash: Open Frontier-Level Intelligence with 11B Active Parameters

02 Code Intelligence Fixing Bugs ≠ Building Features

A 74% solve rate on SWE-bench sounds impressive, but the task is basically "here's a bug in a PR, fix it": clear scope, limited changes. Real feature development is different. It spans multiple commits, touches cross-file dependencies, and must not break anything else in the process.

FeatureBench (ICLR 2026) tests exactly this. Starting from unit tests in real repositories, it traces dependency graphs to construct feature-level tasks spanning multiple commits and PRs. All 200 tasks come with executable test environments. The results are stark: Claude 4.5 Opus goes from 74.4% on SWE-bench to just 11.0% on FeatureBench. The task generation pipeline is fully automated, continuously buildable from new repos, and naturally resistant to data leakage.
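The idea of starting from unit tests and tracing dependencies can be sketched as a graph walk. This is a toy illustration, not FeatureBench's actual pipeline: the call graph, the function names, and the `trace_dependencies` helper are all hypothetical.

```python
from collections import deque
from typing import Dict, List, Set


def trace_dependencies(graph: Dict[str, List[str]], roots: List[str]) -> Set[str]:
    """Breadth-first walk from test functions to every symbol they reach."""
    seen: Set[str] = set()
    queue = deque(roots)
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        queue.extend(graph.get(node, []))
    # The tests themselves are the spec, not part of the feature to implement.
    return seen - set(roots)


# Hypothetical call graph: each key calls the symbols in its list.
call_graph = {
    "test_export_csv": ["export_csv"],
    "test_export_json": ["export_json"],
    "export_csv": ["serialize_rows", "open_output"],
    "export_json": ["serialize_rows"],
    "serialize_rows": [],
    "open_output": [],
    "unrelated_helper": [],
}

feature_symbols = trace_dependencies(
    call_graph, ["test_export_csv", "test_export_json"]
)
```

In this toy setup, the symbols reached from the tests form the feature an agent would have to implement, and the tests serve as the executable check.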

Key takeaways:

- The capability cliff from "fix a bug" to "build a feature" is now quantified.
- Automated task construction keeps the benchmark evergreen and leak-proof.
- Teams investing in coding agents need to rethink their evaluation standards.

Source: FeatureBench: Benchmarking Agentic Coding for Complex Feature Development

03 Multimodal Streaming ASR Without Sacrificing Quality

Speech-to-text has always forced a tradeoff: go real-time by chunking audio into an offline model (quality drops), or wait for the full recording (latency spikes). Voxtral Realtime is Mistral's natively streaming ASR model — not an offline model chopped into chunks, but end-to-end trained on the alignment between audio and text streams.

The architecture uses a Delayed Streams Modeling framework with a causal audio encoder and Ada RMS-Norm for latency conditioning, pretrained across 13 languages. Core result: Whisper-level transcription quality at 480ms latency. Apache 2.0 license. For teams building voice applications, the old "pick one: real-time or accurate" problem just got solved.
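A rough way to picture delayed-stream alignment: audio and text advance on the same frame clock, but the text stream is shifted later by a fixed number of frames, so each token is predicted with a little audio lookahead. The sketch below is illustrative only. The 80 ms frame duration, the padding and silence symbols, and the helper names are assumptions, not details of Voxtral Realtime.

```python
from typing import List, Optional, Tuple

PAD = "<pad>"          # hypothetical "no token at this step" symbol
SILENCE = "<silence>"  # hypothetical filler frame used to flush the tail


def delayed_pairs(
    audio: List[str], text: List[Optional[str]], delay_frames: int
) -> List[Tuple[str, str]]:
    """Pair the audio frame at step t with the text token from step t - delay_frames."""
    if len(audio) != len(text):
        raise ValueError("audio and text must share one frame clock")
    n = len(audio)
    pairs = []
    for t in range(n + delay_frames):
        frame = audio[t] if t < n else SILENCE
        src = t - delay_frames
        token = text[src] if src >= 0 else None
        pairs.append((frame, token if token is not None else PAD))
    return pairs


def latency_ms(delay_frames: int, frame_ms: int) -> int:
    """Algorithmic latency implied by a fixed frame delay."""
    return delay_frames * frame_ms


pairs = delayed_pairs(
    audio=["a0", "a1", "a2", "a3"],
    text=["hi", None, "there", None],
    delay_frames=2,
)
# With an assumed 80 ms frame, a 6-frame delay corresponds to 480 ms.
assumed_latency = latency_ms(delay_frames=6, frame_ms=80)
```

A larger delay gives the model more future audio per token at the cost of latency, which is presumably the tradeoff that latency conditioning exposes as a tunable setting.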

Key takeaways:

- Native streaming training vs. chunked offline models: the difference is alignment quality.
- 480ms latency + Whisper-grade accuracy makes real-time use cases viable.
- Apache 2.0, 13 languages, low deployment barrier.

Source: Voxtral Realtime

04 Reasoning The Most Important Skill for Long-Context Agents: Knowing When to Stop

Long-context reasoning agents need to process information chunk by chunk while maintaining memory. Naive recurrent memory has two failure modes: stuffing irrelevant content into memory (memory bloat), and continuing to loop after finding the answer (wasted compute).

GRU-Mem borrows from GRU (Gated Recurrent Units) and adds two gates to text memory — an "update gate" that skips memory writes when a chunk contains no useful evidence, and an "exit gate" that terminates the loop once enough evidence is collected. Both gates are trained end-to-end via RL with dedicated reward signals. Result: outperforms MemAgent across multiple long-context reasoning tasks, with inference speedups up to 4x.
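The control flow of the two gates can be sketched as a simple loop. In GRU-Mem the gate decisions come from an RL-trained model. Here a hypothetical keyword-matching `toy_step` stands in for that policy, so this shows only the loop structure, not the method itself.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class GateDecision:
    should_update: bool  # update gate: write to memory for this chunk?
    should_exit: bool    # exit gate: is the collected evidence sufficient?
    new_memory: str


def run_gated_memory(
    chunks: List[str],
    step: Callable[[str, str], GateDecision],
    memory: str = "",
) -> Tuple[str, int]:
    """Read chunks in order, updating memory selectively and stopping early."""
    chunks_read = 0
    for chunk in chunks:
        chunks_read += 1
        decision = step(memory, chunk)
        if decision.should_update:
            memory = decision.new_memory
        if decision.should_exit:
            break
    return memory, chunks_read


def toy_step(memory: str, chunk: str) -> GateDecision:
    """Hypothetical stand-in policy: evidence is the keywords 'alpha' and 'beta'."""
    needed = ("alpha", "beta")
    found = [k for k in needed if k in chunk and k not in memory]
    new_memory = (memory + " " + " ".join(found)).strip() if found else memory
    done = all(k in new_memory for k in needed)
    return GateDecision(should_update=bool(found), should_exit=done, new_memory=new_memory)


chunks = ["noise", "alpha here", "more noise", "beta here", "never read", "never read"]
memory, chunks_read = run_gated_memory(chunks, toy_step)
```

In this toy run the loop stops after the fourth of six chunks and memory is written only twice, which mirrors where the reported speedup comes from: skipped writes and early exit.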

Key takeaways:

- More memory isn't better: selective updates and timely exits are what matter for long-context reasoning.
- GRU-style gating transferred from sequence modeling to agent memory management is an elegant move.
- The 4x speedup comes from skipping irrelevant chunks and early stopping, not lossy compression.

Source: When to Memorize and When to Stop: Gated Recurrent Memory for Long-Context Reasoning

Also Worth Noting

Today's Observation

Two signals worth reading together: Step 3.5 Flash matches frontier models on agent tasks, while FeatureBench and GameDevBench say "not so fast: shift the evaluation axis and scores collapse." Agent capability and evaluation standards are leveling up in tandem. Models are getting better, and the field is finding more things they can't do. Teams building coding agent products should pay particular attention: evaluation is moving from single-PR fixes to end-to-end feature development and from pure code to multimodal assets, and these directions, which better reflect real workflows, are redefining what "good enough" means.