Today's Overview

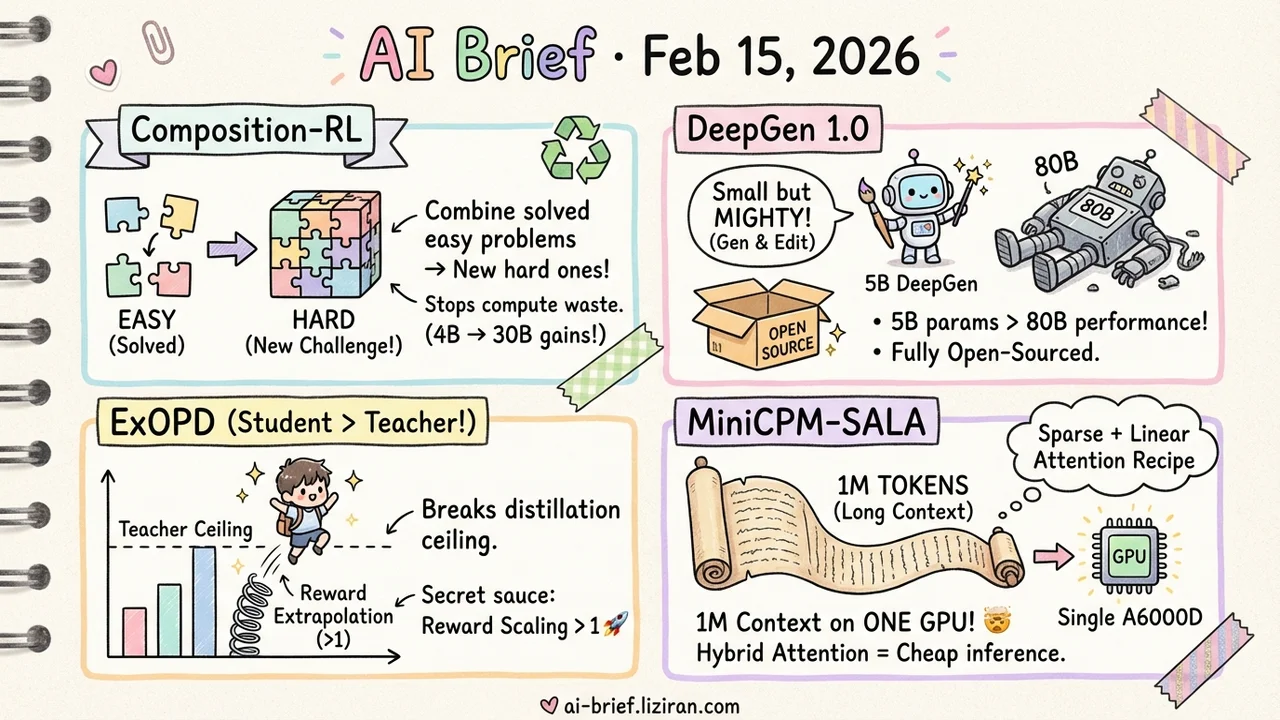

- Combine solved easy problems into new hard ones. Composition-RL turns wasted RLVR training samples into effective composite challenges, with consistent gains from 4B to 30B models.

- 5B parameters doing the job of 80B. DeepGen 1.0 beats much larger models, including an 80B generator and a 27B editor, in both image generation and editing, with code and weights fully open-sourced.

- Students can surpass their teachers. ExOPD breaks the distillation performance ceiling through "reward extrapolation," and multi-domain expert knowledge can be merged back into a single small model.

- 1M-token context on a single A6000D. MiniCPM-SALA's sparse + linear attention hybrid cuts long-context inference cost to a third.

Featured

01 [Training] Run Out of RL Data? Combine Easy Problems Into Hard Ones

RLVR (reinforcement learning with verifiable rewards) works well for training reasoning ability, but it has a practical limit: the pool of training problems is finite. As pass rates climb, a growing share of problems is solved on every rollout and stops providing a learning signal, so the compute spent on them is wasted. Previous approaches prioritize hard problems, but that leaves the easy ones sitting idle.
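To make "no learning signal" concrete: in group-based RLVR trainers such as GRPO (an assumption here; the summary does not name Composition-RL's trainer), each rollout's advantage is its reward normalized against the other rollouts for the same prompt. A prompt that every rollout solves yields identical rewards and therefore zero advantage, so it contributes no gradient. A minimal sketch, using the population standard deviation (implementations vary on this detail):

```python
from statistics import mean, pstdev

def group_advantages(rewards, eps=1e-6):
    """GRPO-style advantage: each rollout's reward normalized against its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

# 8 rollouts on a problem the model now always solves: every reward is 1.
solved = group_advantages([1.0] * 8)
# 8 rollouts on a problem still at a 50% pass rate.
learning = group_advantages([1, 0, 1, 0, 0, 1, 1, 0])

print(solved)    # all zeros, so this prompt adds nothing to the policy gradient
print(learning)  # roughly +1 / -1, a useful signal
```

The same arithmetic explains why a problem the model never solves is also uninformative, which is why earlier work focused on problems in the middle of the difficulty range.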

Composition-RL takes a direct approach: automatically combine several easy problems into a single composite challenge. Each sub-problem is independently verifiable, so what was essentially dead data becomes new training samples at no labeling cost. The authors report consistent improvements across models from 4B to 30B parameters, and a curriculum variant that gradually increases composition depth performs better still.

The practical bonus: this naturally supports cross-domain composition. Combine math and code problems in the same training sample, and the model's cross-domain reasoning benefits too.
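A minimal sketch of the composition idea, under stated assumptions: problems are simply concatenated as numbered parts, the reward is 1 only when every sub-answer matches its gold answer, and depth follows a simple step-based curriculum. `Problem`, `compose`, `composite_reward`, and `curriculum_depth` are hypothetical helpers, not the paper's code, and the actual composition procedure may differ (for example, by chaining sub-problems together). Mixing math and code items in one pool gives the cross-domain variant.

```python
import random
from dataclasses import dataclass

@dataclass
class Problem:
    prompt: str
    answer: str
    domain: str  # e.g. "math" or "code"

def compose(problems, depth, rng):
    """Hypothetical composer: bundle `depth` already-solved problems into one prompt."""
    picked = rng.sample(problems, depth)
    parts = [f"Part {i + 1}: {p.prompt}" for i, p in enumerate(picked)]
    prompt = "Solve every part and give each answer on its own line.\n" + "\n".join(parts)
    return prompt, [p.answer for p in picked]

def composite_reward(model_answers, gold_answers):
    """Binary verifiable reward: 1.0 only if every sub-answer matches its gold answer."""
    if len(model_answers) != len(gold_answers):
        return 0.0
    return float(all(m.strip() == g.strip() for m, g in zip(model_answers, gold_answers)))

def curriculum_depth(step, total_steps, max_depth=3):
    """Hypothetical schedule: composition depth grows from 2 up to max_depth over training."""
    n_levels = max_depth - 1  # number of depths in 2..max_depth
    return 2 + min(n_levels - 1, n_levels * step // total_steps)

rng = random.Random(0)
pool = [  # a cross-domain pool: math and code items mixed together
    Problem("What is 7 * 8?", "56", "math"),
    Problem("What does len('abc') return in Python?", "3", "code"),
    Problem("What is 2 ** 10?", "1024", "math"),
]
prompt, gold = compose(pool, curriculum_depth(step=900, total_steps=1000), rng)
print(prompt)
print(composite_reward(gold, gold))
```

An all-or-nothing reward keeps the composite hard even when each part is easy; a partial-credit reward (fraction of parts correct) is an obvious alternative, with a denser but weaker signal.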

Key takeaways:

- Solves the late-stage RLVR problem where easy problems pile up and waste compute
- Automatic composition with verifiable answers, so no manual problem creation is needed
- Cross-domain composition is a free bonus, covering multiple capabilities in one training framework

Source: Composition-RL: Compose Your Verifiable Prompts for Reinforcement Learning of Large Language Models

02 [Image Gen] How Does 5B Beat 80B?

Unified image generation + editing models are the trend, but current approaches demand 10B+ parameters, making training and deployment expensive. DeepGen 1.0 has just 5B parameters yet delivers strong results in both generation and editing: 28% ahead of the 80B HunyuanImage on WISE, 37% ahead of the 27B Qwen-Image-Edit on UniREditBench.

The core design is Stacked Channel Bridging — extracting hierarchical features from multiple VLM layers, fusing them with learnable "think tokens," and feeding structured reasoning guidance to the generative backbone. Training follows three stages: alignment pretraining, joint fine-tuning, then GRPO reinforcement learning with mixed rewards. Total training data: only ~50M samples.
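The bridging step can be pictured roughly as follows. This is a hedged sketch, not DeepGen's implementation: it assumes that stacking "channels" means concatenating each token's hidden states from several VLM layers into one wider vector, appends the learnable think tokens as extra positions, and uses a single linear projection into the generator's conditioning space. In the real model the think tokens may instead be processed inside the VLM, and the layer choice, dimensions, and projection here are all hypothetical.

```python
import random

def matmul(x, w):
    """x: [n][d_in], w: [d_in][d_out] -> [n][d_out]."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*w)] for row in x]

def stacked_channel_bridge(layer_states, think_tokens, proj):
    """
    layer_states: hidden states from several VLM layers, each [seq][d_vlm].
    think_tokens: learnable vectors, each of length len(layer_states) * d_vlm.
    proj: [len(layer_states) * d_vlm][d_gen] projection to the generator's condition space.
    """
    seq_len = len(layer_states[0])
    # Stack along the channel axis: token t becomes [h_layerA[t] ; h_layerB[t] ; ...].
    stacked = [sum((layer[t] for layer in layer_states), []) for t in range(seq_len)]
    # Append the think tokens as extra positions, then project every position.
    return matmul(stacked + think_tokens, proj)

rng = random.Random(0)
d_vlm, d_gen, seq, n_layers, n_think = 4, 6, 5, 3, 2
layers = [[[rng.gauss(0, 1) for _ in range(d_vlm)] for _ in range(seq)] for _ in range(n_layers)]
think = [[rng.gauss(0, 0.02) for _ in range(n_layers * d_vlm)] for _ in range(n_think)]
proj = [[rng.gauss(0, 0.1) for _ in range(d_gen)] for _ in range(n_layers * d_vlm)]

cond = stacked_channel_bridge(layers, think, proj)
print(len(cond), len(cond[0]))  # 7 6: five text positions plus two think tokens, each d_gen wide
```

Pulling from several depths gives the generator both low-level (early-layer) and semantic (late-layer) features, which is the usual motivation for multi-layer bridges over using only the VLM's last layer.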

Code, weights, and datasets are all open-sourced. For teams that want to build unified generation models but can't afford massive compute, this is a ready-made starting point.

Key takeaways:

- A 5B-parameter model outperforms much larger models (27B to 80B) in both generation and editing
- Three-stage training + GRPO reinforcement learning is the key recipe
- Fully open-sourced, lowering the barrier to unified multimodal generation

Source: DeepGen 1.0: A Lightweight Unified Multimodal Model for Advancing Image Generation and Editing

03 [Training] Can a Distilled Student Surpass Its Teacher? Yes, If You Extrapolate

The ceiling of model distillation is usually the teacher's performance: students can approach it but rarely exceed it. G-OPD (Generalized On-Policy Distillation) reframes distillation as dense KL-constrained RL and identifies a key lever: the reward scaling factor.
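One way to see why distillation is "dense KL-constrained RL" is a short derivation, offered as a reconstruction rather than the paper's exact notation. Assume on-policy distillation minimizes the student's reverse KL to the teacher $\pi_T$ on the student's own samples, and introduce a reference policy $\pi_{\text{ref}}$ (assumed here to be the initial student). Adding and subtracting $\log \pi_{\text{ref}}$ splits the objective into a dense per-token reward plus a KL penalty, and a scaling factor $\lambda$ on the reward term is the natural generalization:

$$
\begin{aligned}
-\mathcal{L}_{\text{OPD}}(\theta)
&= \mathbb{E}_{y\sim\pi_\theta}\Big[\textstyle\sum_t \log\pi_T(y_t\mid y_{<t}) - \log\pi_\theta(y_t\mid y_{<t})\Big] \\
&= \mathbb{E}_{y\sim\pi_\theta}\Big[\textstyle\sum_t r_t\Big] - \mathrm{KL}\big(\pi_\theta \,\|\, \pi_{\text{ref}}\big),
\qquad r_t = \log\pi_T(y_t\mid y_{<t}) - \log\pi_{\text{ref}}(y_t\mid y_{<t}) \\[4pt]
J_\lambda(\theta) &= \lambda\,\mathbb{E}_{y\sim\pi_\theta}\Big[\textstyle\sum_t r_t\Big] - \mathrm{KL}\big(\pi_\theta \,\|\, \pi_{\text{ref}}\big),
\qquad \pi^*_\lambda(y) \propto \pi_{\text{ref}}(y)^{\,1-\lambda}\,\pi_T(y)^{\lambda}
\end{aligned}
$$

The closed form for $\pi^*_\lambda$ is the standard optimum of KL-regularized reward maximization, $\pi^* \propto \pi_{\text{ref}}\exp(\lambda R)$ with $R(y)=\sum_t r_t = \log\pi_T(y)-\log\pi_{\text{ref}}(y)$, over an unconstrained policy class. At $\lambda = 1$ the optimum is the teacher itself, which is the usual ceiling; $\lambda > 1$ pushes further along the direction in which the teacher differs from the reference.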

Standard distillation treats the reward signal and KL constraint equally (scaling factor = 1). Crank it above 1 — what the authors call ExOPD (reward extrapolation) — and the student breaks through the teacher's performance ceiling. In a particularly useful scenario, merging knowledge from different domain-expert models back into the original student, ExOPD enables the student to surpass every domain expert simultaneously.
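A toy illustration of reward extrapolation, assuming the scaling factor $\lambda$ multiplies a reward $\log \pi_T - \log \pi_{\text{ref}}$ inside a KL-regularized objective. Over a small finite set of outputs, that objective's optimum is $\pi^* \propto \pi_{\text{ref}}^{1-\lambda}\pi_T^{\lambda}$ (a standard KL-regularized RL result), so the sketch computes it directly instead of training anything. `extrapolated_policy` and the three-output distributions are hypothetical.

```python
import math

def extrapolated_policy(p_ref, p_teacher, lam):
    """Optimum of  lam * E[log pT - log pref] - KL(pi || pref)  over a finite output set:
    pi*(y) is proportional to pref(y)^(1 - lam) * pT(y)^lam, normalized in log space."""
    logits = [(1 - lam) * math.log(r) + lam * math.log(t) for r, t in zip(p_ref, p_teacher)]
    m = max(logits)  # subtract the max for numerical stability
    w = [math.exp(z - m) for z in logits]
    total = sum(w)
    return [x / total for x in w]

# Toy outputs: index 0 is the correct answer, and the teacher already prefers it
# more strongly than the reference student does.
p_ref = [0.4, 0.3, 0.3]
p_teacher = [0.6, 0.2, 0.2]

for lam in (0.0, 1.0, 1.5, 2.0):
    pi = extrapolated_policy(p_ref, p_teacher, lam)
    print(lam, [round(p, 3) for p in pi])
```

With these numbers, $\lambda = 0$ returns the reference, $\lambda = 1$ returns the teacher exactly, and $\lambda > 1$ moves the probability on index 0 above the teacher's 0.6. The same mechanism amplifies any direction in which the teacher differs from the reference, including its mistakes, so $\lambda$ is worth sweeping rather than simply setting high.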

For teams doing model compression or knowledge fusion, this "scaling factor > 1" trick is well worth trying.

Key takeaways:

- Distillation = dense KL-constrained RL, and this unified view opens up a new tuning space
- Reward extrapolation (scaling factor > 1) makes student-surpasses-teacher possible
- Merging multi-domain expert knowledge back into a small model has strong practical value

Source: Learning beyond Teacher: Generalized On-Policy Distillation with Reward Extrapolation

04 [Architecture] 1M Tokens on a Single GPU: Long Context Doesn't Require a Cluster

Full-attention 8B models already hit memory walls at 256K tokens. 1M tokens? Not happening. MiniCPM-SALA (Tsinghua) mixes attention mechanisms: 1/4 of layers use sparse attention (InfLLM-V2) to preserve precise long-range modeling, 3/4 use linear attention (Lightning Attention) to cut global computation overhead, with hybrid positional encoding handling the different attention mechanisms.
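A rough sketch of the two ingredients in the 1:3 sparse-to-linear mix. `layer_schedule` is hypothetical: it places a sparse-attention layer every fourth layer, which matches the 1/4 : 3/4 split but not necessarily MiniCPM-SALA's actual placement, and the 32-layer depth is an assumption. `causal_linear_attention` is the plain, unnormalized form of linear attention written as a recurrence; Lightning Attention is an optimized linear-attention implementation and differs in details such as normalization, decay, and blockwise computation. What the sketch shows is that a linear layer carries a fixed-size state instead of a KV cache that grows with every token.

```python
def layer_schedule(n_layers, sparse_every=4):
    """Hypothetical 1:3 layout: every 4th layer uses sparse attention, the rest linear."""
    return ["sparse" if (i + 1) % sparse_every == 0 else "linear" for i in range(n_layers)]

def causal_linear_attention(qs, ks, vs):
    """Causal linear attention as a recurrence over the state S = sum_s k_s v_s^T.
    The state is d_k x d_v no matter how long the sequence gets, unlike a KV cache."""
    d_k, d_v = len(ks[0]), len(vs[0])
    state = [[0.0] * d_v for _ in range(d_k)]
    outputs = []
    for q, k, v in zip(qs, ks, vs):
        # Fold the current key/value pair into the running state (outer product).
        for i in range(d_k):
            for j in range(d_v):
                state[i][j] += k[i] * v[j]
        # Output o_t = q_t S_t, equal to sum over s <= t of (q_t . k_s) v_s.
        outputs.append([sum(q[i] * state[i][j] for i in range(d_k)) for j in range(d_v)])
    return outputs

sched = layer_schedule(32)
print(sched.count("sparse"), sched.count("linear"))  # 8 24
```

Dropping softmax is what lets the sum be carried forward as a running state; the sparse layers keep exact softmax attention over selected blocks, which is how the hybrid retains precise long-range retrieval.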

The practical part: you don't need to train from scratch. A continual training framework converts existing Transformer models into the hybrid architecture at just 25% of the from-scratch cost. On a single NVIDIA A6000D, 256K-token inference runs 3.5x faster than full attention, with support up to 1M tokens.
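Back-of-envelope arithmetic for the memory side of the argument. The config is hypothetical (a Llama-style 8B model with 32 layers, 8 grouped-query KV heads, head dimension 128, bf16 values), not MiniCPM-SALA's published one, and it assumes the sparse layers still store a full KV cache while the linear layers keep only a fixed-size state. Weights and activations are ignored.

```python
def kv_cache_gib(tokens, attn_layers, kv_heads=8, head_dim=128, bytes_per_value=2):
    """KV cache size in GiB: 2 (K and V) * layers * kv_heads * head_dim * bytes * tokens.
    Default config values are hypothetical, chosen to resemble a typical 8B GQA model."""
    return 2 * attn_layers * kv_heads * head_dim * bytes_per_value * tokens / 2**30

for tokens in (256 * 1024, 1024 * 1024):
    full = kv_cache_gib(tokens, attn_layers=32)   # every layer keeps a KV cache
    hybrid = kv_cache_gib(tokens, attn_layers=8)  # only the 1/4 sparse layers do
    print(f"{tokens:>8} tokens: full {full:.0f} GiB, hybrid {hybrid:.0f} GiB")
```

Under these assumptions a full-attention model needs 32 GiB of KV cache at 256K tokens and 128 GiB at 1M, while keeping the cache in only 8 of 32 layers brings 1M tokens down to 32 GiB.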

Key takeaways:

- 1:3 sparse + linear attention hybrid is a cost-effective recipe for long context
- Continual training from existing models costs only 25% of training from scratch
- Million-token context on a single GPU lowers the deployment bar for long documents and conversations

Source: MiniCPM-SALA: Hybridizing Sparse and Linear Attention for Efficient Long-Context Modeling

Also Worth Noting

Today's Observation

Three of today's papers attack the same problem: how to squeeze more signal from limited data in RL training. Composition-RL combines easy problems into composite challenges. ExOPD uses reward extrapolation to push distillation past the teacher ceiling. Length-Incentivized Exploration uses length rewards to encourage deeper exploration. Three paths, one goal: raising the marginal return of every training sample. Teams doing RL post-training should consider running all three head-to-head; Composition-RL's cross-domain composition paired with ExOPD's multi-expert merging looks especially promising, since their gains could compound.