Today's Overview

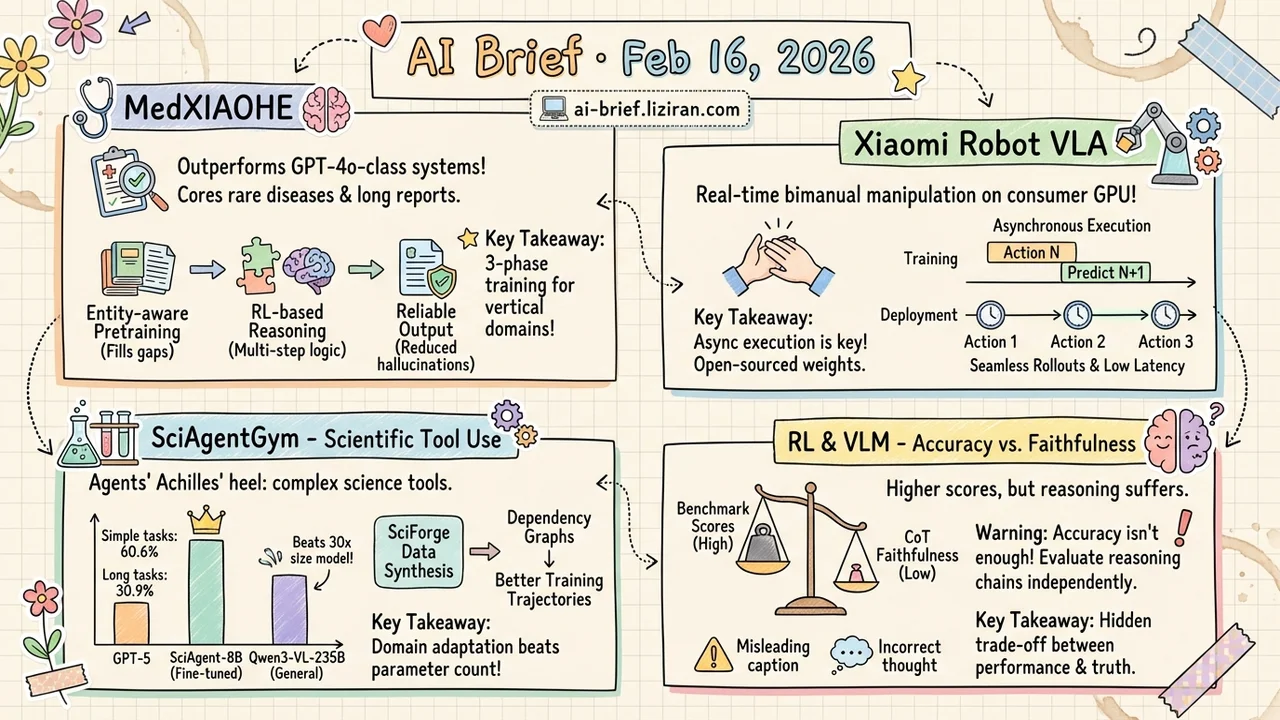

- A medical multimodal model now outperforms GPT-4o-class closed-source systems. MedXIAOHE chains entity-aware pretraining with RL-based reasoning to cover everything from rare diseases to long-form report generation.

- Xiaomi open-sources a robot VLA model that runs real-time bimanual manipulation on a consumer GPU. The key is asynchronous execution baked into training, not just deployment.

- Scientific tool use is agents' Achilles' heel. SciAgentGym stress-tests 1,780 domain tools — and an 8B fine-tuned model beats a 235B general-purpose one.

- RL fine-tuning boosts VLM benchmark scores, but chain-of-thought faithfulness degrades — surfacing a hidden accuracy-vs-reliability trade-off.

Featured

01 Multimodal What a Full-Stack Medical AI Looks Like

Medical multimodal models face a distinctive triple bind: broad knowledge coverage (thousands of rare diseases can't be missed), deep reasoning (complex diagnoses require multi-step logic), and reliable output (long reports must stay free of hallucinations). Previous models typically excelled at one or two of these. MedXIAOHE tackles them in stages.

First, entity-aware continual pretraining organizes heterogeneous medical corpora by entity, filling long-tail knowledge gaps. Then RL and tool-augmented training teach multi-step diagnostic reasoning with verifiable decision traces. Finally, user-preference alignment and evidence anchoring keep hallucinations in check.
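The write-up doesn't say exactly how corpora are organized by entity. Here is a minimal sketch of one common way to realize the idea, assuming documents have already been tagged with the medical entities they mention: bucket documents per entity, then sample entities with temperature-scaled weights so rare ones appear more often than their raw frequency would allow. The helper names, the `temperature` parameter, and the toy corpus are hypothetical, not MedXIAOHE's pipeline.

```python
from collections import defaultdict


def group_by_entity(docs):
    """Bucket document ids under every medical entity they mention.

    `docs` maps a document id to a set of entity names (assumed to come
    from an upstream entity tagger, which is not shown here).
    """
    buckets = defaultdict(list)
    for doc_id, entities in docs.items():
        for entity in entities:
            buckets[entity].append(doc_id)
    return dict(buckets)


def entity_sampling_weights(buckets, temperature=0.5):
    """Per-entity sampling probabilities proportional to count ** temperature.

    temperature=1.0 reproduces raw document frequency; values below 1.0
    flatten the distribution so long-tail entities are sampled more often.
    """
    scaled = {entity: len(ids) ** temperature for entity, ids in buckets.items()}
    total = sum(scaled.values())
    return {entity: w / total for entity, w in scaled.items()}


if __name__ == "__main__":
    # Toy corpus: 9 documents on a common condition, 1 on a rare one.
    corpus = {f"common_{i}": {"pneumonia"} for i in range(9)}
    corpus["rare_0"] = {"Fabry disease"}
    weights = entity_sampling_weights(group_by_entity(corpus), temperature=0.5)
    print(weights)  # {'pneumonia': 0.75, 'Fabry disease': 0.25}
```

With `temperature=1.0` the weights fall back to raw document share (0.9 and 0.1 here); lowering it trades some head-entity data for long-tail coverage.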

The result outperforms leading closed-source multimodal systems across multiple medical benchmarks. The three-phase recipe — knowledge expansion, reasoning reinforcement, reliability guardrails — is a transferable playbook for vertical-domain multimodal development.

Key takeaways:
- Entity-aware pretraining solves long-tail medical knowledge coverage
- RL plus tool augmentation enables verifiable multi-step diagnostic reasoning
- The three-phase training framework generalizes to other specialized domains

Source: MedXIAOHE: A Comprehensive Recipe for Building Medical MLLMs

02 Robotics Xiaomi Runs Real-Time Robot Control on a Consumer GPU

Vision-language-action (VLA) models have a deployment-killing problem: inference latency. If generating the next action takes longer than the control cycle, the robot stutters or loses control. Xiaomi-Robotics-0 addresses this with asynchronous execution: during training, the model learns to predict the next action chunk while the current one is still executing, and at deployment, consecutive action chunks are timestamp-aligned so rollouts stay seamless.
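The deployment-side alignment can be illustrated with a small simulation, assuming each chunk is tagged with the control step of the observation it was predicted from. While a new chunk is being computed, the robot keeps executing the old one; when the new chunk arrives, execution enters it at the action whose index matches the current step, skipping the prefix that is already stale. `ActionChunk`, `fake_policy`, and `run_async` are hypothetical names, and the fixed latency and replanning schedule are simplifying assumptions, not Xiaomi-Robotics-0's code.

```python
from dataclasses import dataclass


@dataclass
class ActionChunk:
    start_step: int  # control step of the observation this chunk was predicted from
    actions: list    # actions[i] is intended for control step start_step + i

    def action_at(self, step):
        """Return the action aligned to `step`, or None if the chunk doesn't cover it."""
        i = step - self.start_step
        if 0 <= i < len(self.actions):
            return self.actions[i]
        return None


def fake_policy(obs_step, horizon):
    """Hypothetical stand-in for the VLA: names each action after its target step."""
    return ActionChunk(obs_step, [f"a{obs_step + i}" for i in range(horizon)])


def run_async(total_steps, horizon=8, latency=3, replan_every=4):
    """Simulate asynchronous chunk execution with timestamp alignment.

    Every `replan_every` steps, inference starts from the current observation
    and takes `latency` control steps; the robot keeps executing the current
    chunk meanwhile. A finished chunk is entered at the index matching the
    current step, so its already-stale prefix is skipped.
    """
    current = fake_policy(0, horizon)  # assume the first chunk is ready at step 0
    pending = []                       # (arrival_step, chunk) pairs still in flight
    executed = []
    for step in range(total_steps):
        if step > 0 and step % replan_every == 0:
            pending.append((step + latency, fake_policy(step, horizon)))
        ready = [chunk for arrival, chunk in pending if arrival <= step]
        pending = [(arrival, chunk) for arrival, chunk in pending if arrival > step]
        if ready:
            current = ready[-1]  # the most recently launched finished chunk wins
        action = current.action_at(step)
        if action is None:
            raise RuntimeError(f"no action for step {step}: chunk ran out before the next arrived")
        executed.append(action)
    return executed


if __name__ == "__main__":
    print(run_async(12))  # prints 'a0' through 'a11' in order: no gaps, no repeats
```

If the executor instead restarted each new chunk at index 0, step 7 would replay `a4`, which is exactly the kind of stutter the alignment avoids. The simulation also makes the timing budget explicit: `replan_every + latency` must not exceed `horizon`, or the current chunk runs out before the next one lands.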

The model is pretrained on large-scale cross-embodiment trajectories for general action generation, then post-trained for target tasks. In practice, it handles precise bimanual manipulation on consumer-grade GPUs. Code and weights are open-sourced.

For teams trying to deploy VLA models on real hardware, this approach (design for asynchrony in training, then align chunks at deployment) is more practical than simply scaling up the model.

Key takeaways:
- Asynchronous execution designed into training eliminates the inference latency bottleneck
- Consumer GPU deployable, lowering the hardware barrier for robot AI
- Code and weights open-sourced for direct reproduction

Source: Xiaomi-Robotics-0: An Open-Sourced Vision-Language-Action Model with Real-Time Execution

03 Agent Science Agents Get Lost After a Few Steps

Having AI agents run experiments and analyses for scientists sounds great. In reality, scientific workflows involve many domain-specific tools and long multi-step chains, and current agents handle them poorly. SciAgentGym runs a systematic stress test: four natural-science disciplines, 1,780 domain tools, and tiered evaluation from single-step tasks to long-chain workflows.

GPT-5 hits 60.6% success on simple tasks but drops to 30.9% as workflows get longer. The interesting part is the proposed SciForge data synthesis method: it models tool-call relationships as dependency graphs to generate training trajectories. The resulting SciAgent-8B outperforms Qwen3-VL-235B, a model roughly 30x its size, and shows positive cross-domain transfer.
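The summary doesn't detail SciForge's algorithm, so this is only a minimal sketch of the general idea under stated assumptions: each tool declares the artifact types it consumes and produces, an edge A -> B exists when A's output feeds B's input, and a synthetic trajectory is a chain in which every call's inputs were already produced upstream. The tool registry, artifact types, and function names are hypothetical.

```python
import random

# Hypothetical tool registry: tool name -> (input artifact types, output artifact types).
TOOLS = {
    "fetch_structure": ({"protein_id"}, {"pdb_file"}),
    "compute_sasa": ({"pdb_file"}, {"sasa_table"}),
    "predict_pocket": ({"pdb_file"}, {"pocket_coords"}),
    "dock_ligand": ({"pdb_file", "pocket_coords", "smiles"}, {"docking_score"}),
    "summarize": ({"docking_score"}, {"report"}),
}


def build_dependency_graph(tools):
    """Adjacency list with an edge A -> B when some output of A is an input of B."""
    return {
        a: sorted(b for b, (b_inputs, _) in tools.items() if a_outputs & b_inputs)
        for a, (_, a_outputs) in tools.items()
    }


def sample_trajectory(tools, initial_artifacts, max_steps, rng):
    """Sample a tool-call chain where every call's inputs already exist.

    A tool becomes callable only after upstream calls (or the initial data)
    have produced all of its input artifacts, so the chain follows the
    dependency graph and is executable by construction.
    """
    available = set(initial_artifacts)
    chain = []
    for _ in range(max_steps):
        callable_now = sorted(
            name for name, (inputs, _) in tools.items()
            if name not in chain and inputs <= available
        )
        if not callable_now:
            break
        tool = rng.choice(callable_now)
        chain.append(tool)
        available |= tools[tool][1]
    return chain


if __name__ == "__main__":
    print(build_dependency_graph(TOOLS)["fetch_structure"])
    # ['compute_sasa', 'dock_ligand', 'predict_pocket']
    rng = random.Random(0)
    print(sample_trajectory(TOOLS, {"protein_id", "smiles"}, max_steps=5, rng=rng))
```

Because a tool only becomes callable once its inputs exist, every sampled chain can actually run end to end, unlike a uniformly random sequence of tool names.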

The bottleneck for science agents isn't model size. It's whether training data teaches the model to understand logical dependencies between tools.

Key takeaways:
- Multi-step scientific tool use is a systematic failure mode for current agents
- Dependency-graph-aware data synthesis is the key breakthrough
- An 8B fine-tuned model beating a 235B one suggests domain adaptation can outweigh parameter count

Source: SciAgentGym: Benchmarking Multi-Step Scientific Tool-use in LLM Agents

04 Training The Hidden Cost of RL Fine-Tuning: Scores Go Up, Reasoning Breaks Down

RL fine-tuning makes VLMs score higher on visual reasoning benchmarks. This paper checks the reasoning chains themselves, and the findings are not encouraging: simple textual perturbations, such as a misleading caption or an incorrect CoT, cause large performance drops. The deeper issue is an accuracy-faithfulness trade-off introduced by RL fine-tuning: benchmark scores rise while the CoT's alignment with the actual visual evidence falls.

Two fixes were attempted. Adversarial augmentation improves robustness but doesn't stop faithfulness drift. A faithfulness-aware reward restores alignment, but when it is combined with adversarial augmentation, the model learns shortcut strategies instead.
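The summary doesn't give the faithfulness-aware reward's exact form. A common way to build such a reward, assumed here purely for illustration, is an accuracy term plus a weighted faithfulness score, where the score comes from some external checker of whether the CoT is grounded in the image. `shaped_reward`, the checker, and the weight `lam` are hypothetical.

```python
def shaped_reward(answer_correct, faithfulness, lam=0.5):
    """Hypothetical faithfulness-aware reward: correctness plus weighted grounding.

    answer_correct: whether the final answer matches the reference.
    faithfulness:   score in [0, 1] from an assumed external checker of how well
                    the chain of thought is supported by the visual evidence.
    lam:            assumed trade-off weight between the two terms.
    """
    if not 0.0 <= faithfulness <= 1.0:
        raise ValueError("faithfulness must lie in [0, 1]")
    return float(answer_correct) + lam * faithfulness


if __name__ == "__main__":
    rollouts = {
        "correct, grounded CoT": (True, 0.9),
        "correct, ungrounded CoT": (True, 0.2),
        "wrong, grounded CoT": (False, 1.0),
    }
    for label, (correct, faith) in rollouts.items():
        print(f"{label}: {shaped_reward(correct, faith):.2f}")
```

Under this shaping, a correct answer with a poorly grounded CoT earns less than a correct answer with a grounded one, and `lam` controls how strongly grounding counts relative to correctness.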

A warning for every team doing VLM RL fine-tuning: accuracy alone isn't enough. Chain-of-thought faithfulness needs independent evaluation.
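Evaluating faithfulness independently can be as simple as keeping three signals apart in the eval summary: clean accuracy, accuracy under textual perturbation, and the share of CoTs judged consistent with the visual evidence. The sketch below assumes each example already carries those three judgments (how faithfulness is judged is left open); the record format, helper names, and toy data are hypothetical, not results from the paper.

```python
from dataclasses import dataclass


@dataclass
class EvalRecord:
    correct_clean: bool      # answer correct on the original input
    correct_perturbed: bool  # answer correct after a textual perturbation (e.g. a misleading caption)
    cot_faithful: bool       # CoT judged consistent with the visual evidence (checker assumed)


def rate(flags):
    """Fraction of True values in a non-empty list of booleans."""
    if not flags:
        raise ValueError("need at least one record")
    return sum(flags) / len(flags)


def report(records):
    """Keep accuracy, robustness, and faithfulness as separate numbers."""
    clean = rate([r.correct_clean for r in records])
    perturbed = rate([r.correct_perturbed for r in records])
    return {
        "accuracy": clean,
        "perturbed_accuracy": perturbed,
        "robustness_drop": clean - perturbed,
        "faithfulness": rate([r.cot_faithful for r in records]),
    }


if __name__ == "__main__":
    toy = [  # illustrative toy records, not data from the paper
        EvalRecord(True, False, False),
        EvalRecord(True, True, True),
        EvalRecord(True, False, False),
        EvalRecord(False, False, True),
    ]
    print(report(toy))
    # {'accuracy': 0.75, 'perturbed_accuracy': 0.25, 'robustness_drop': 0.5, 'faithfulness': 0.5}
```

Comparing these fields before and after RL fine-tuning is what surfaces the trade-off: a rising `accuracy` next to a falling `faithfulness` disappears inside any single aggregate score.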

Key takeaways:
- RL fine-tuning of VLMs creates a hidden accuracy-faithfulness trade-off
- Adversarial augmentation and faithfulness rewards each have limitations; there is no silver bullet yet
- Evaluating RL fine-tuning should include reasoning chain quality, not just accuracy

Source: On Robustness and Chain-of-Thought Consistency of RL-Finetuned VLMs

Also Worth Noting

Today's Observation

A clear signal today: AI is accelerating from the general-capability race into vertical-domain depth. Medical diagnosis (MedXIAOHE), scientific experimentation (SciAgentGym), robot manipulation (Xiaomi-Robotics-0), geographic reasoning (GeoAgent) — four completely different domains, but the approach is strikingly consistent: reorganize training data and reward signals around domain-expert knowledge structures. SciAgent-8B beating a 235B general model with dependency-graph synthetic data is especially telling — vertical data engineering may outperform parameter scaling. Teams building industry applications should re-examine their data assets. Turning domain know-how into training signal may matter more than picking the right foundation model.