Today's Overview

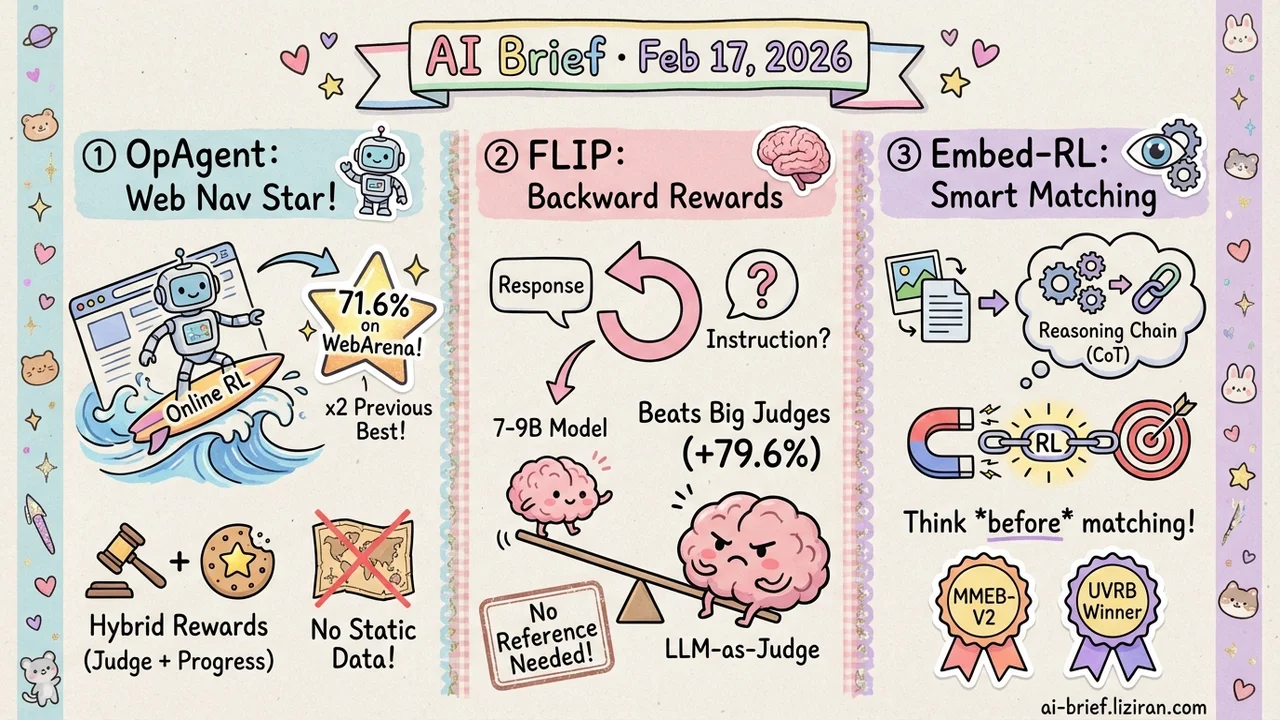

- Online RL finally works for web navigation agents. OpAgent hits 71.6% on WebArena with its modular framework, more than double the previous best of around 30%.

- Reward models don't have to judge forward. FLIP infers the instruction back from the response, and with 7–9B models it beats LLM-as-Judge baselines by an average of 79.6%.

- RL isn't just for alignment anymore — it now trains embedding reasoning chains. Embed-RL teaches cross-modal retrieval to "think before matching."

Featured

01 Agent Web Agents Finally Learn on Live Websites

Getting AI to operate web pages — fill forms, place orders, look up information — sounds straightforward. In practice, real websites are wildly dynamic, and agents trained offline fall apart the moment they go live. The core issue is distribution shift: static datasets can't simulate real-time state changes and feedback.

OpAgent skips the workaround and trains directly on live websites. A hierarchical multi-task fine-tuning stage (planning, acting, and grounding trained separately) builds the foundation. Then online RL takes over in real web environments. The reward design combines two signals: a "WebJudge" evaluates task completion holistically, while a rule-based decision tree provides step-level progress rewards, which eases credit assignment in long-horizon navigation.
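To make the hybrid reward idea concrete, here is a minimal sketch of how a terminal outcome score and per-step progress scores could be folded into per-step returns. This is not OpAgent's implementation: the function name `hybrid_returns`, the `progress_weight` and `gamma` parameters, and the choice to add the outcome score only at the final step are all illustrative assumptions.

```python
from typing import List, Sequence


def hybrid_returns(progress_scores: Sequence[float], outcome: float,
                   progress_weight: float = 0.1, gamma: float = 0.99) -> List[float]:
    """Discounted return per step from dense progress rewards plus one terminal outcome reward.

    progress_scores: hypothetical rule-based progress score for each step, in [0, 1].
    outcome: hypothetical holistic judge score for the whole trajectory, in [0, 1].
    """
    # Dense, down-weighted shaping reward at every step.
    rewards = [progress_weight * p for p in progress_scores]
    if rewards:
        # The outcome judgment is only known once the episode ends.
        rewards[-1] += outcome
    # Accumulate discounted returns from the last step backward.
    returns: List[float] = []
    running = 0.0
    for r in reversed(rewards):
        running = r + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


if __name__ == "__main__":
    # Three steps, progress made on the first and third, task judged successful.
    print(hybrid_returns([1.0, 0.0, 1.0], outcome=1.0))
```

The design point is that early steps receive a nonzero learning signal even when the final verdict is many actions away, while the outcome reward still dominates because the progress term is down-weighted.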

The single-model version already hits 38.1% (pass@5), beating all monolithic baselines. Add the modular framework — Planner, Grounder, Reflector, Summarizer — and it reaches 71.6%. Previous best was around 30%. If you're building web automation, online RL looks far more promising than collecting more SFT data.

Key takeaways:

- Online RL solves the distribution shift bottleneck of offline training

- Hybrid rewards (outcome judgment + progress signals) are critical for long-horizon agents

- 71.6% sets a new WebArena SOTA

Source: OpAgent: Operator Agent for Web Navigation

02 Training Reward Models That Look Backward, Not Forward

Reward models are a core component of LLM training and inference pipelines, but mainstream approaches either depend on large models as judges or require reference answers and rubrics. Both limit flexibility and accessibility. FLIP takes a different angle: given a response, instead of directly judging its quality, it infers what instruction would most plausibly produce that response, then measures how similar the inferred instruction is to the original. The closer the match, the more on-topic the answer.
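The backward-inference idea can be sketched in a few lines. The example below is an illustration under stated assumptions, not FLIP's code: `infer_instruction` stands in for a call to a small language model that guesses the instruction from a response, and token-level F1 is one simple similarity measure chosen here for the sketch. The source says only that the inferred and original instructions are compared for similarity.

```python
from collections import Counter
from typing import Callable


def token_f1(a: str, b: str) -> float:
    """Token-overlap F1 between two strings (whitespace tokens, case-insensitive)."""
    tokens_a, tokens_b = a.lower().split(), b.lower().split()
    if not tokens_a or not tokens_b:
        return 0.0
    # Multiset intersection counts each shared token at most min(count_a, count_b) times.
    overlap = sum((Counter(tokens_a) & Counter(tokens_b)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(tokens_a)
    recall = overlap / len(tokens_b)
    return 2 * precision * recall / (precision + recall)


def backward_reward(instruction: str, response: str,
                    infer_instruction: Callable[[str], str]) -> float:
    """Score a response by how well the instruction inferred from it matches the real one."""
    inferred = infer_instruction(response)  # hypothetical small-model call
    return token_f1(inferred, instruction)


if __name__ == "__main__":
    # Stub standing in for the model: pretend it reads the response and guesses the task.
    stub = lambda response: "translate the sentence to french"
    print(backward_reward("Translate the sentence to French", "Bonjour le monde", stub))
```

No reference answer or rubric appears anywhere in the scoring path: the only inputs are the original instruction, the response, and the model that infers an instruction from it.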

This "backward inference" approach needs no reference answers, no scoring rubrics, and runs on 7–9B models. Across 13 small language models, FLIP outperforms LLM-as-Judge baselines by an average of 79.6%. In downstream applications — test-time scaling via parallel sampling and GRPO training — FLIP's reward signal delivers clear improvements. It's also more effective on long outputs and more robust against common reward hacking.
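Both downstream uses consume a list of per-response rewards, and a short sketch shows how. The helper names below are hypothetical, and the reward values would come from whatever reward model is in use. The advantage formula is the standard GRPO group normalization (subtract the group mean, divide by the group standard deviation); using the population standard deviation and an `eps` term is an implementation choice made here for the sketch.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def best_of_n(candidates: Sequence[str], rewards: Sequence[float]) -> str:
    """Test-time scaling via parallel sampling: keep the highest-reward candidate."""
    best_index = max(range(len(candidates)), key=lambda i: rewards[i])
    return candidates[best_index]


def group_relative_advantages(rewards: Sequence[float], eps: float = 1e-8) -> List[float]:
    """GRPO-style advantages: normalize each reward against its own sampling group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    # eps keeps the division defined when every response in the group scores the same.
    return [(r - mu) / (sigma + eps) for r in rewards]


if __name__ == "__main__":
    candidates = ["answer A", "answer B", "answer C"]
    rewards = [0.2, 0.9, 0.5]
    print(best_of_n(candidates, rewards))
    print(group_relative_advantages(rewards))
```

Because the advantages are relative within a group, only the ranking and spread of rewards matter, which is why a small reward model with a reliable ordering can be enough.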

Key takeaways:

- "Backward instruction inference" is a new reference-free reward modeling paradigm

- 7–9B models can replace large-model judges

- Naturally resistant to long-output degradation and reward hacking

Source: Small Reward Models via Backward Inference

03 Multimodal Cross-Modal Retrieval Learns to Reason Before Matching

Multimodal retrieval (image-to-text, text-to-image) traditionally encodes queries and targets into vectors and compares similarity. Recent work showed that adding a CoT reasoning step, in which the model analyzes the query before generating an embedding, improves retrieval. The problem: existing reasoning chains only analyze the query itself and are completely disconnected from the retrieval target. Reasoning and matching are decoupled.

Embed-RL fixes this with reinforcement learning. The Embedder (responsible for final matching) provides reward signals directly to the Reasoner (responsible for CoT generation), ensuring the reasoning chain actually serves the retrieval task. It also introduces T-CoT (Traceability Chain-of-Thought), which extracts key visual and textual cues from multimodal inputs as retrieval evidence. Under limited compute, the framework outperforms prior state-of-the-art embedding models on both MMEB-V2 and UVRB benchmarks.
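One way to picture the Embedder rewarding the Reasoner is a margin between the correct target and the hardest wrong one. The sketch below is an assumption-heavy illustration, not Embed-RL's reward: the `embed` callable, the string concatenation of query and reasoning, and the margin formula are all hypothetical, and plain text stands in for multimodal inputs.

```python
import math
from typing import Callable, List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity, defined as 0.0 when either vector has zero norm."""
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(x * x for x in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(u, v)) / (norm_u * norm_v)


def reasoner_reward(query: str, reasoning: str, positive: str, negatives: List[str],
                    embed: Callable[[str], Sequence[float]]) -> float:
    """Reward a reasoning chain by how much it helps the embedder separate the right target."""
    # The embedder sees the query together with the generated reasoning chain.
    q = embed(query + " " + reasoning)
    positive_sim = cosine(q, embed(positive))
    hardest_negative_sim = max((cosine(q, embed(n)) for n in negatives), default=0.0)
    return positive_sim - hardest_negative_sim


if __name__ == "__main__":
    # Toy embedder: counts two keywords. A real system would call the embedding model.
    toy_embed = lambda text: [text.count("dog"), text.count("cat")]
    print(reasoner_reward("photo", "a dog on grass", "dog playing", ["cat sleeping"], toy_embed))
```

A chain that surfaces cues shared with the correct target scores high, and one that drifts toward a distractor scores low, so the reasoning is optimized for matching rather than for describing the query in isolation.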

Key takeaways:

- Aligning reasoning chains with retrieval targets is the key improvement

- RL makes embedding models' reasoning chains genuinely serve the matching task

- Cross-modal retrieval is shifting from "encode directly" to "reason then encode"

Source: Embed-RL: Reinforcement Learning for Reasoning-Driven Multimodal Embeddings

Also Worth Noting

Today's Observation

An interesting convergence today: RL is infiltrating parts of the LLM pipeline that were previously considered "no RL needed." OpAgent applies RL to online policy learning for web agents. Embed-RL applies RL to reasoning chain optimization for embedding models. FLIP doesn't use RL itself but its output directly serves GRPO training. RL is no longer just an alignment-stage tool — it's becoming a general-purpose method for improving any AI module with a clear feedback signal. Teams working on search, recommendations, or automation should reassess which parts of their pipeline could benefit from RL-driven optimization.