Today's Overview

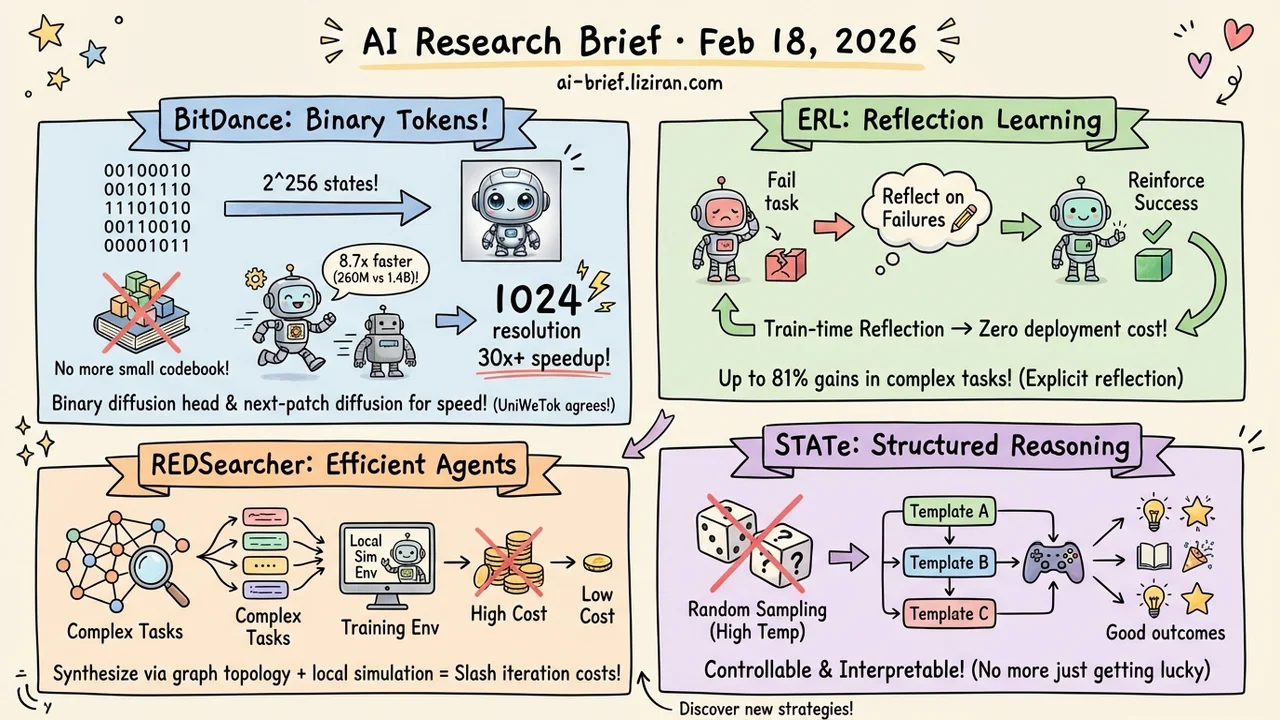

- Binary tokens replace codebook indices. BitDance matches 1.4B-parameter models with 260M parameters, runs 8.7x faster, and achieves a 30x+ speedup at 1024x1024 resolution.

- RL training feedback too sparse for models to learn? ERL adds explicit reflection on failures before reinforcing successes, with gains up to 81% in complex environments.

- Training data for search agents is expensive and hard to build. REDSearcher synthesizes complex tasks via graph topology and pairs them with a local simulation environment to slash RL iteration costs.

- Inference-time compute still relies on high-temperature sampling to get lucky? STATe replaces random sampling with structured reasoning templates — more controllable and more interpretable.

Featured

01 | Image Gen | Binary Tokens Make Image Generation Fast and Good

Autoregressive (AR) image generation typically encodes images into discrete tokens via a codebook. But codebook size is limited, and so is expressiveness. BitDance takes a different approach: it predicts binary visual tokens directly. Each token can represent up to $2^{256}$ states, orders of magnitude more than a traditional codebook can index.
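To put the capacity claim in numbers, here is a small Python sketch. The 256-bit token and the $2^{14}$-entry VQ codebook are illustrative assumptions, not BitDance's reported configuration; the point is only that capacity grows as $2^{\text{bits}}$, and that a one-logit-per-state softmax stops being an option long before 256 bits.

```python
# Illustrative sizes only (assumptions, not BitDance's reported configuration).
VQ_CODEBOOK_SIZE = 16_384  # a typical VQ codebook: 2**14 entries
NUM_BITS = 256             # bits per binary visual token

def bits_to_index(bits: list[int]) -> int:
    """Read a 0/1 list as an unsigned integer, most significant bit first."""
    index = 0
    for b in bits:
        index = (index << 1) | b
    return index

def num_states(num_bits: int) -> int:
    """A token made of num_bits independent bits can take 2**num_bits distinct values."""
    return 2 ** num_bits

# Each extra bit doubles capacity: 2**256 / 2**14 = 2**242.
ratio = num_states(NUM_BITS) // VQ_CODEBOOK_SIZE
print(f"binary/VQ capacity ratio: 2^{ratio.bit_length() - 1}")  # prints 2^242

# A softmax head needs one logit per state. 16_384 logits is routine;
# 2**256 logits cannot be materialized, so the bits must be generated another way.
print(bits_to_index([1, 0, 1, 1]))  # prints 11
```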

The catch: you can't use softmax classification over a space that large. BitDance solves this by embedding a binary diffusion head inside the AR framework, using continuous-space diffusion to generate binary tokens. They also introduce next-patch diffusion — predicting multiple tokens in parallel — for a massive inference speedup.
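A minimal sketch of the two mechanisms, under stated assumptions. Bits are embedded as a ±1 vector so a continuous-space generator has a target, and its sample is snapped back to bits by sign. The "diffusion head" is faked here with a noisy copy of the target, so nothing about BitDance's actual network, noise schedule, or patch size is implied. `num_decode_steps` is a hypothetical helper showing how emitting a patch of tokens per step shrinks the number of sequential AR steps.

```python
import math

import numpy as np

def bits_to_pm1(bits: np.ndarray) -> np.ndarray:
    """Embed bits {0, 1} as {-1.0, +1.0}: a continuous target a diffusion head can denoise toward."""
    return 2.0 * bits - 1.0

def quantize_by_sign(x: np.ndarray) -> np.ndarray:
    """Snap a continuous sample back to bits: positive -> 1, otherwise 0."""
    return (x > 0).astype(np.int64)

def num_decode_steps(num_tokens: int, tokens_per_step: int) -> int:
    """Sequential AR steps when each step emits a patch of tokens in parallel."""
    return math.ceil(num_tokens / tokens_per_step)

rng = np.random.default_rng(0)
bits = rng.integers(0, 2, size=256)
# Fake "diffusion head output": the clean ±1 target plus small residual noise.
sample = bits_to_pm1(bits) + 0.1 * rng.standard_normal(256)
print(np.array_equal(quantize_by_sign(sample), bits))  # True

# Assumed 16x downsampling: a 1024x1024 image -> 64 * 64 = 4096 tokens.
print(num_decode_steps(4096, 1), num_decode_steps(4096, 16))  # 4096 256
```

The step count is not a wall-clock speedup: each parallel step does more work, and the 8.7x and 30x figures above come from the paper's own measurements.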

On ImageNet 256x256, BitDance achieves FID 1.24 (best among AR models). With 260M parameters it matches a 1.4B parallel AR model at 8.7x the speed. At 1024x1024 resolution, the speedup exceeds 30x. The same day, UniWeTok independently published a similar bet: a $2^{128}$ binary codebook for unified multimodal tokenization, hitting SOTA on both generation and understanding. Two independent teams converging on the same idea is a strong signal.

Key takeaways:

- Binary tokens break the codebook capacity bottleneck with exponentially richer representations
- Diffusion heads solve the sampling problem for ultra-large discrete spaces
- Two independent papers bet on binary tokens on the same day: the trend is clear

Source: BitDance: Scaling Autoregressive Generative Models with Binary Tokens

02 | Training | Let Models Reflect on Failure Before Reinforcing Success

RL training for language models has a persistent problem: environmental feedback is sparse and delayed. The model knows it failed but has no idea what to fix — so it relies on implicit trial-and-error, which is slow.

Experiential Reinforcement Learning (ERL) adds an explicit experience-reflection-consolidation loop. The model makes an attempt, receives feedback, then generates a reflection analyzing what went wrong. It uses this reflection to make a second attempt. If the second attempt succeeds, that corrected behavior gets reinforced and internalized into the base policy.

The key design choice: reflection is only used during training. At deployment, the policy answers in a single pass with no extra inference steps, so reflection adds zero inference cost. In complex multi-step control environments, ERL improves performance by up to 81%. On tool-use reasoning tasks, gains reach 11%.
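The experience-reflection-consolidation loop can be sketched with toy stand-ins. Everything here is hypothetical: the policy is a lookup table, the environment returns only "too low", "too high", or "success", and "reinforcement" simply writes the corrected answer back into the table rather than applying an RL update to model weights. What the sketch does show is the control flow: reflection runs only inside `erl_train_step`, consolidation is gated on the second attempt succeeding, and `deploy` makes a single call.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class ToyPolicy:
    """Hypothetical policy: one stored answer per task, defaulting to 0."""
    answers: dict = field(default_factory=dict)

    def act(self, task: str, reflection: Optional[int] = None) -> int:
        # During training a reflection can override the policy's default answer.
        if reflection is not None:
            return reflection
        return self.answers.get(task, 0)

def env_feedback(task: str, answer: int, targets: dict) -> str:
    """Toy environment: a terse signal with no explanation of what to fix."""
    target = targets[task]
    if answer == target:
        return "success"
    return "too low" if answer < target else "too high"

def reflect(answer: int, feedback: str) -> int:
    """Hypothetical reflection: turn the failure signal into a concrete revised answer."""
    return answer + 1 if feedback == "too low" else answer - 1

def erl_train_step(policy: ToyPolicy, task: str, targets: dict) -> bool:
    """Attempt -> feedback -> reflection -> second attempt -> reinforce only on success."""
    first = policy.act(task)
    feedback = env_feedback(task, first, targets)
    if feedback == "success":
        policy.answers[task] = first  # already correct: reinforce as-is
        return True
    revised = policy.act(task, reflection=reflect(first, feedback))
    if env_feedback(task, revised, targets) == "success":
        # Consolidation: the corrected behavior is written into the base policy.
        policy.answers[task] = revised
        return True
    return False  # failed correction: nothing is internalized

def deploy(policy: ToyPolicy, task: str) -> int:
    """Deployment uses a single attempt with no reflection step."""
    return policy.act(task)

targets = {"a": 1, "b": 5}
policy = ToyPolicy()
for task in targets:
    erl_train_step(policy, task, targets)
print(policy.answers)  # {'a': 1}
```

Task "b" stays unlearned because one reflection step was not enough to reach its target, so nothing was consolidated for it.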

Key takeaways:

- Explicit reflection converts sparse feedback into structured behavioral correction
- Train-time reflection with zero inference overhead: the best of both worlds
- Especially effective for long-horizon tasks and sparse reward settings

Source: Experiential Reinforcement Learning

03 | Agent | The Search Agent Bottleneck Isn't the Model, It's Data and Environment

Training deep search agents faces two cost bottlenecks. First, constructing complex search tasks is extremely labor-intensive. Second, every training trajectory requires heavy external tool calls, making rollout costs prohibitive.

REDSearcher addresses both systematically. For task synthesis, it frames the problem as dual-constrained optimization — using knowledge graph topology to control task difficulty and evidence dispersion to control retrieval complexity, enabling large-scale automatic generation of difficulty-calibrated search tasks. During mid-training, it strengthens three atomic capabilities — knowledge, planning, and function calling — which drastically reduces the cost of collecting high-quality trajectories downstream. Finally, it builds a local simulated search environment that makes RL iteration fast and affordable.
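The two-knob task synthesis idea can be sketched on a toy graph. This is a hypothetical illustration, not REDSearcher's pipeline: hop count stands in for topological difficulty, the number of distinct source documents along a path stands in for evidence dispersion, and a path becomes a task only when it meets both constraints. The edges' source labels (`doc_bio`, `doc_geo`, and so on) are made up.

```python
from collections import defaultdict

# Hypothetical knowledge-graph edges: (head, relation, tail, source_document).
EDGES = [
    ("Marie Curie", "born_in", "Warsaw", "doc_bio"),
    ("Warsaw", "capital_of", "Poland", "doc_geo"),
    ("Poland", "member_of", "European Union", "doc_eu"),
    ("Marie Curie", "award", "Nobel Prize", "doc_bio"),
    ("Nobel Prize", "founded_by", "Alfred Nobel", "doc_prize"),
]

def paths_of_length(edges, start, hops):
    """Enumerate simple paths with exactly `hops` edges from `start` (topology knob)."""
    adj = defaultdict(list)
    for head, rel, tail, doc in edges:
        adj[head].append((head, rel, tail, doc))
    results = []

    def walk(node, path, visited):
        if len(path) == hops:
            results.append(list(path))
            return
        for edge in adj[node]:
            tail = edge[2]
            if tail not in visited:
                walk(tail, path + [edge], visited | {tail})

    walk(start, [], {start})
    return results

def evidence_dispersion(path):
    """Distinct source documents needed to verify every hop (retrieval knob)."""
    return len({doc for *_, doc in path})

def synthesize_tasks(edges, start, hops, min_dispersion):
    """Keep only paths that satisfy both constraints, and phrase each as a question."""
    tasks = []
    for path in paths_of_length(edges, start, hops):
        if evidence_dispersion(path) >= min_dispersion:
            chain = " -> ".join(rel for _, rel, _, _ in path)
            tasks.append({"question": f"From {start}, where does {chain} lead?",
                          "answer": path[-1][2]})
    return tasks

print(synthesize_tasks(EDGES, "Marie Curie", hops=3, min_dispersion=3))
```

Raising `hops` makes questions longer chains; raising `min_dispersion` forces the answer to depend on more separate retrievals. The two can be tuned independently.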

The result: SOTA on both text and multimodal search agent benchmarks. The team also open-sources 10K text and 5K multimodal search trajectories.

Key takeaways:

- Graph topology + evidence dispersion is an effective lever for synthesizing complex search tasks at scale
- Mid-training on atomic capabilities significantly cuts downstream RL data costs
- Local simulation environments make search agent RL iteration tractable

Source: REDSearcher: A Scalable and Cost-Efficient Framework for Long-Horizon Search Agents

04 | Reasoning | Structured Templates Beat Random Sampling for Inference-Time Compute

Tree-of-Thoughts and similar inference-time compute (ITC) methods need diverse candidate solutions. In practice, the main lever is cranking up sampling temperature — but "random" doesn't mean "diverse." Many candidates just rephrase the same reasoning.

STATe-of-Thoughts takes a fundamentally different approach. Instead of stochastic sampling, it encodes high-level reasoning choices as discrete, structured action templates. A controller selects a reasoning strategy, a generator produces steps conditioned on that strategy, and an evaluator scores candidates to guide the search.
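The controller/generator/evaluator split can be sketched as a beam search over discrete action templates. This is our illustration, not the STATe-of-Thoughts implementation: the template names, the stub generator, and the toy evaluator (which scores action sequences rather than generated text) are all hypothetical, and the "controller" simply tries every template at each depth. The property it does show is that every candidate carries an explicit action sequence alongside its text.

```python
from typing import Callable

# Hypothetical discrete action templates (high-level reasoning choices).
ACTIONS = ["decompose", "work_backward", "try_example", "verify"]

def generate_step(steps: list[str], action: str) -> str:
    """Stand-in generator: in practice an LLM call conditioned on the chosen template."""
    return f"[{action}] step {len(steps) + 1}"

def toy_evaluator(actions: list[str]) -> float:
    """Hypothetical scorer over action sequences (a real evaluator would score the text)."""
    score = 0.0
    if actions and actions[0] == "decompose":
        score += 1.0
    if len(actions) > 1 and actions[-1] == "verify":
        score += 1.0
    score -= 0.5 * (len(actions) - len(set(actions)))  # penalize reusing a template
    return score

def template_beam_search(
    evaluate: Callable[[list[str]], float], depth: int, beam_width: int
) -> list[tuple[list[str], list[str]]]:
    """Expand every beam with every template, score, and keep the top beam_width."""
    beams: list[tuple[list[str], list[str]]] = [([], [])]  # (actions, generated steps)
    for _ in range(depth):
        candidates = []
        for actions, steps in beams:
            for action in ACTIONS:  # controller: here it simply tries every template
                candidates.append((actions + [action], steps + [generate_step(steps, action)]))
        # Evaluator ranks candidates; sort is stable, so ties keep expansion order.
        candidates.sort(key=lambda c: evaluate(c[0]), reverse=True)
        beams = candidates[:beam_width]
    return beams

best_actions, best_steps = template_beam_search(toy_evaluator, depth=3, beam_width=2)[0]
print(best_actions)  # ['decompose', 'work_backward', 'verify']
```

Swapping the exhaustive loop for a learned controller that proposes a few templates per step would cut the branching factor; the search structure stays the same.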

This yields better diversity than temperature sampling. More importantly, because each reasoning path maps to an explicit action sequence, you can analyze which strategies tend to produce high-quality results — and even discover unexplored but promising regions of the reasoning space and navigate toward them. The biggest value may not be today's benchmark numbers, but that it makes ITC interpretable, analyzable, and steerable.
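Because each path is an explicit action sequence, strategy-level analysis reduces to bookkeeping over a search log. A minimal sketch, assuming a hypothetical log format of (actions, score) pairs with made-up scores: it averages scores per template and lists template transitions the search has never tried, one crude way to flag unexplored regions of the reasoning space. It is not the paper's analysis method.

```python
from collections import defaultdict
from itertools import permutations
from statistics import mean

ACTIONS = ["decompose", "work_backward", "try_example", "verify"]

# Hypothetical search log of (action sequence, evaluator score). Illustrative, not real results.
LOG = [
    (["decompose", "verify"], 0.9),
    (["decompose", "try_example"], 0.6),
    (["work_backward", "verify"], 0.7),
    (["try_example"], 0.2),
]

def mean_score_by_action(log):
    """Average score of the paths that used each template at least once."""
    scores = defaultdict(list)
    for actions, score in log:
        for action in set(actions):
            scores[action].append(score)
    return {action: mean(vals) for action, vals in sorted(scores.items())}

def untried_transitions(log, actions):
    """Ordered template pairs (a then b) that never appear consecutively in the log."""
    seen = {(a, b) for seq, _ in log for a, b in zip(seq, seq[1:])}
    return sorted(set(permutations(actions, 2)) - seen)

stats = mean_score_by_action(LOG)
print(max(stats, key=stats.get))               # verify
print(len(untried_transitions(LOG, ACTIONS)))  # 9
```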

Key takeaways:

- Structured action templates produce more meaningful reasoning diversity than temperature sampling
- Interpretable reasoning paths enable strategy-level optimization
- A path from "getting lucky" to "having a strategy" for inference-time compute

Source: STATe-of-Thoughts: Structured Action Templates for Tree-of-Thoughts

Also Worth Noting

Today's Observation

The most striking signal today is the simultaneous emergence of binary tokens in visual representation. BitDance and UniWeTok, two independent teams, both chose massive binary codebooks to replace traditional VQ: BitDance for AR image generation, UniWeTok for unified multimodal understanding and generation. When two teams who don't know about each other converge on the same approach, it's usually no coincidence; it means the field has reached that fork in the road. If you work on image or multimodal generation, binary tokenizers are worth watching closely. They may redefine how visual tokens are designed.