Today's Overview

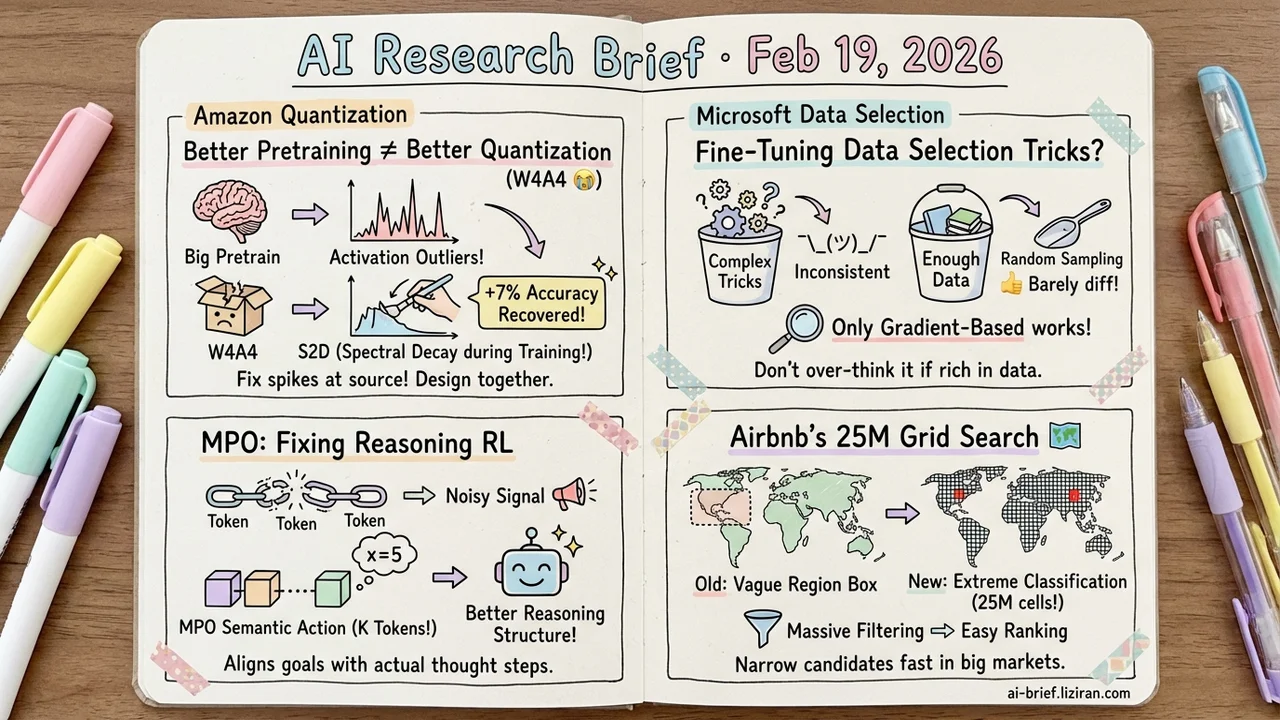

- Better pretraining makes quantization worse. Amazon finds that activation outlier severity grows with pretraining duration. S2D applies selective spectral decay during fine-tuning to address the root cause, recovering up to 7% accuracy under W4A4 (4-bit weights and activations).

- Most fine-tuning data selection tricks are a waste of effort. Microsoft Research systematically disentangles the components and finds only gradient-based representations reliably predict downstream performance. With enough data, careful selection barely beats random.

- Token-level policy gradients fundamentally mismatch reasoning granularity. MPO packs K consecutive tokens into a single semantic action for policy gradient, aligning the optimization target with actual reasoning structure.

- Airbnb reformulates geo-retrieval as extreme classification over 25 million grid cells. This drastically narrows the candidate set before ranking, tackling the heterogeneity problem inherent in two-sided marketplaces.

Featured

01 Efficiency Better Pretraining Makes Quantization Worse

A set of experiments from Amazon reveals a consistent pattern: from CLIP to SigLIP to SigLIP2, more pretraining means worse activation outliers and steeper accuracy drops after quantization. The root cause traces to a few dominant singular values in the weight matrices. Longer pretraining amplifies them, which widens the activation range, so a low-bit grid must cover more range with the same number of levels and loses precision on typical values.
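To see why a wider activation range hurts, here is a minimal sketch of symmetric uniform 4-bit quantization on toy data. The activation values and the single outlier of 40.0 are made up for illustration; nothing here comes from the paper's experiments.

```python
def quantize_uniform(values, bits=4):
    """Symmetric uniform quantization: round onto a signed integer grid, then map back."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit
    scale = max(abs(v) for v in values) / qmax      # step size is set by the largest value
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]


def mean_abs_error(values, bits=4):
    """Average absolute difference between the values and their quantized versions."""
    dequantized = quantize_uniform(values, bits)
    return sum(abs(a - b) for a, b in zip(values, dequantized)) / len(values)


# Hypothetical activations: 101 evenly spaced values in [-1, 1].
typical = [i / 50 - 1 for i in range(101)]
# The same activations plus one extreme outlier (assumed value).
with_outlier = typical + [40.0]

err_typical = mean_abs_error(typical)
err_outlier = mean_abs_error(with_outlier)
print(f"no outlier:   {err_typical:.3f}")
print(f"with outlier: {err_outlier:.3f}")
```

With the outlier present, the step size grows so much that every typical value rounds to zero, which is the failure mode a narrower activation range avoids.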

S2D (Selective Spectral Decay) applies targeted regularization to these dominant singular values during fine-tuning, compressing the activation range at the source rather than patching things up at quantization time. The results are solid: up to 7% accuracy recovery on ImageNet under W4A4, another 4% on top when combined with QAT, and improvements that hold across downstream tasks and vision-language models.
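The idea of shrinking only the dominant singular values can be sketched as follows. This is not the paper's algorithm: it assumes a one-shot shrink of the single largest singular value, found by power iteration, and the `decay` parameter, the helper names, and the toy matrix are all hypothetical. S2D itself applies its regularization over the course of fine-tuning.

```python
import math


def matvec(M, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]


def transpose(M):
    return [list(col) for col in zip(*M)]


def normalize(x):
    norm = math.sqrt(sum(xi * xi for xi in x))
    return [xi / norm for xi in x]


def top_singular_triplet(W, iters=200):
    """Power iteration on W^T W: returns (sigma, u, v) for the largest singular value."""
    Wt = transpose(W)
    v = normalize([1.0] * len(W[0]))
    for _ in range(iters):
        v = normalize(matvec(Wt, matvec(W, v)))
    Wv = matvec(W, v)
    sigma = math.sqrt(sum(x * x for x in Wv))
    u = [x / sigma for x in Wv]
    return sigma, u, v


def decay_dominant(W, decay=0.1):
    """Shrink only the top singular value by a factor (1 - decay).

    Subtracting decay * sigma * u v^T leaves all other singular directions untouched.
    """
    sigma, u, v = top_singular_triplet(W)
    return [[w - decay * sigma * ui * vj for w, vj in zip(row, v)]
            for row, ui in zip(W, u)]


# Hypothetical weight matrix with one dominant direction.
W = [[8.0, 2.0, 0.0],
     [2.0, 1.0, 0.5],
     [0.0, 0.5, 0.3]]

sigma_before, _, _ = top_singular_triplet(W)
W_new = decay_dominant(W, decay=0.1)
sigma_after, _, _ = top_singular_triplet(W_new)
print(f"largest singular value: {sigma_before:.3f} -> {sigma_after:.3f}")
```

The contrast with ordinary weight decay is that only the dominant direction shrinks; the rest of the spectrum is left alone.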

The practical implication: quantization strategy shouldn't be an afterthought after training is done. Regularization choices during training directly set the ceiling for quantization quality. If you're planning quantized deployment, design both together.

Key takeaways:

- Pretraining scale correlates with quantization difficulty: a few dominant singular values in the weight spectrum amplify activation ranges
- S2D applies spectral regularization during fine-tuning instead of post-hoc quantization fixes, recovering up to 7% accuracy under W4A4
- Quantization strategy should be front-loaded into training, not bolted on after

Source: S2D: Selective Spectral Decay for Quantization-Friendly Conditioning of Neural Activations

02 Training Most Fine-Tuning Data Selection Tricks Don't Work

Curating fine-tuning data for a target task is a crowded subfield, with a steady stream of new methods that each claim a cleverer selection strategy. Microsoft Research systematically disentangles two core components: data representation and selection algorithm. The conclusion is sobering: only gradient-based representations reliably predict downstream performance across tasks and models. Everything else is inconsistent.

Even the best combination (gradient representation + greedy round-robin) shows a clear advantage only in low-budget settings. Once you have enough data, careful selection and random sampling converge. Another overlooked issue: many papers never compare against zero-shot baselines, which systematically inflates the reported gains.
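A minimal sketch of what a gradient representation plus greedy round-robin selection might look like, under loud assumptions: the two-dimensional vectors stand in for per-example gradient features (a real pipeline would compute them from a model), cosine similarity is an assumed scoring function, and `greedy_round_robin` is a hypothetical helper rather than the paper's code.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def greedy_round_robin(candidates, targets, budget):
    """Target examples take turns; each picks its most similar unselected candidate."""
    # Each target ranks every candidate index by similarity, best first.
    rankings = [
        sorted(range(len(candidates)), key=lambda i: -cosine(candidates[i], t))
        for t in targets
    ]
    budget = min(budget, len(candidates))
    selected, chosen = [], set()
    while len(selected) < budget:
        for ranking in rankings:
            if len(selected) >= budget:
                break
            pick = next(i for i in ranking if i not in chosen)
            chosen.add(pick)
            selected.append(pick)
    return selected


# Toy stand-ins for gradient features of candidate training examples (assumed values).
candidates = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9], [-1.0, 0.0]]
# Toy stand-ins for gradient features of target-task examples (assumed values).
targets = [[1.0, 0.0], [0.0, 1.0]]

selected = greedy_round_robin(candidates, targets, budget=3)
print(selected)
```

Taking turns keeps one target example from consuming the whole budget. A random baseline at the same budget is a one-line `random.sample` over the candidate indices, which is the comparison the paper argues should always be run.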

Key takeaways:

- Gradient-based representation is the only data selection signal that works reliably across tasks; other representations aren't worth the investment
- With sufficient data budget, elaborate selection strategies yield near-zero marginal benefit over random
- Before investing in targeted instruction selection, run a zero-shot baseline to check if it's worth the effort

Source: A Critical Look at Targeted Instruction Selection: Disentangling What Matters (and What Doesn't)

03 Reasoning Token-Level RL Optimizes at the Wrong Granularity

When training LLM reasoning with RL, the standard approach treats each token as an independent "action." But think about it — writing an equation or defining a variable is a single semantic decision, split across a dozen tokens that each get scored separately. The gradient signal is inevitably noisy.

MPO (Multi-token Policy Gradient Optimization) changes the granularity: it packs K consecutive tokens into a single semantic action for policy gradient, aligning the optimization target with how reasoning actually works. On math reasoning and code generation benchmarks, MPO outperforms standard token-level policy gradient baselines. This goes deeper than hyperparameter tuning: it challenges how the action space itself is defined when applying RL to reasoning.
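A rough sketch of what packing K tokens into one action means numerically. By the chain rule, the log-probability of a K-token action is the sum of its token log-probabilities. Using that sum in a PPO-style importance ratio is an assumption made here for illustration; the source does not describe MPO's actual loss, and the function names and numbers below are hypothetical.

```python
import math


def pack_actions(token_logps, k):
    """Sum log-probs over consecutive chunks of k tokens (the last chunk may be shorter)."""
    return [sum(token_logps[i:i + k]) for i in range(0, len(token_logps), k)]


def action_ratios(new_logps, old_logps, k):
    """Importance ratio per k-token action: exp(new action log-prob - old action log-prob)."""
    new_actions = pack_actions(new_logps, k)
    old_actions = pack_actions(old_logps, k)
    return [math.exp(n - o) for n, o in zip(new_actions, old_actions)]


# Made-up per-token log-probs under the old and the updated policy.
old_logps = [-1.0, -2.0, -0.5, -0.5, -1.0]
new_logps = [-0.5, -2.5, -0.5, -0.5, -1.5]

token_level = action_ratios(new_logps, old_logps, k=1)   # one ratio per token
action_level = action_ratios(new_logps, old_logps, k=2)  # one ratio per 2-token action
print(token_level)
print(action_level)
```

In this toy case the first two tokens move in opposite directions and cancel inside their shared action, so the action-level ratio is exactly 1.0 while the token-level ratios swing above and below it.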

Key takeaways:

- Token-level optimization fundamentally mismatches reasoning granularity, producing noisy gradient signals
- MPO groups K consecutive tokens as semantic action units, aligning policy gradients with reasoning structure
- Teams doing reasoning RL should reconsider their action space definition

Source: Beyond Token-Level Policy Gradients for Complex Reasoning with Large Language Models

04 Retrieval Airbnb Turns Geo-Retrieval Into 25M-Class Classification

Airbnb's search system faces a classic two-sided marketplace retrieval problem: listings vary wildly across the globe, traveler preferences are equally diverse, and even the best ranking model is useless if the candidate set is wrong. Their previous approach used a deep Bayesian bandit to predict a rectangular retrieval region for filtering.

The new approach partitions the world map into 25 million uniform grid cells and uses extreme classification to predict which cells contain the listings most likely to be booked. This reframes the retrieval problem as massive-scale classification, dramatically narrowing the candidate set before ranking even begins. Real-world papers on two-sided marketplace retrieval at this scale are rare, which makes this a useful reference for teams working on search and recommendation architecture.
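To make the "grid cell as class label" framing concrete, here is a small sketch. The source does not say how the 25 million cells are laid out, so the 5000 by 5000 latitude/longitude grid, the function names, and the scores are all assumptions, not Airbnb's design.

```python
ROWS, COLS = 5000, 5000   # hypothetical layout: 5000 * 5000 = 25,000,000 cells


def cell_id(lat, lon):
    """Map latitude in [-90, 90] and longitude in [-180, 180] to a class ID in [0, ROWS * COLS)."""
    row = min(int((lat + 90.0) / 180.0 * ROWS), ROWS - 1)
    col = min(int((lon + 180.0) / 360.0 * COLS), COLS - 1)
    return row * COLS + col


def top_k_cells(scores, k):
    """Return the k cell IDs with the highest predicted scores (scores: cell ID -> float)."""
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Made-up classifier outputs for three cells; a real model would score far more.
paris = cell_id(48.85, 2.35)
new_york = cell_id(40.71, -74.0)
tokyo = cell_id(35.68, 139.69)
scores = {paris: 0.7, new_york: 0.2, tokyo: 0.1}

retrieval_cells = top_k_cells(scores, k=2)
print(retrieval_cells)
```

Retrieval would then keep only the listings whose cell ID falls in `retrieval_cells` and hand that reduced set to the ranker.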

Key takeaways:

- Reformulates geo-retrieval from rectangular region prediction to extreme classification over 25 million grid cells
- Core goal is filtering to high-booking-probability candidates before ranking
- The extreme heterogeneity on both supply and demand sides is the key retrieval challenge in two-sided marketplaces

Source: High Precision Audience Expansion via Extreme Classification in a Two-Sided Marketplace

Today's Observation

Three papers hit the same wall at different stages of the ML pipeline. S2D finds that quantization accuracy loss does not originate at quantization time: a few dominant singular values in the weight spectrum planted the problem during pretraining. The instruction selection teardown reveals that most of the heavily researched design choices barely matter; among data representations, the gradient-based one is the only choice that reliably makes a difference. MPO points out that token-level action spaces are mismatched with reasoning granularity, and that switching to multi-token semantic actions outperforms token-level policy gradient baselines.

The shared lesson is blunt: optimizing at the wrong level of abstraction has a ceiling. When quantization drops accuracy, check the training-time spectral properties before tweaking the quantization scheme. When your fine-tuning data selection hits a plateau, run a random baseline and see if the gap is even real. When reasoning RL won't converge, reconsider the action space granularity. Don't rush to tune hyperparameters — first make sure you're optimizing at the right level.