Today's Overview

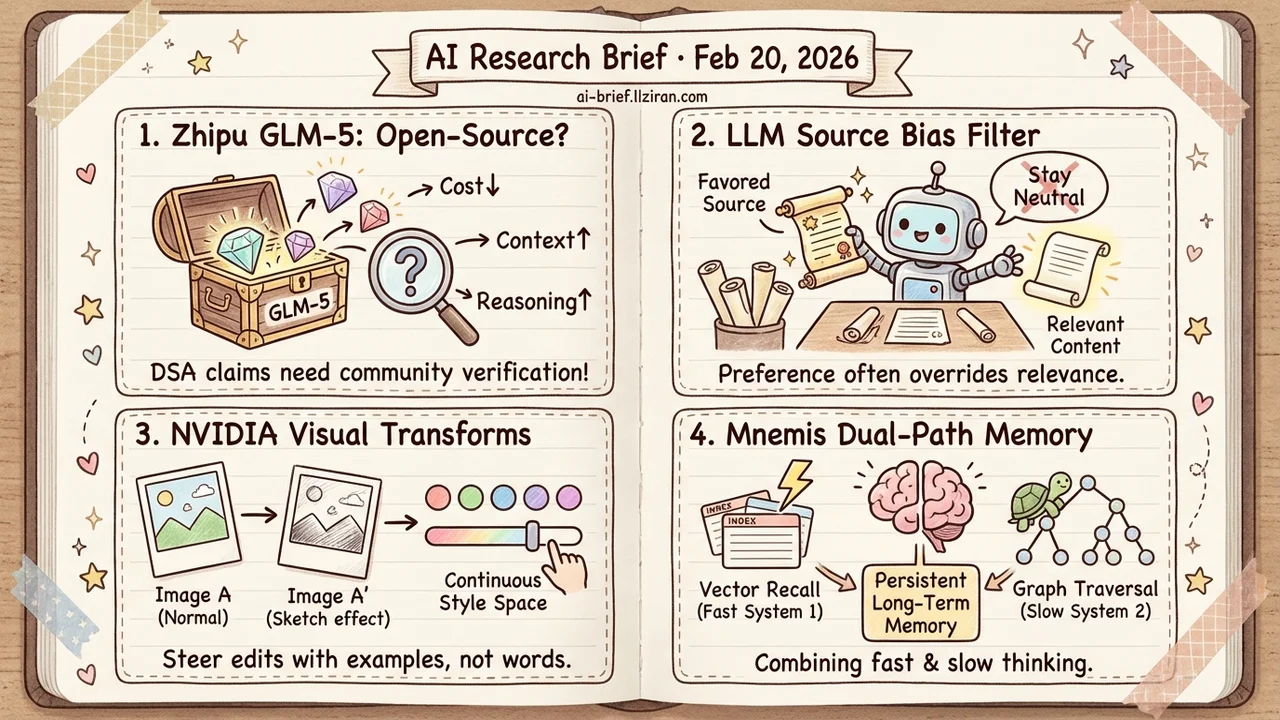

- Zhipu's GLM-5 goes open-source, but core architecture claims await verification. DSA promises lower training cost, lower inference cost, and intact long-context capability all at once, three goals that typically conflict. Community reproduction will matter more than official benchmarks.

- LLM agents systematically favor certain sources when filtering information. CMU's controlled experiments across 12 models show source preference sometimes overrides content relevance, and explicit "stay neutral" prompts don't fix it.

- LoRA basis decomposition parameterizes visual transforms as a continuous space. NVIDIA's approach lets a single pair of example images specify arbitrary visual edits — no text prompt needed for effects that are hard to describe in words.

- Dual-path memory retrieval achieves state-of-the-art on long-term memory benchmarks. Mnemis layers hierarchical graph traversal on top of vector recall, directly useful for building conversational systems with persistent memory.

Featured

01 Architecture GLM-5 Goes Open-Source — Do the Claims Hold Up?

Zhipu branded GLM-5 with a catchy subtitle — "from vibe coding to agentic engineering" — but that's marketing positioning, not a technical breakthrough. Strip away the slogan, and two architecture choices deserve scrutiny.

First, DSA (an architecture change the abstract doesn't fully detail) claims to reduce both training and inference cost while maintaining long-context capability. These three goals usually trade off against each other, and the abstract provides no concrete cost comparison numbers. Second, GLM-5 uses an asynchronous RL post-training infrastructure that decouples data generation from training, letting the model learn from longer-horizon agent interactions. The direction is sound, since data generation is often the RL training bottleneck, but the claimed efficiency gains lack independent verification.
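To make the decoupling idea concrete, here is a minimal sketch of a producer/consumer loop in which rollouts are generated on one thread and consumed by a trainer on another. It is an illustration of the general pattern only, not GLM-5's infrastructure: `rollout_worker`, `trainer`, the placeholder reward, and the version counter are all hypothetical names and stand-ins.

```python
import queue
import threading


def rollout_worker(out_q, num_episodes, policy_version):
    """Producer: simulate agent rollouts and enqueue each one as it finishes."""
    for episode in range(num_episodes):
        trajectory = {
            "episode": episode,
            # The version seen at generation time; it may be stale when trained on.
            "policy_version": policy_version["value"],
            "reward": float(episode % 3),  # placeholder reward, not a real signal
        }
        out_q.put(trajectory)
    out_q.put(None)  # sentinel: no more data


def trainer(in_q, batch_size, policy_version):
    """Consumer: update on whatever rollouts are ready, one full batch at a time."""
    batch, updates = [], []
    while True:
        item = in_q.get()
        if item is None:
            break  # an incomplete final batch is dropped in this sketch
        batch.append(item)
        if len(batch) == batch_size:
            mean_reward = sum(t["reward"] for t in batch) / batch_size
            policy_version["value"] += 1  # stand-in for a gradient step
            updates.append(mean_reward)
            batch = []
    return updates


def run(num_episodes=8, batch_size=4):
    q = queue.Queue(maxsize=16)  # bounded buffer between generation and training
    policy_version = {"value": 0}
    producer = threading.Thread(
        target=rollout_worker, args=(q, num_episodes, policy_version)
    )
    producer.start()
    updates = trainer(q, batch_size, policy_version)
    producer.join()
    return updates, policy_version["value"]
```

The design question this pattern raises is staleness: a trajectory generated under an older policy version may be trained on after several updates, which is one reason real-world gains need independent measurement.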

The abstract touts "SOTA on major open benchmarks," but self-reported numbers from a model publisher deserve skepticism until third-party reproduction arrives. The signal worth watching: code and weights are open-source, so community evaluation will come fast. That's where the real verdict on these architecture choices will land.

Key takeaways:

- DSA claims lower cost and longer context simultaneously, but the abstract lacks concrete comparison data; wait for the full paper and independent tests
- Asynchronous RL decoupling training from generation is a sound idea; the question is how much it actually helps on real agent tasks
- Model is open-source, so community reproduction will matter more than official benchmarks

Source: GLM-5: from Vibe Coding to Agentic Engineering

02 Safety LLM Agents Play Favorites With Information Sources

LLM agents increasingly serve as information intermediaries — retrieving, filtering, synthesizing, and ultimately deciding what users see. CMU ran controlled experiments across 12 models from 6 providers and found that most models, when presented with source-labeled information, systematically favor certain publishers and platforms. Explicit "stay neutral" prompts don't eliminate it.

The deeper problem: source preference sometimes overrides content relevance. Which piece of information the model surfaces partly depends on whose name is attached to it. For products using LLMs for information retrieval, recommendations, or decision support, this means actively auditing for source preference — not assuming neutrality by default.

Key takeaways:

- 12 mainstream models exhibit predictable source preferences that explicit prompting cannot eliminate
- Source preference sometimes overrides content relevance, materially affecting what users see
- Products using LLMs for information filtering need to add source bias to their audit checklist

Source: In Agents We Trust, but Who Do Agents Trust? Latent Source Preferences Steer LLM Generations

03 Image Gen One Image Pair Replaces a Thousand Words

"Turn this into a pencil sketch" is easy to say. But "that slightly faded look with film grain and cyan-shifted highlights" — text descriptions hit their ceiling fast. NVIDIA's LoRWeB does something elegant: it learns a set of LoRA basis modules, each representing a fundamental visual transformation, then linearly combines them to express arbitrary new transforms. Think of it as a continuous coordinate system for image editing.

At inference, you provide one example pair (image A → edited A'), a lightweight encoder locates the transformation direction in this coordinate space, and the same edit applies to any new image. Earlier approaches that rely on a single fixed LoRA struggle to cover diverse transformation types. Basis decomposition lets the model dynamically compose transforms it never saw during training, and the authors report generalization that outperforms existing approaches.
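The linear combination described above can be written compactly. The notation here is an assumption for illustration and may not match the paper's: $K$ basis LoRAs with low-rank factors $B_i$ and $A_i$, mixing coefficients $\alpha$ produced by a hypothetical encoder $E$ from the example pair $(a, a')$, and frozen base weights $W_0$.

```latex
\Delta W(\alpha) = \sum_{i=1}^{K} \alpha_i \, B_i A_i,
\qquad
\alpha = E(a, a'),
\qquad
W' = W_0 + \Delta W(\alpha)
```

Under this reading, a single fixed LoRA is the special case $K = 1$ with $\alpha_1 = 1$, which is why it cannot adapt to a new example pair without retraining.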

For teams building image editing products, "steer edits with example pairs" is far more precise than text prompts for effects that resist verbal description. Worth watching as an interaction paradigm.

Key takeaways:

- LoRA basis decomposition parameterizes the visual transformation space, so a single model covers diverse transforms with generalization far beyond fixed LoRA approaches
- The "example pair" paradigm handles visual effects that text prompts can't express, with strong product potential
- Dynamic basis composition at inference eliminates retraining for each new transformation type

Source: Spanning the Visual Analogy Space with a Weight Basis of LoRAs

04 Retrieval Vector Search Is Fast but Not Smart

RAG's vector similarity retrieval is fundamentally a System 1 operation — fast surface-level semantic matching that falls apart on queries requiring multi-step reasoning. Mnemis adds a "slow thinking" path: it organizes memories into a hierarchical graph structure and traverses top-down for global filtering, covering logically related information that semantic similarity alone misses.

The two paths complement each other: one handles fast recall, the other handles structured reasoning. Results on long-term memory benchmarks are strong, with 93.9 on LoCoMo and 91.6 on LongMemEval-S, both the best reported scores at the time of writing (using GPT-4.1-mini). For teams building conversational systems or personal assistants with persistent memory, this System 1 + System 2 dual-path architecture is a directly useful reference.
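The two routes can be sketched in a few lines. This is a toy illustration under stated assumptions, not Mnemis's actual algorithm: the memory store, the hierarchy, and the `is_relevant` callback (which in a real system would likely be an LLM judgment) are all hypothetical.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def vector_recall(query_vec, memories, top_k):
    """Fast route: rank memories by embedding similarity to the query."""
    ranked = sorted(memories, key=lambda m: cosine(query_vec, m["vec"]), reverse=True)
    return [m["id"] for m in ranked[:top_k]]


def graph_recall(node, is_relevant):
    """Slow route: walk the hierarchy top-down, pruning irrelevant branches."""
    if not is_relevant(node):
        return []
    if "memory_id" in node:  # leaf node holding one memory
        return [node["memory_id"]]
    found = []
    for child in node.get("children", []):
        found.extend(graph_recall(child, is_relevant))
    return found


def dual_route(query_vec, memories, root, is_relevant, top_k=1):
    fast = vector_recall(query_vec, memories, top_k)
    slow = graph_recall(root, is_relevant)
    return list(dict.fromkeys(fast + slow))  # merge, keep order, drop duplicates


# Toy data: the query is close to m1 only, but m2 is logically related.
memories = [
    {"id": "m1", "vec": [1.0, 0.0]},
    {"id": "m2", "vec": [0.0, 1.0]},
    {"id": "m3", "vec": [0.5, 0.5]},
]
root = {
    "label": "alice",
    "children": [
        {"label": "pets", "memory_id": "m1"},
        {"label": "housing", "memory_id": "m2"},
        {"label": "work", "memory_id": "m3"},
    ],
}
relevant_labels = {"alice", "pets", "housing"}
result = dual_route(
    [1.0, 0.0], memories, root, lambda n: n["label"] in relevant_labels
)
```

In this toy setup the fast route alone returns only `m1`, while the merged result also includes `m2`, which has zero embedding similarity to the query but sits in a branch judged relevant.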

Key takeaways:

- Vector similarity only covers surface semantics, so a reasoning retrieval path is needed for multi-step memory associations
- Hierarchical graph structure enables "slow thinking" retrieval without requiring a larger model
- Teams building long-term memory LLM applications should study this dual-path design

Source: Mnemis: Dual-Route Retrieval on Hierarchical Graphs for Long-Term LLM Memory

Also Worth Noting

Today's Observation

Two papers from different angles point to the same underappreciated problem. The agent source preference study finds LLM agents systematically favor certain publishers and platforms when retrieving and synthesizing information. The visual persuasion study finds VLMs are systematically influenced by specific compositions and color tones in visual decision-making.

The common thread: models aren't giving "wrong" answers. They're applying hidden preferences during the filtering process — users receive results shaped by the model's taste while believing they're seeing objective, neutral information.

This differs from traditional AI bias. Traditional bias means outputs skewed for or against demographic groups, which fairness metrics can detect. Here, models acting as information filters hold preferences about sources and visual features themselves. They're not discriminating against anyone; they're quietly injecting their own taste when picking things for you.

For teams deploying agents for information retrieval, recommendations, or procurement decisions: run a filtering preference audit before launch. Test text outputs with identical content under different source labels. Test visual decisions with controlled-variable product images. You're not auditing whether answers are accurate — you're auditing whether the filtering process itself has systematic leanings.
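The label-swap test for text outputs can be sketched as follows. This is a minimal harness under stated assumptions, not the CMU study's protocol: `rank_fn` is a hypothetical stand-in for whatever call asks your model to pick the top item, and the outlet names and texts are made up.

```python
from itertools import permutations


def audit_source_preference(rank_fn, contents, sources):
    """Return how often each source label wins the top slot across every
    assignment of labels to the same fixed set of contents."""
    wins = {s: 0 for s in sources}
    trials = 0
    for assignment in permutations(sources, len(contents)):
        items = [{"source": s, "text": t} for s, t in zip(assignment, contents)]
        top = rank_fn(items)  # hypothetical: the model's top pick among items
        wins[top["source"]] += 1
        trials += 1
    return {s: w / trials for s, w in wins.items()}


contents = ["Short summary.", "A longer, more detailed summary of the same event."]
sources = ["Outlet A", "Outlet B"]


def biased_rank(items):
    # Simulated model that always prefers one label, regardless of content.
    return next(i for i in items if i["source"] == "Outlet A")


def content_rank(items):
    # Simulated model that ignores labels and picks by content (here: length).
    return max(items, key=lambda i: len(i["text"]))


biased_rates = audit_source_preference(biased_rank, contents, sources)
neutral_rates = audit_source_preference(content_rank, contents, sources)
```

Because every label is paired with every piece of content equally often, a label-neutral ranker yields equal win rates across sources; a win rate that stays skewed toward one label is the signal to investigate.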