Today's Overview

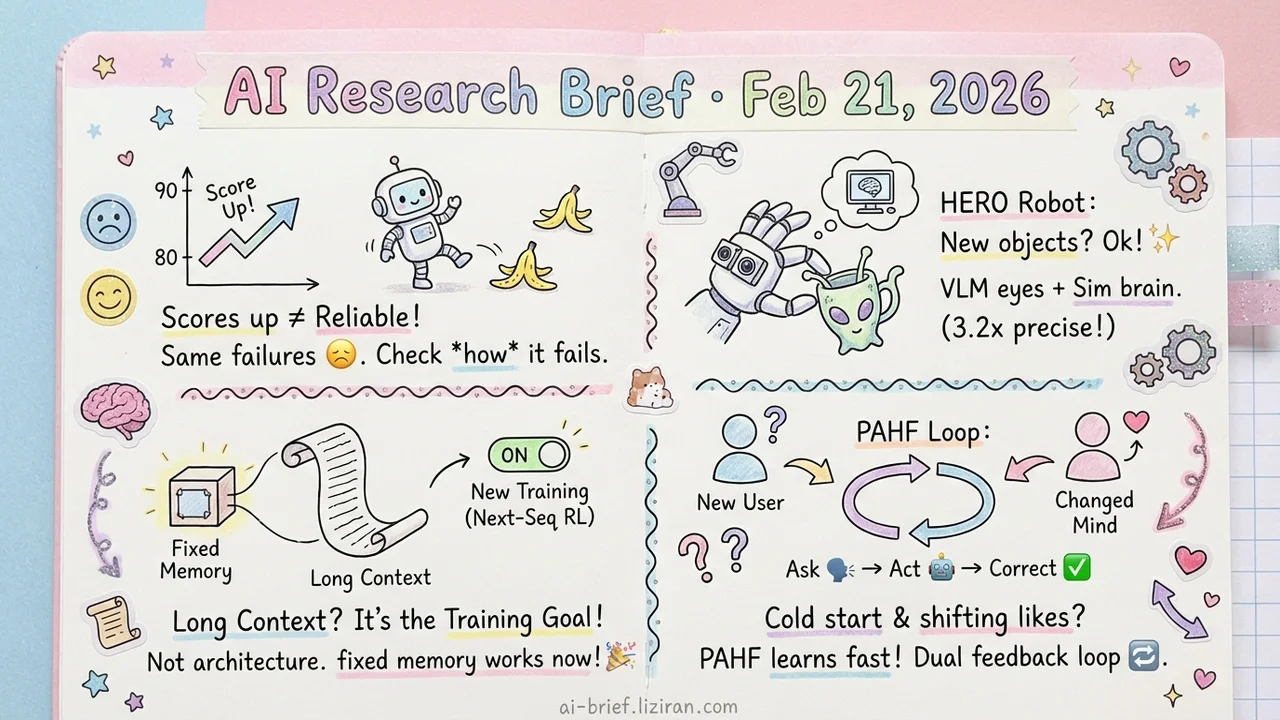

- Agents Scored 80→90, but Failure Modes Barely Changed. Testing 14 models shows capability gains don't translate to reliability gains. Demo-to-production decisions should hinge on failure conditions, not average accuracy.

- VLM + Sim RL Bypasses the Demonstration Data Bottleneck. HERO lets humanoid robots manipulate never-seen objects zero-shot, cutting end-effector tracking error by 3.2x.

- Fast Weight Long-Context Bottleneck Is the Training Objective, Not the Architecture. Switching to next-sequence prediction with RL makes fixed-memory models competitive on long-context tasks for the first time.

- Cold Start and Preference Drift, Solved in One Framework. Princeton's PAHF uses continual learning with dual feedback channels so agents keep up with shifting user preferences.

Featured

01 Agent Benchmarks Keep Climbing. Reliability Doesn't.

Agent benchmark scores go up every year. Teams that actually deploy agents to production know better: 85% accuracy doesn't make an agent reliable. This paper identifies a fundamental flaw in current evaluation: a single success-rate metric compresses consistency (are results stable across runs?), robustness (does a small input change break things?), predictability (do failures follow patterns?), and error severity (how bad is a failure?) into one number.

The authors borrow from safety-critical engineering to propose 12 metrics across four dimensions. Results from 14 models on two benchmarks aren't encouraging: recent capability improvements brought only marginal reliability gains. An agent jumping from 80% to 90% on a benchmark may fail in almost exactly the same ways, with the same consequences.

For teams pushing agents from demo to production, this reframes the evaluation question. Stop asking "what's the average success rate?" Start asking "under what conditions does it fail, and how bad is each failure?"
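To make the gap between those two questions concrete, here is a minimal Python sketch. It is not the paper's 12 metrics. It assumes a hypothetical log of repeated runs per task (`agent_a`, `agent_b`, and all three function names are illustrative) and compares the average success rate with the share of tasks that pass on every run.

```python
def success_rate(runs):
    """Average success over every (task, run) pair."""
    outcomes = [ok for task_runs in runs.values() for ok in task_runs]
    return sum(outcomes) / len(outcomes)


def always_pass_rate(runs):
    """Share of tasks that succeed on every repeated run."""
    return sum(all(task_runs) for task_runs in runs.values()) / len(runs)


def tasks_with_failures(runs):
    """Tasks that failed on at least one run."""
    return sorted(task for task, task_runs in runs.items() if not all(task_runs))


# Hypothetical logs: 5 tasks x 4 runs each, 1 = success, 0 = failure.
agent_a = {
    "t1": [1, 1, 1, 1], "t2": [1, 1, 1, 1], "t3": [1, 1, 1, 1],
    "t4": [1, 0, 1, 0], "t5": [1, 0, 1, 0],
}
agent_b = {
    "t1": [1, 1, 1, 1], "t2": [1, 1, 1, 1], "t3": [1, 1, 1, 1],
    "t4": [1, 1, 1, 0], "t5": [1, 1, 0, 1],
}

for name, runs in (("A", agent_a), ("B", agent_b)):
    print(name, success_rate(runs), always_pass_rate(runs), tasks_with_failures(runs))
```

In this made-up data the average climbs from 0.8 to 0.9, yet the share of tasks that pass every run stays at 0.6 and the same two tasks keep failing. A real evaluation would also log how severe each failure is, which this sketch leaves out.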

Key takeaways:
- Single accuracy scores hide shortfalls in consistency, robustness, predictability, and safety
- 14-model evaluation shows capability gains don't deliver proportional reliability gains
- Demo-to-production decisions should weigh failure conditions and error severity, not averages

Source: Towards a Science of AI Agent Reliability

02 Robots Handle Unseen Objects Without More Demo Data

The biggest bottleneck in training robot manipulation isn't the control algorithm. It's the data. Real-world demonstrations don't scale. HERO takes a different approach: a VLM handles object recognition, sim-trained RL handles motor control, and the two combine modularly.

The core innovation is end-effector tracking. It fuses inverse kinematics with a learned neural forward model, plus goal adjustment and replanning. Tracking error drops 3.2x. The practical result: a humanoid walks into an office or coffee shop, encounters mugs, apples, and toys it has never seen, on tables ranging from 43 cm to 92 cm, and manipulates them reliably. This complements last week's Xiaomi Robotics-0 work on inference latency from a different angle: HERO targets the "never seen it before" generalization problem.

Key takeaways:
- VLM + sim-to-real modular design bypasses the demonstration data bottleneck entirely
- 3.2x reduction in end-effector tracking error is the precision breakthrough that makes this work
- VLMs as the perception front-end for robots are becoming the default path for embodied intelligence

Source: Learning Humanoid End-Effector Control for Open-Vocabulary Visual Loco-Manipulation

03 Change the Training Objective, Fix Long-Context for Fixed-Memory Models

Fast weight architectures store context in fixed-size memory, which in theory suits long contexts perfectly: memory cost doesn't grow with sequence length. In practice, they've underperformed. The problem isn't the architecture. It's the training objective.

Standard next-token prediction (NTP) optimizes for one token at a time. This fragments the context stored in fast weights and loses cross-token semantic relationships. REFINE replaces NTP with next-sequence prediction: it selects key positions based on prediction entropy, generates multi-token sequences, then applies GRPO reinforcement learning for sequence-level optimization. On LaCT-760M and DeltaNet-1.3B, this consistently beats NTP fine-tuning baselines on needle-in-a-haystack retrieval and LongBench tasks.
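The two ingredients named here, entropy-based position selection and group-relative reward normalization, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not REFINE's implementation: the digest does not say whether high- or low-entropy positions are chosen, so picking the top-k highest is an assumption, and the function names, toy probabilities, and rewards are all hypothetical.

```python
import math
from statistics import mean, pstdev


def entropy(probs):
    """Shannon entropy (in nats) of one next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_positions(prob_rows, k):
    """Return the k positions with the highest prediction entropy (assumed rule)."""
    scores = [entropy(row) for row in prob_rows]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


def group_relative_advantages(rewards, eps=1e-8):
    """GRPO-style normalization: center and scale rewards within one sampled group."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Toy next-token distributions at four context positions (vocabulary of 4).
prob_rows = [
    [0.97, 0.01, 0.01, 0.01],  # confident prediction, low entropy
    [0.25, 0.25, 0.25, 0.25],  # uniform, maximum entropy
    [0.70, 0.10, 0.10, 0.10],
    [0.40, 0.30, 0.20, 0.10],
]
positions = select_positions(prob_rows, k=2)

# Hypothetical sequence-level rewards for four rollouts from one position.
advantages = group_relative_advantages([1.0, 0.0, 1.0, 0.0])
print(positions, advantages)
```

In a full pipeline, each selected position would seed several multi-token rollouts, and the normalized advantages would weight the policy update. How REFINE scores a rollout is not covered in this digest.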

The method works at mid-pretraining, post-training, and test-time training stages. That breadth suggests generality, though validation is limited to two models so far. Teams tracking long-context efficiency should watch for larger-scale results.

Key takeaways:
- The long-context bottleneck for fast weight architectures lies in training objectives, not architectural design
- Next-sequence prediction with RL gives fixed-memory models their first practical competitiveness on long-context tasks
- Validated on two models only; larger-scale confirmation is still needed

Source: Reinforced Fast Weights with Next-Sequence Prediction

04 Your Agent's Memory Can't Keep Up with Changing Preferences

Anyone who's built an agent product knows the two persistent headaches: new users arrive with no history (cold start), and returning users change their minds but the model still runs on stale preferences (preference drift). Existing approaches either train implicit preference models from interaction history or encode user profiles into external memory. Each solves half the problem.

Princeton's PAHF proposes a continual learning framework with a three-step loop: proactively ask before acting to resolve ambiguity, decide based on stored preferences during execution, then update memory from post-action feedback. The key design is dual feedback channels. Pre-action clarification and post-action correction both feed into memory. Experiments show this learns faster than single-channel baselines and tracks preference changes over time.
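The three-step loop can be sketched as a toy Python class. This is a sketch of the idea only, not PAHF's code: `PreferenceAgent`, the dictionary memory, and the `ask` and `get_correction` callbacks are hypothetical stand-ins for whatever the framework actually uses.

```python
class PreferenceAgent:
    """Toy agent with two feedback channels feeding one preference memory."""

    def __init__(self):
        self.memory = {}  # (user, topic) -> stored preference

    def step(self, user, topic, ask, get_correction):
        key = (user, topic)
        # 1. Pre-action clarification: ask only when nothing is stored (cold start).
        if key not in self.memory:
            self.memory[key] = ask(topic)
        # 2. Act on the stored preference.
        choice = self.memory[key]
        # 3. Post-action correction: overwrite memory when the user pushes back (drift).
        correction = get_correction(choice)
        if correction is not None and correction != choice:
            self.memory[key] = correction
        return choice


agent = PreferenceAgent()
questions = []


def ask(topic):
    """Record the clarifying question and return a canned answer."""
    questions.append(topic)
    return "oat milk"


first = agent.step("ana", "milk", ask, lambda choice: None)            # cold start
second = agent.step("ana", "milk", ask, lambda choice: "almond milk")  # user corrects
third = agent.step("ana", "milk", ask, lambda choice: None)            # memory updated
print(first, second, third, len(questions))
```

The agent asks once, acts on the stale preference once, then follows the corrected one. A real system would also need to decide when a question is worth the interruption, which this toy skips.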

The approach is sound, but it's only been tested on embodied manipulation and online shopping benchmarks. Real consumer scenarios will be significantly messier.

Key takeaways:
- Cold start and preference drift are the two core bottlenecks for agent personalization; PAHF addresses both with continual learning
- Dual feedback (pre-action questions + post-action corrections) significantly outperforms single-channel learning
- Consumer-facing agent teams can reference this framework, but real-world complexity validation is still missing

Also Worth Noting

Today's Observation

Three unrelated papers today dissect the same underlying problem from different angles: agent "reliability" can't be captured in a single number.

The Agent Reliability paper splits operational reliability into four axes: consistency, robustness, predictability, and error severity. PAHF addresses temporal reliability: user preferences shift, and the agent must keep pace. The dynamic confidence scoring work in Also Worth Noting tackles evidential reliability: memory entries may be stale or contradictory, requiring real-time trust assessment.

This mirrors how software engineering matured. SLAs aren't a single number. They decompose into availability, latency, throughput, and consistency. Agent "quality" is undergoing the same decomposition: from "can it complete the task?" to "at what quality does it complete the task?" And "quality" itself splits into independently measurable, independently optimizable sub-problems.

If you're building an agent product, here's one concrete step. Stop fixating on end-to-end success rate. Monitor reliability across at least three independent dimensions: operational consistency (run the same input ten times, are results stable?), preference tracking accuracy (is the user correcting you less often over time?), and memory trustworthiness (how much retrieved context is stale?). Whichever dimension turns out to be the bottleneck calls for a different optimization strategy.
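As a starting point, each of the three dimensions reduces to a small counter. The Python sketch below is one possible way to compute them. The function names, the 30-day freshness cutoff, and the sample logs are all assumptions made for illustration.

```python
from collections import Counter


def run_agreement(outputs):
    """Share of repeated runs that match the most common output."""
    most_common_count = Counter(outputs).most_common(1)[0][1]
    return most_common_count / len(outputs)


def correction_rates(corrected):
    """Correction rate in the first and second half of a log (needs 2+ entries)."""
    half = len(corrected) // 2
    early, late = corrected[:half], corrected[half:]
    return sum(early) / len(early), sum(late) / len(late)


def stale_fraction(ages_days, max_age_days):
    """Share of retrieved memory entries older than the freshness cutoff."""
    return sum(age > max_age_days for age in ages_days) / len(ages_days)


# Operational consistency: the same input run ten times.
outputs = ["refund"] * 9 + ["escalate"]
# Preference tracking: 1 = the user corrected the agent on that interaction.
corrected = [1, 1, 0, 1, 0, 0, 0, 1, 0, 0]
# Memory trustworthiness: age in days of each retrieved memory entry.
ages_days = [1, 3, 40, 90]

print(run_agreement(outputs))
print(correction_rates(corrected))
print(stale_fraction(ages_days, max_age_days=30))
```

Tracked over time, the three numbers point at different fixes: low agreement suggests sampling or prompt instability, a flat correction rate suggests the preference loop isn't learning, and a high stale fraction suggests memory needs expiry or re-validation.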