Today's Overview

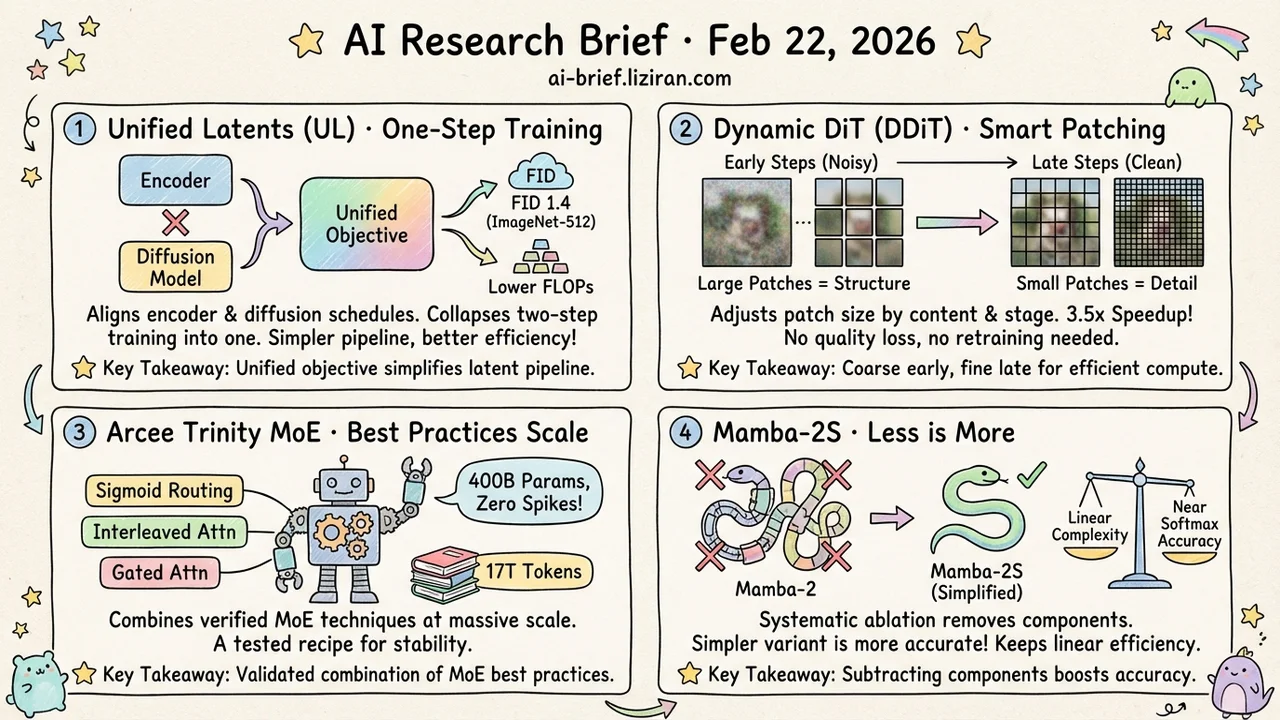

- Latent Diffusion's Two-Step Training Collapses Into One. Aligning encoder output noise with the diffusion schedule yields a unified objective. FID 1.4 on ImageNet-512 with lower training FLOPs.

- DiT Denoising Doesn't Need Fine-Grained Patches at Every Step. DDiT adjusts patch size by content complexity and denoising stage. Up to 3.5x speedup, no quality loss, no retraining.

- A Full Validation of MoE Best Practices at Scale. Arcee Trinity combines sigmoid routing, interleaved attention, and gated attention in a 400B-parameter model trained on 17T tokens with zero loss spikes.

- Mamba-2 Gets More Accurate by Removing Components. Systematic ablation produces a simplified variant that nearly matches softmax attention while keeping linear complexity.

Featured

01 Unify the Encoder and Diffusion, Train Once

Standard latent diffusion trains the encoder/decoder first, then the diffusion model. Two stages, two separate objectives. Unified Latents (UL) aligns the encoder's output noise level with the diffusion prior's minimum noise level. That alignment yields a single training objective: a tight upper bound on latent bitrate.
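One way to picture the noise-level alignment is the sketch below. It assumes a variance-exploding parameterization (noisy latent = clean latent + sigma * Gaussian noise) and a geometric schedule, neither of which is taken from the paper; all function names are hypothetical. It does not implement the bitrate bound or the training objective. It only shows that an encoder sample carrying noise at the schedule's minimum level already sits on the diffusion trajectory, so any higher level can be reached by adding more noise.

```python
import math
import random


def sigma_schedule(sigma_min: float, sigma_max: float, num_levels: int) -> list[float]:
    """Geometric noise levels from sigma_min up to sigma_max (illustrative choice)."""
    ratio = (sigma_max / sigma_min) ** (1 / (num_levels - 1))
    return [sigma_min * ratio**i for i in range(num_levels)]


def encode(mean: list[float], sigma_min: float, rng: random.Random) -> list[float]:
    """Hypothetical encoder output: a deterministic mean plus Gaussian noise
    at the diffusion prior's minimum noise level."""
    return [m + sigma_min * rng.gauss(0.0, 1.0) for m in mean]


def extra_noise_std(sigma_t: float, sigma_min: float) -> float:
    """Std of the additional noise needed to move an encoder sample from
    sigma_min to sigma_t. Independent Gaussian variances add."""
    if sigma_t < sigma_min:
        raise ValueError("sigma_t must be at least sigma_min")
    return math.sqrt(sigma_t**2 - sigma_min**2)


def diffuse_from_latent(z: list[float], sigma_t: float, sigma_min: float,
                        rng: random.Random) -> list[float]:
    """Noise an encoder sample up to level sigma_t without access to the clean mean."""
    std = extra_noise_std(sigma_t, sigma_min)
    return [z_i + std * rng.gauss(0.0, 1.0) for z_i in z]


rng = random.Random(0)
levels = sigma_schedule(sigma_min=0.05, sigma_max=10.0, num_levels=8)
z = encode([0.3, -1.2, 0.7], sigma_min=levels[0], rng=rng)
z_noisier = diffuse_from_latent(z, sigma_t=levels[3], sigma_min=levels[0], rng=rng)
```

At the lowest level no extra noise is needed, which is the sense in which the encoder output and the diffusion prior "meet" under these assumptions.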

UL reaches FID 1.4 on ImageNet-512 while keeping reconstruction quality high, and it needs fewer training FLOPs than equivalent models trained on Stable Diffusion latents. On video, FVD 1.3 on Kinetics-600 sets a new record.

The real value isn't any single benchmark number. Collapsing "two-step separate training" into "one-step unified training" simplifies the entire pipeline. If this generalizes beyond images and video to other modalities such as 3D generation, the impact extends well beyond these metrics. More downstream validation is needed.

Key takeaways:

- Unifies encoder and diffusion training into a single objective, simplifying the latent space pipeline

- More efficient than equivalent models trained on Stable Diffusion latents

- Sets a new video generation SOTA, but generalization to other modalities needs further validation

Source: Unified Latents (UL): How to Train Your Latents

02 DiT Doesn't Need Fine-Grained Patches at Every Step

Early denoising steps process near-pure noise. Using the smallest patches to model global structure pixel by pixel is like examining a blank wall through a magnifying glass. DDiT takes the obvious fix: large patches early to sketch structure, small patches later to refine detail. Granularity adapts to content complexity and denoising stage.
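A minimal sketch of the coarse-to-fine idea, to show why larger early patches cut compute. The patch sizes, the equal-length stages, and the function names are illustrative assumptions, not values or APIs from DDiT; the sketch schedules by denoising stage only and ignores the content-complexity signal the paper also uses. Attention cost is approximated as proportional to the square of the token count.

```python
def patch_size_for_step(step: int, num_steps: int, sizes=(8, 4, 2)) -> int:
    """Pick a patch size for a denoising step: largest first, smallest last.

    The trajectory is split into equal-length stages, one per entry in sizes.
    """
    if not 0 <= step < num_steps:
        raise ValueError("step must be in [0, num_steps)")
    stage = step * len(sizes) // num_steps
    return sizes[stage]


def token_count(height: int, width: int, patch: int) -> int:
    """Tokens produced by cutting a height x width latent into patch x patch tiles."""
    if height % patch or width % patch:
        raise ValueError("latent size must be divisible by the patch size")
    return (height // patch) * (width // patch)


def relative_attention_cost(height: int, width: int, num_steps: int,
                            sizes=(8, 4, 2)) -> float:
    """Scheduled self-attention cost divided by the cost of always using
    the smallest patch (cost per step taken as tokens squared)."""
    fine_tokens = token_count(height, width, min(sizes))
    baseline = num_steps * fine_tokens**2
    scheduled = sum(
        token_count(height, width, patch_size_for_step(s, num_steps, sizes)) ** 2
        for s in range(num_steps)
    )
    return scheduled / baseline


# Example: a 64x64 latent over 30 steps with the assumed sizes above.
cost_ratio = relative_attention_cost(64, 64, num_steps=30)
```

Under these toy assumptions the attention cost falls to roughly a third of the fixed-patch baseline. That figure comes from the made-up schedule here and should not be read as a reproduction of the paper's measured speedups.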

No retraining required. Drop it into any existing DiT. FLUX-1.Dev gets a 3.52x speedup; Wan 2.1, 3.2x. Generation quality and prompt adherence stay intact.

No architecture changes, no weight modifications — just a different tokenization strategy that eliminates most redundant computation. For video generation workloads, the cost savings compound fast.

Key takeaways:

- Coarse patches early, fine patches late: allocates compute by need instead of uniformly

- Plug-and-play on deployed DiT models, no retraining needed

- 3x+ speedup on FLUX and Wan with no quality loss, especially valuable for video generation costs

Source: DDiT: Dynamic Patch Scheduling for Efficient Diffusion Transformers

03 MoE Best Practices, Validated at 400B Parameters

Individual MoE techniques are no longer scarce. Sigmoid routing, interleaved local/global attention, gated attention, depth-scaled sandwich norm — each backed by its own paper. Arcee Trinity's contribution is combining them all, then running the combination at three scales (6B/26B/400B total parameters, 1B to 13B active) across 17 trillion tokens. Zero loss spikes.

This isn't a single method breakthrough. It's a complete snapshot of current MoE best practices under real training conditions. Two details worth tracking: SMEBU (momentum-based expert bias updates with soft clamping) for load balancing, and the choice of Muon as optimizer.
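For readers unfamiliar with the moving parts, here is a rough sketch of sigmoid routing with a bias-based load balancer. The source only describes SMEBU as "momentum-based expert bias updates with soft clamping", so everything specific below is an assumption: the tanh clamp, the relative-load error signal, the hyperparameters, and the choice to let the bias affect expert selection but not gate weights. It is not Arcee's implementation.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def route(logits: list[float], bias: list[float], k: int) -> dict[int, float]:
    """Sigmoid routing: each expert is scored independently (no softmax
    competition). The bias shifts which experts are selected; gate weights
    use the unbiased scores, normalized over the chosen experts (assumption)."""
    scores = [sigmoid(z) for z in logits]
    ranked = sorted(range(len(scores)), key=lambda e: scores[e] + bias[e], reverse=True)
    chosen = ranked[:k]
    total = sum(scores[e] for e in chosen)
    return {e: scores[e] / total for e in chosen}


def update_bias(bias: list[float], momentum: list[float], load: list[float],
                lr: float = 0.01, beta: float = 0.9, clamp: float = 1.0):
    """Hypothetical momentum update with a tanh soft clamp.

    Underloaded experts get a positive push, overloaded ones a negative push;
    tanh keeps every bias strictly inside (-clamp, clamp) without a hard cutoff.
    """
    target = sum(load) / len(load)
    new_bias, new_momentum = [], []
    for b, m, n in zip(bias, momentum, load):
        error = (target - n) / max(target, 1e-9)   # > 0 when the expert is underused
        m = beta * m + (1.0 - beta) * error        # exponential moving average
        b = clamp * math.tanh((b + lr * m) / clamp)
        new_bias.append(b)
        new_momentum.append(m)
    return new_bias, new_momentum


# Usage: route one token, then nudge biases after observing per-expert load.
gates = route([0.0, 2.0, -1.0, 1.0], bias=[0.0] * 4, k=2)
bias, mom = update_bias([0.0, 0.0], [0.0, 0.0], load=[10.0, 0.0])
```

The momentum term smooths noisy per-batch load counts, and a soft clamp avoids the discontinuity a hard clip would introduce. Whether those are the exact motivations in the report is not stated in this digest.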

For teams making architecture decisions right now, this technical report answers the question individual papers can't: "Do these things actually work together?"

Key takeaways:

- Sigmoid routing + interleaved attention + gated attention validated across 17T tokens at three scales, zero loss spikes

- Not a single-method innovation: a tested combination of current MoE best practices

- Teams choosing architectures can reference this as a verified recipe

Source: Arcee Trinity Large Technical Report

04 Mamba Gets Better by Removing Parts

When linear attention falls short, the instinct is to add: more complex gating, more attention heads, hybrid architectures. 2Mamba2Furious goes the other direction. It systematically strips components from Mamba-2, testing what actually matters for accuracy.

The surprise: the simplified Mamba-2S performs better, not worse. Improving the A-mask and increasing hidden state order nearly closes the gap with softmax attention, while retaining linear complexity's memory efficiency on long sequences. The authors also found configurations where Mamba exceeds softmax attention accuracy.
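For context on how a decay mask and linear complexity fit together, the sketch below assumes that "A-mask" refers to the lower-triangular decay mask induced by Mamba-2's per-step A values, as in the standard Mamba-2 formulation; the digest does not define the term. It shows the baseline equivalence only: a linear-time recurrence and a quadratic masked-attention form produce the same outputs. It does not include the paper's mask improvement or its higher-order hidden state. Values are scalars per step to keep it short.

```python
def dot(x: list[float], y: list[float]) -> float:
    return sum(a * b for a, b in zip(x, y))


def recurrent_form(q, k, v, a):
    """Linear-time scan: h_t = a_t * h_{t-1} + k_t * v_t, then y_t = q_t . h_t."""
    h = [0.0] * len(k[0])
    ys = []
    for q_t, k_t, v_t, a_t in zip(q, k, v, a):
        h = [a_t * h_i + k_i * v_t for h_i, k_i in zip(h, k_t)]
        ys.append(dot(q_t, h))
    return ys


def decay_mask(a):
    """Lower-triangular mask with M[t][s] = a_{s+1} * ... * a_t and M[t][t] = 1."""
    n = len(a)
    mask = [[0.0] * n for _ in range(n)]
    for t in range(n):
        prod = 1.0
        for s in range(t, -1, -1):
            mask[t][s] = prod
            prod *= a[s]
    return mask


def masked_attention_form(q, k, v, a):
    """Quadratic form: y_t = sum over s <= t of M[t][s] * (q_t . k_s) * v_s."""
    mask = decay_mask(a)
    return [
        sum(mask[t][s] * dot(q[t], k[s]) * v[s] for s in range(t + 1))
        for t in range(len(v))
    ]


# Toy inputs: 4 steps, 2-dimensional queries and keys, scalar values.
q = [[1.0, 0.5], [0.2, -1.0], [0.7, 0.3], [-0.4, 0.9]]
k = [[0.3, 1.0], [-0.6, 0.4], [1.1, -0.2], [0.5, 0.5]]
v = [1.0, -2.0, 0.5, 3.0]
a = [0.9, 0.8, 0.95, 0.7]

y_scan = recurrent_form(q, k, v, a)
y_mask = masked_attention_form(q, k, v, a)
```

The scan keeps a fixed-size state regardless of sequence length, which is where the memory advantage on long sequences comes from; the masked form makes the comparison with softmax attention explicit.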

Key takeaways:

- Subtracting from Mamba-2 rather than adding to it brings accuracy closer to softmax attention

- A-mask improvements and higher hidden state order are the key factors; not every component earns its keep

- Finding the right minimal recipe beats stacking patches on the efficiency-accuracy tradeoff

Source: 2Mamba2Furious: Linear in Complexity, Competitive in Accuracy

Today's Observation

Three of today's featured papers (01, 02, and 04) do the same thing in three different parts of the pipeline: find a one-size-fits-all default and replace it with something adaptive.

UL discovers that separate training stages for the encoder and the diffusion model are an unnecessary split. Align the noise levels and training becomes more efficient. DDiT discovers that minimum patch size at every denoising step is unnecessary uniformity. Adapt granularity by stage and content, and most computation vanishes. 2Mamba2Furious discovers that many Mamba-2 components are unnecessary complexity. Strip them out and accuracy goes up.

Same pattern: existing designs are full of "uniform processing" defaults that aren't optimized choices. They're whatever was convenient at implementation time. Replacing fixed granularity with adaptive granularity consistently reveals that most of the computation was redundant.

Audit the uniform-processing stages in your own pipeline: fixed token lengths, undifferentiated inference steps across stages, same batch configuration for all inputs. Ask whether each processing granularity is a validated optimum or just a default. The latter is where efficiency gains live.